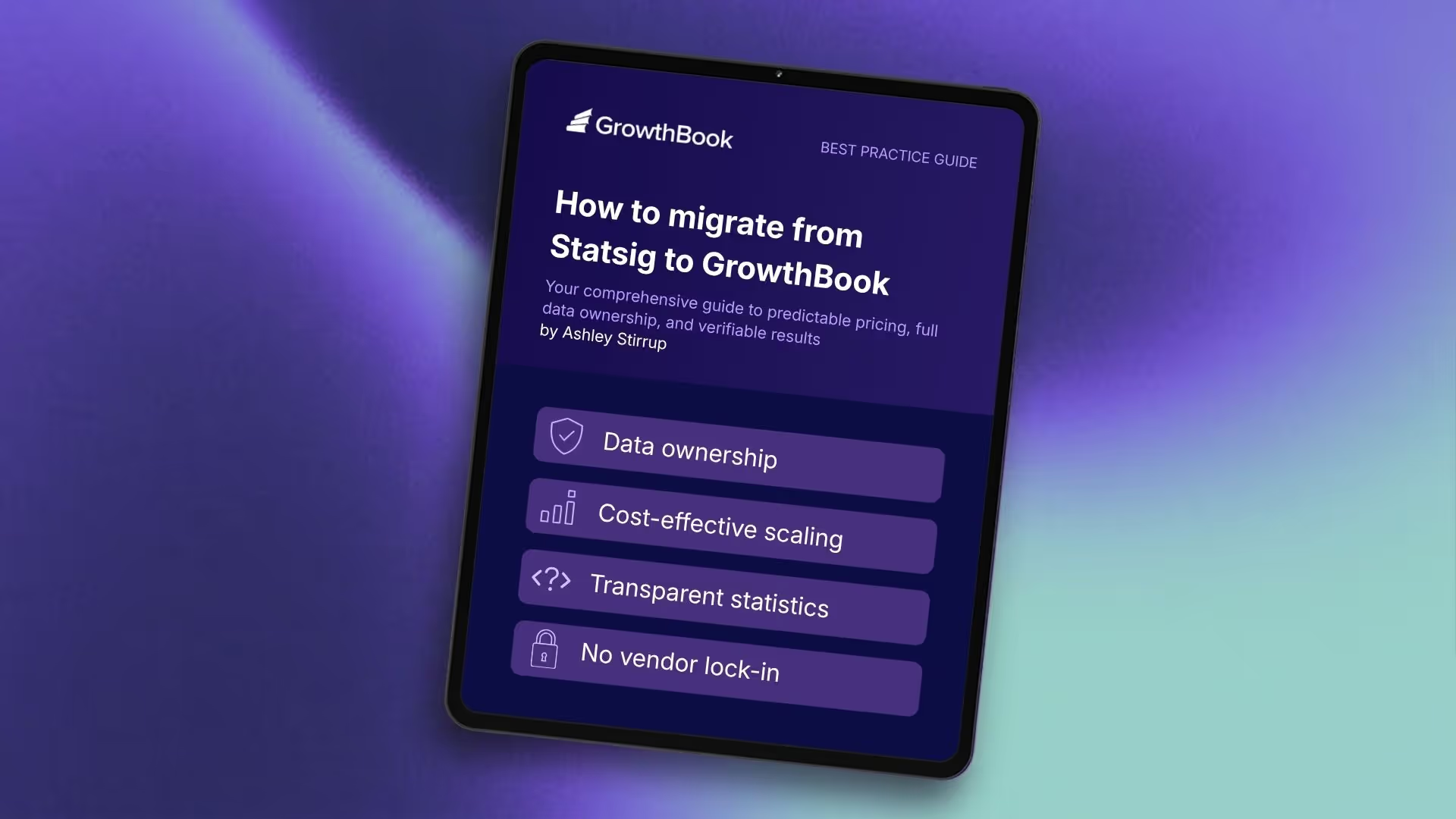

How to migrate from Statsig to GrowthBook

The industry's only open-source, warehouse-native experimentation platform gives you predictable pricing, full data ownership, and results you can verify. Here's why Statsig customers are switching to GrowthBook.

When OpenAI acquired Statsig, engineering leaders at hundreds of companies started asking the same question: what happens to our data?

It's a fair question. Statsig routes all event data through its own servers. With that infrastructure now under OpenAI's control — and Statsig's CEO gone — teams that cared about data governance found themselves re-evaluating a platform they'd built their experimentation programs on. Add event-based pricing that climbs as you scale, and the calculus tilts further.

GrowthBook is where many of them land. This post explains what GrowthBook offers, what the migration looks like in practice, and how to decide whether switching from Statsig to GrowthBook makes sense for your team.

What is the GrowthBook open-source platform?

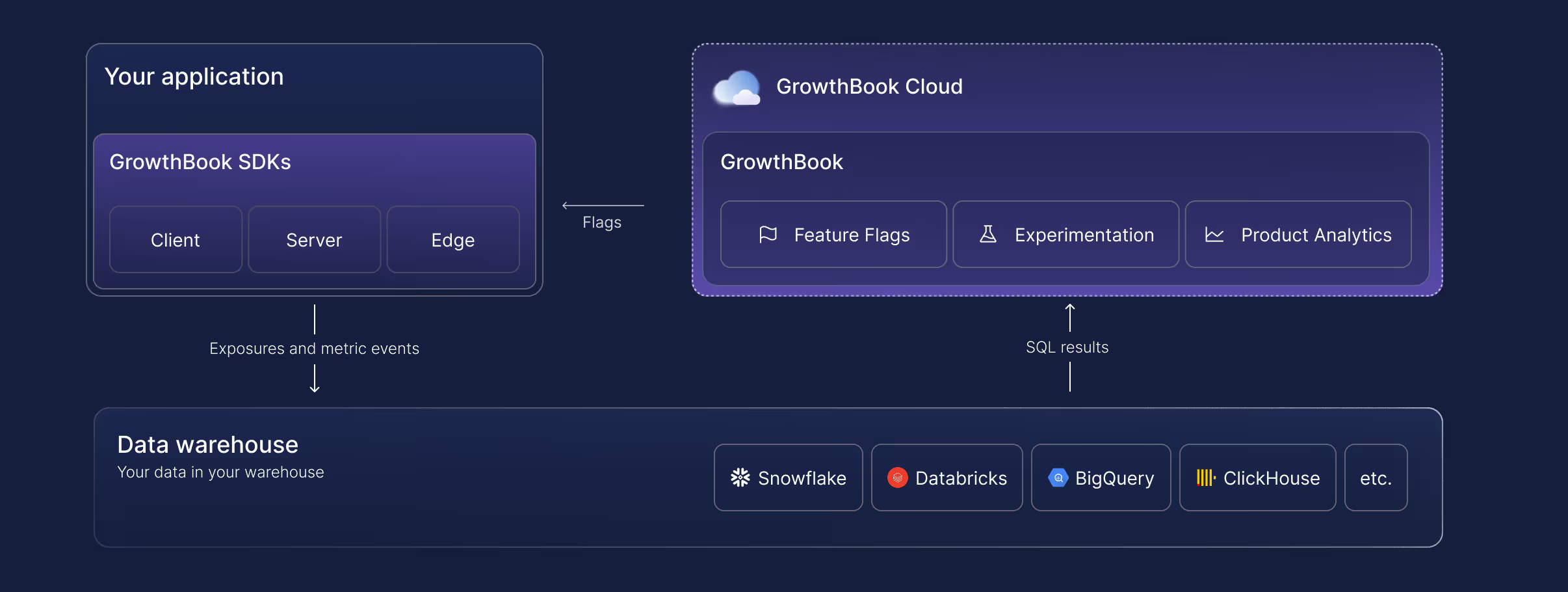

GrowthBook is an open-source feature flag, experimentation, and product analytics platform. It is the original warehouse-native platform, trusted by more than 3,000 companies, including Dropbox, Khan Academy, Upstart, Sony, and Wikipedia. It handles over 100 billion feature flag lookups per day.

The warehouse-native architecture is the defining design choice. Rather than copying your data into its own system, GrowthBook queries your data where it already lives — Snowflake, BigQuery, Databricks, Redshift, ClickHouse, Postgres, and more. Analysis runs in your warehouse with read-only access. Every SQL query is visible. Every result is reproducible.
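To make "analysis runs in your warehouse" concrete: the heavy lifting is an aggregation over rows your warehouse already holds, and only per-variation summaries (user count, sum, sum of squares) need to come back from the query. The sketch below is a hypothetical in-memory TypeScript stand-in for that aggregation. The row shapes and function names are assumptions for illustration, not GrowthBook's actual queries or API, and it assumes one assignment row per user.

```typescript
// Hypothetical row shapes standing in for warehouse tables.
interface Assignment { userId: string; variation: string; }
interface MetricEvent { userId: string; value: number; }

// Per-variation summary: enough to compute means and variances
// without moving user-level rows out of the warehouse.
interface VariationStats { variation: string; users: number; sum: number; sumSquares: number; }

function aggregateByVariation(assignments: Assignment[], events: MetricEvent[]): VariationStats[] {
  // Total metric value per user (like GROUP BY user_id over the events table).
  const perUser = new Map<string, number>();
  for (const e of events) {
    perUser.set(e.userId, (perUser.get(e.userId) ?? 0) + e.value);
  }
  // Roll user totals up to variation level (like LEFT JOIN + GROUP BY variation).
  const stats = new Map<string, VariationStats>();
  for (const a of assignments) {
    const v = perUser.get(a.userId) ?? 0; // users with no events count as 0
    const s = stats.get(a.variation) ?? { variation: a.variation, users: 0, sum: 0, sumSquares: 0 };
    s.users += 1;
    s.sum += v;
    s.sumSquares += v * v;
    stats.set(a.variation, s);
  }
  return Array.from(stats.values());
}

// Mean and sample variance recovered from the summary alone.
function meanAndVariance(s: VariationStats): { mean: number; variance: number } {
  const mean = s.sum / s.users;
  const variance = (s.sumSquares - s.users * mean * mean) / (s.users - 1);
  return { mean, variance };
}

const stats = aggregateByVariation(
  [
    { userId: "u1", variation: "control" },
    { userId: "u2", variation: "control" },
    { userId: "u3", variation: "treatment" },
  ],
  [
    { userId: "u1", value: 10 },
    { userId: "u1", value: 5 },
    { userId: "u3", value: 20 },
  ],
);
console.log(stats);
```

Only the three numbers per variation leave the aggregation step, which is the property that keeps raw event data in your environment.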

That's the short version. The longer version explains why it matters when you're evaluating a replacement.

4 reasons teams switch from Statsig

1. Lack of data ownership

Statsig's architecture requires sending event data to Statsig's servers for analysis. That worked when Statsig was an independent company. Under OpenAI ownership, with no published data firewall policy between Statsig customer data and OpenAI's AI training, the risk profile changed.

GrowthBook inverts the model. Your data warehouse holds the data. GrowthBook reads aggregate statistics from it, using read-only credentials. Raw PII stays in your environment, under your control, and subject to your compliance policies. For teams operating under GDPR, HIPAA, CCPA, COPPA, or data residency requirements, this distinction is operational, not philosophical.

John Resig, Chief Software Architect at Khan Academy, described exactly this concern: the ability to retain data ownership was, in his words, "very, very important," because most platforms require passing user data to a third-party service.

GrowthBook's self-hosted deployment takes it further. Deploy within your own infrastructure, behind your own firewall, with zero external data egress. Fully air-gapped deployments are supported for the most sensitive environments.

2. Expensive to scale

Statsig prices on events and traffic. That structure makes sense when you're running a handful of experiments on modest traffic. At scale, it penalizes the behavior you want to encourage: more experiments, more feature flags, more coverage.

Teams using Statsig often end up managing their experimentation volume to manage their bill — sampling down traffic, avoiding flagging minor changes, skipping experiments on low-stakes features. That's the opposite of a healthy experimentation culture.

GrowthBook uses per-seat pricing. A team that runs 10 experiments a month pays the same as one running 100. Feature flag evaluations don't generate a cost event. GrowthBook's experimentation ROI calculator can model your specific usage to estimate expected savings.

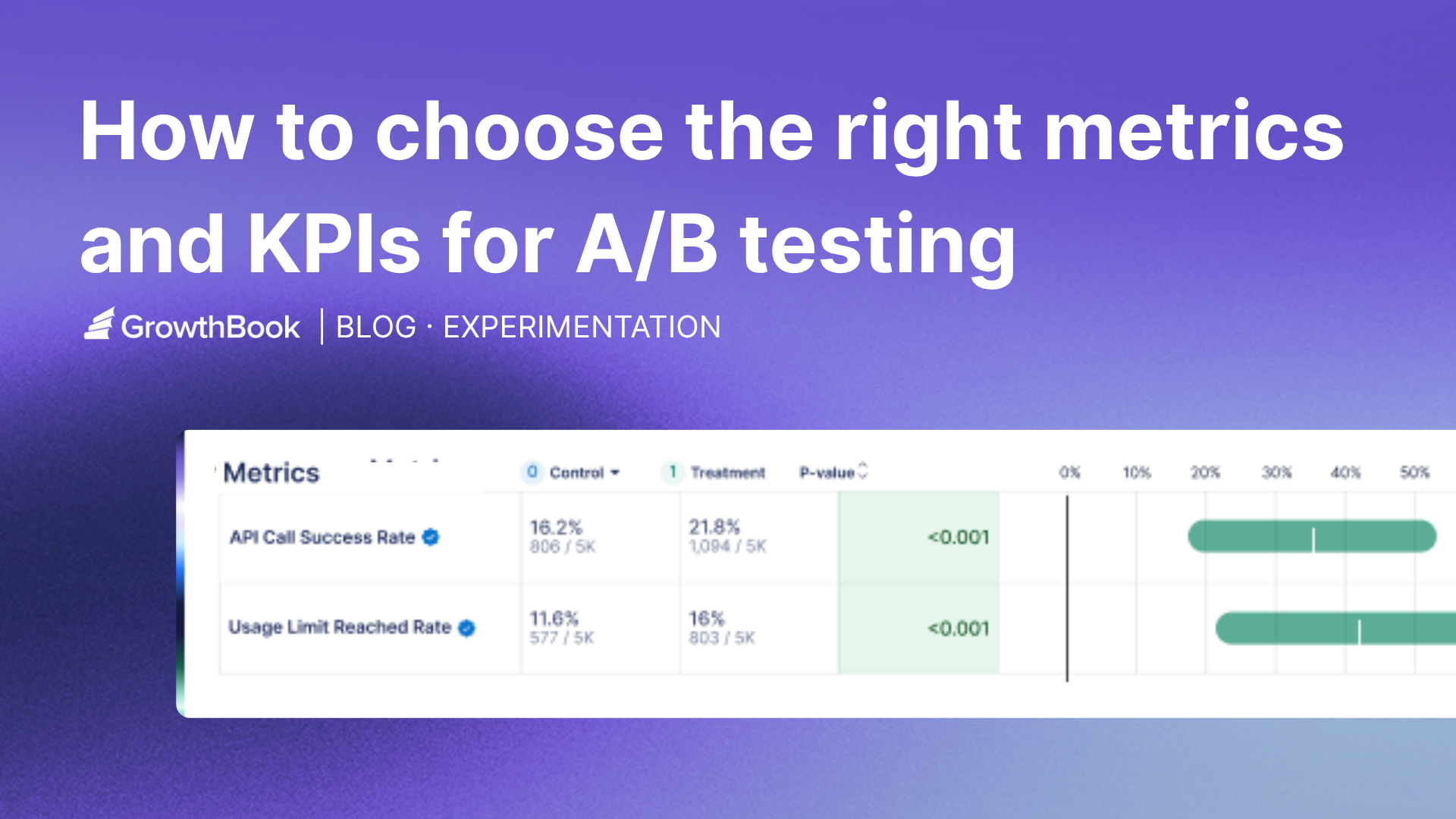

3. Limited visibility into underlying results

Statsig's statistics engine is proprietary. You can see the outputs, but not the logic that produced them. When a result is surprising, your options for investigation are limited to the interfaces Statsig exposes.

GrowthBook's engine is fully open source on GitHub (7,000+ stars). Every calculation is inspectable. Every query is visible in your warehouse. If a result looks off, you can drill into the underlying SQL, check the raw data, and confirm or refute the calculation on your own terms.

Diego Accame, Director of Engineering at Upstart, put it this way: "Our strength is as an AI-powered lending marketplace, not an experimentation framework company. GrowthBook lets us focus our resources where they matter most — on growing our core business."

That ability to focus comes partly from owning the infrastructure and partly from being able to verify what the infrastructure is doing.

4. Limited statistical depth

GrowthBook supports Bayesian, frequentist, and sequential testing, with CUPED variance reduction and post-stratification. Statsig supports a similar range of methods but lacks post-stratification, and its calculations cannot be inspected or reproduced.

For data science teams that care about methodology — particularly at companies where an experiment result drives a significant product or business decision — the ability to validate the math is a meaningful advantage.
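As an example of the kind of math you can check yourself, here is the textbook form of CUPED: adjust each user's in-experiment metric Y with a pre-experiment covariate X (often the same metric measured before exposure), using θ = cov(X, Y) / var(X) and Y_adj = Y − θ(X − mean(X)). This is a minimal sketch of the general technique, not GrowthBook's implementation, which may differ in details such as how θ is pooled across variations.

```typescript
function mean(xs: number[]): number {
  return xs.reduce((a, b) => a + b, 0) / xs.length;
}

// Sample variance (n - 1 denominator).
function variance(xs: number[]): number {
  const m = mean(xs);
  return xs.reduce((acc, x) => acc + (x - m) ** 2, 0) / (xs.length - 1);
}

// Sample covariance (n - 1 denominator).
function covariance(xs: number[], ys: number[]): number {
  const mx = mean(xs);
  const my = mean(ys);
  let acc = 0;
  for (let i = 0; i < xs.length; i++) acc += (xs[i] - mx) * (ys[i] - my);
  return acc / (xs.length - 1);
}

// CUPED adjustment: removes the part of Y explained by the pre-period covariate X.
// The adjusted values keep the same mean but (when X and Y correlate) lower variance.
function cupedAdjust(pre: number[], post: number[]): number[] {
  if (pre.length !== post.length) throw new Error("pre/post length mismatch");
  const theta = covariance(pre, post) / variance(pre);
  const preMean = mean(pre);
  return post.map((y, i) => y - theta * (pre[i] - preMean));
}

const pre = [1, 2, 3, 4, 5];    // pre-experiment metric per user
const post = [3, 4, 8, 9, 11];  // in-experiment metric per user
const adjusted = cupedAdjust(pre, post);
console.log(variance(post), variance(adjusted));
```

Lower variance with an unchanged mean is what lets a CUPED-adjusted experiment reach a confident result with less traffic.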

What the Statsig migration kit covers

GrowthBook ships a migration kit specifically for Statsig customers, including an AI-powered assistant that can transform your existing codebase. Here's what migrates:

Projects, teams, and tags carry over cleanly, preserving your workspace organization so teams can keep working without rebuilding their context.

Feature gates from Statsig map to GrowthBook feature flags, which support multiple environments, targeting rules, gradual rollouts, and instant kill switches.
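As a rough illustration of how a gate maps onto a flag, the sketch below converts a simplified gate into a simplified flag with per-environment state (usable as a kill switch), targeting conditions, and a rollout fraction. Both shapes (`SimpleGate`, `SimpleFlag`) and `gateToFlag` are hypothetical and much simpler than either product's real formats; the condition object is only loosely modeled on GrowthBook's MongoDB-style `$in` syntax. The migration kit performs the real mapping.

```typescript
// Hypothetical, simplified shapes; not Statsig's or GrowthBook's real formats.
interface GateRule {
  attribute: string;      // e.g. "country"
  oneOf: string[];        // rule matches if the user's value is in this list
  passPercentage: number; // 0-100, share of matching users who pass
}

interface SimpleGate {
  name: string;
  enabled: boolean;
  rules: GateRule[];
}

interface FlagRule {
  condition: Record<string, { $in: string[] }>; // targeting condition
  value: boolean;                               // value served when the rule applies
  coverage: number;                             // 0-1, gradual rollout fraction
}

interface SimpleFlag {
  key: string;
  defaultValue: boolean;
  // Per-environment state; disabling an environment acts as a kill switch there.
  environments: Record<string, { enabled: boolean; rules: FlagRule[] }>;
}

function gateToFlag(gate: SimpleGate, envNames: string[]): SimpleFlag {
  const rules: FlagRule[] = gate.rules.map((r) => ({
    condition: { [r.attribute]: { $in: [...r.oneOf] } },
    value: true,
    coverage: r.passPercentage / 100, // percentage becomes a 0-1 fraction
  }));
  const environments: SimpleFlag["environments"] = {};
  for (const env of envNames) {
    // Give each environment its own array of rule objects.
    environments[env] = { enabled: gate.enabled, rules: rules.map((r) => ({ ...r })) };
  }
  return { key: gate.name, defaultValue: false, environments };
}

const flag = gateToFlag(
  { name: "new_checkout", enabled: true, rules: [{ attribute: "country", oneOf: ["US", "CA"], passPercentage: 25 }] },
  ["dev", "production"],
);
console.log(JSON.stringify(flag, null, 2));
```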

SDKs migrate automatically. The AI migration assistant points at your codebase and handles the transformation — feature gates, dynamic configs, and user attributes converted to GrowthBook equivalents. JavaScript, TypeScript, and React are supported today, with more coming.

This is the step that usually takes weeks; the assistant reduces it to minutes.

Experiments transfer, including past experiments run on Statsig. You can generate custom reports from past Statsig experiments in GrowthBook, which preserves institutional knowledge.

Targeting rules transfer with full visibility into conditions and rollouts. GrowthBook includes debugging tools that simulate flag values for specific audiences, making it straightforward to verify that migration behavior matches pre-migration behavior.
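One way to gain confidence before cutting over is to evaluate the old and new definitions side by side for a sample of user attribute sets and list every disagreement. The sketch below assumes you can express both the pre-migration and post-migration logic as plain functions; `findMismatches` and the example rules are hypothetical, while GrowthBook's own debugging tools do this kind of simulation interactively.

```typescript
type Attributes = Record<string, string | number | boolean>;
type Evaluator = (attrs: Attributes) => boolean;

interface Mismatch {
  attrs: Attributes;
  before: boolean;
  after: boolean;
}

// Evaluate both definitions for every sample user and collect disagreements.
function findMismatches(users: Attributes[], before: Evaluator, after: Evaluator): Mismatch[] {
  const mismatches: Mismatch[] = [];
  for (const attrs of users) {
    const b = before(attrs);
    const a = after(attrs);
    if (a !== b) mismatches.push({ attrs, before: b, after: a });
  }
  return mismatches;
}

// Example: the old gate targeted US and CA; the migrated rule accidentally dropped CA.
const oldGate: Evaluator = (u) => u.country === "US" || u.country === "CA";
const newFlag: Evaluator = (u) => u.country === "US";

const sampleUsers: Attributes[] = [
  { id: "1", country: "US" },
  { id: "2", country: "CA" },
  { id: "3", country: "DE" },
];

const diffs = findMismatches(sampleUsers, oldGate, newFlag);
console.log(diffs.map((d) => d.attrs.id)); // only user "2" (CA) disagrees
```

An empty mismatch list over a representative sample is a reasonable bar before switching traffic to the new flag.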

Safe rollouts remain a first-class concept. GrowthBook supports gradual exposure with automatic monitoring of guardrail metrics, so regressions trigger alerts before they reach your full user base.
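To illustrate the idea behind guardrail monitoring, the sketch below compares a guardrail metric (here, the share of users hitting an error) between control and the rollout group with a one-sided two-proportion z-test, and raises an alert when the rollout is significantly worse. This is a simplified, single-look check under assumed inputs; `checkGuardrail` is a hypothetical name, and GrowthBook's actual safe-rollout statistics may differ. Running a fixed-horizon test like this over and over on live data inflates false alarms, which is the problem sequential methods are designed to handle.

```typescript
interface GuardrailCounts {
  users: number;    // users exposed in this group
  failures: number; // users who hit the guardrail event (e.g. an error)
}

// Standard normal CDF using the Abramowitz and Stegun 7.1.26 approximation
// of erf (absolute error below about 1.5e-7).
function normalCdf(z: number): number {
  const x = Math.abs(z) / Math.SQRT2;
  const t = 1 / (1 + 0.3275911 * x);
  const poly =
    t * (0.254829592 + t * (-0.284496736 + t * (1.421413741 + t * (-1.453152027 + t * 1.061405429))));
  const erf = 1 - poly * Math.exp(-x * x);
  return z >= 0 ? 0.5 * (1 + erf) : 0.5 * (1 - erf);
}

// One-sided two-proportion z-test: is the rollout group's failure rate
// significantly higher than control's? Fixed horizon, single look.
function checkGuardrail(
  control: GuardrailCounts,
  rollout: GuardrailCounts,
  alpha = 0.05,
): { z: number; pValue: number; alert: boolean } {
  const pControl = control.failures / control.users;
  const pRollout = rollout.failures / rollout.users;
  const pooled = (control.failures + rollout.failures) / (control.users + rollout.users);
  const se = Math.sqrt(pooled * (1 - pooled) * (1 / control.users + 1 / rollout.users));
  const z = se === 0 ? 0 : (pRollout - pControl) / se;
  const pValue = 1 - normalCdf(z);
  return { z, pValue, alert: pValue < alpha };
}

// Example: error rate rises from 1.0% to 1.5% with 10,000 users per group.
const result = checkGuardrail({ users: 10000, failures: 100 }, { users: 10000, failures: 150 });
console.log(result.alert, result.z.toFixed(2));
```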

Your choice of deployment target doesn't change the SDK work. Whether you move from Statsig to GrowthBook Cloud or to self-hosted GrowthBook, the same migrated SDK code, feature flag configuration, and experiment setup apply, and switching between Cloud and self-hosted later requires no further SDK changes or redeployment of your application code.

The full GrowthBook platform you're migrating to

Migration is the starting line, not the finish line. Here's what GrowthBook offers beyond Statsig feature parity.

Feature flagging that doesn't cost per evaluation

GrowthBook's SDKs evaluate feature flags without a network call per evaluation. The SDK downloads a payload at startup and evaluates flags locally, so each evaluation adds sub-millisecond latency and generates no billable event. You can flag every feature in your product, including low-traffic, experimental, and internal-use features, without worrying about cost.
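Local evaluation works because assignment is deterministic: hashing a seed (such as the flag key) together with the user ID gives every user a stable position in [0, 1), so any process holding the same payload computes the same answer with no server round trip. The sketch below uses FNV-1a with a double-hash step, similar in spirit to the bucketing GrowthBook describes for its SDKs but not guaranteed to reproduce its exact assignments. The payload shape, `Rule`, `Flag`, and `isOn` here are hypothetical simplifications, not the SDK's API.

```typescript
// FNV-1a 32-bit hash over UTF-16 code units (matches byte-wise FNV-1a for ASCII input).
function fnv1a32(input: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h ^= input.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

// Deterministically map a user into [0, 1); the seed decorrelates buckets across flags.
function bucket(seed: string, userId: string): number {
  return (fnv1a32(String(fnv1a32(seed + userId))) % 10000) / 10000;
}

interface Rule {
  attribute: string; // targeting attribute, e.g. "country"
  equals: string;    // required attribute value
  coverage: number;  // 0-1, fraction of matching users who get `value`
  value: boolean;
}

interface Flag {
  key: string;
  enabled: boolean; // false acts as a kill switch
  defaultValue: boolean;
  rules: Rule[];
}

// Hypothetical payload shape, downloaded once and then evaluated in memory.
type Payload = Record<string, Flag>;

interface User {
  id: string;
  [attribute: string]: string;
}

function isOn(payload: Payload, key: string, user: User): boolean {
  const flag = payload[key];
  if (!flag || !flag.enabled) return false; // unknown flag or kill switch
  for (const rule of flag.rules) {
    if (user[rule.attribute] !== rule.equals) continue;       // targeting condition
    if (bucket(flag.key, user.id) >= rule.coverage) continue; // outside the rollout
    return rule.value;
  }
  return flag.defaultValue;
}

const payload: Payload = {
  "new-checkout": {
    key: "new-checkout",
    enabled: true,
    defaultValue: false,
    rules: [{ attribute: "country", equals: "US", coverage: 0.5, value: true }],
  },
};
console.log(isOn(payload, "new-checkout", { id: "user-123", country: "US" }));
```

Because nothing in `isOn` touches the network, evaluation cost is a hash and a few comparisons, which is why per-evaluation billing doesn't fit this design.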

GrowthBook supports 24+ SDKs: JavaScript, React, React Native, Node.js, Python, Ruby, Go, PHP, Java, Kotlin, Swift, and more. The Chrome debugger lets you inspect flag state and experiment assignment in real time without touching application code.

Experimentation with SQL you write and own

GrowthBook's metric system is SQL-first. You write metrics using your warehouse's SQL dialect, join against any tables in your schema, and apply whatever business logic your team uses. A metric for revenue per activated user might join your experiment assignment table to your payments table to your activation events — all using the same logic your data team uses everywhere else.

Forgot to add a metric before an experiment started? Add it retroactively. The data is already in your warehouse. Just define the metric and run the analysis against the historical assignment data.
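The revenue-per-activated-user example can be sketched as the same join logic applied to in-memory rows. The table shapes and the attribution rule (count only payments at or after a user's first exposure) are hypothetical; in GrowthBook you would write this as SQL in your warehouse's dialect, so treat this as an illustration of the metric's logic rather than of GrowthBook's metric definitions. Because it only reads rows that already exist, the same logic can run after the fact against historical assignments.

```typescript
// Hypothetical stand-ins for warehouse tables.
interface AssignmentRow { userId: string; variation: string; assignedAt: number; }
interface ActivationRow { userId: string; activatedAt: number; }
interface PaymentRow { userId: string; amount: number; paidAt: number; }

// Revenue per activated user, by variation. Assumed attribution rule: only
// payments made at or after the user's first exposure count.
function revenuePerActivatedUser(
  assignments: AssignmentRow[],
  activations: ActivationRow[],
  payments: PaymentRow[],
): Map<string, number> {
  const activated = new Set(activations.map((a) => a.userId));

  // Keep each user's earliest assignment (first exposure).
  const exposure = new Map<string, { variation: string; at: number }>();
  for (const a of assignments) {
    const prev = exposure.get(a.userId);
    if (!prev || a.assignedAt < prev.at) {
      exposure.set(a.userId, { variation: a.variation, at: a.assignedAt });
    }
  }

  // Denominator: activated users per variation.
  const activatedUsers = new Map<string, number>();
  exposure.forEach((exp, userId) => {
    if (!activated.has(userId)) return;
    activatedUsers.set(exp.variation, (activatedUsers.get(exp.variation) ?? 0) + 1);
  });

  // Numerator: post-exposure revenue from activated users.
  const revenue = new Map<string, number>();
  for (const p of payments) {
    const exp = exposure.get(p.userId);
    if (!exp || !activated.has(p.userId) || p.paidAt < exp.at) continue;
    revenue.set(exp.variation, (revenue.get(exp.variation) ?? 0) + p.amount);
  }

  const result = new Map<string, number>();
  activatedUsers.forEach((n, variation) => {
    result.set(variation, (revenue.get(variation) ?? 0) / n);
  });
  return result;
}

const metric = revenuePerActivatedUser(
  [
    { userId: "u1", variation: "control", assignedAt: 0 },
    { userId: "u2", variation: "control", assignedAt: 0 },
    { userId: "u3", variation: "treatment", assignedAt: 0 },
    { userId: "u4", variation: "treatment", assignedAt: 0 },
  ],
  [
    { userId: "u1", activatedAt: 1 },
    { userId: "u2", activatedAt: 1 },
    { userId: "u3", activatedAt: 1 },
  ],
  [
    { userId: "u1", amount: 30, paidAt: 5 },
    { userId: "u2", amount: 10, paidAt: 5 },
    { userId: "u2", amount: 100, paidAt: -1 }, // before exposure: excluded
    { userId: "u3", amount: 50, paidAt: 5 },
    { userId: "u4", amount: 999, paidAt: 5 },  // never activated: excluded
  ],
);
console.log(metric);
```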

Metrics can be standardized in a library, enabling every team to measure success consistently. They can be scoped to specific experiments or applied globally as guardrails.

Deployment on your terms

GrowthBook Cloud runs on AWS with automatic updates, encrypted data at rest and in transit, 99.99% uptime SLA at Enterprise tier, and SOC 2 Type II, ISO 27001, GDPR, COPPA, and CCPA compliance.

GrowthBook Self-Hosted runs on your infrastructure: any major cloud provider or on-premises, deployed with Kubernetes or any other container platform. Same codebase. Same features. Same development roadmap. The only difference is who manages the infrastructure.

Many teams start on GrowthBook Cloud for the fastest path to running experiments, then migrate to self-hosted when compliance requirements or internal policy require it. GrowthBook's SDK and configuration structure don't change in that migration, so the transition preserves everything you've built.

If you don't have a data warehouse yet, GrowthBook's Managed Warehouse gives you a fully functional environment immediately, with the option to migrate to your own warehouse at any time.

AI-ready experimentation

Three of the five leading AI infrastructure companies use GrowthBook to test and optimize their products. The platform handles the non-deterministic, high-variance nature of AI feature testing well.

- Sequential testing reduces false positives

- CUPED variance reduction accelerates decision-making

- Fully custom SQL metrics capture what matters for AI outputs (task completion, output acceptance, engagement depth) rather than just clicks

GrowthBook's MCP server connects to Cursor, VS Code, Claude Code, and any other MCP-compatible client. Create feature flags and experiments in natural language, query past results, and build agents with your experimentation data as context, all without leaving your editor.

Getting started for free

GrowthBook's offer for current Statsig customers: use GrowthBook for free through your current renewal date, up to one year (up to $100,000 value). The migration kit, including the AI-powered SDK migration assistant, is available immediately.

The practical starting path for most teams:

- Connect GrowthBook to your data warehouse. Pre-built SQL templates get you to first results without custom data engineering. Customize from there.

- Run the AI migration assistant against your codebase. It transforms Statsig feature gates to GrowthBook equivalents and generates a diff for your team to review.

- Import your Statsig experiments. Historical results carry over so you don't lose the record of what you've learned.

- Start your first GrowthBook experiment. The Chrome debugger and visual editor make the first experiment accessible to non-engineers.

The decision to switch to GrowthBook

If your team depends on Statsig and the OpenAI acquisition raises questions you can't yet get answered (about data governance, roadmap continuity, or long-term pricing), then GrowthBook is the switch that costs the least to evaluate and offers the most structural independence.

Open source lets you inspect and audit what you're running. Warehouse-native architecture gives you data ownership that doesn't depend on a vendor relationship. Per-seat pricing gives you the freedom to run more experiments without watching a meter.

The migration kit makes the practical barriers manageable. The question is whether the reasons to switch outweigh the friction of switching. For most Statsig customers evaluating the post-acquisition landscape, that math is becoming clearer.

Ready to get started?

Read the GrowthBook vs. Statsig comparison →
