Ship AI software with confidence

GrowthBook's high-velocity experimentation, feature flag controls, and product analytics help development teams get AI innovations to market faster.

Trusted by 3,000+ companies worldwide

Ready to scale and customize for AI

Trusted by leading AI companies for modern product development, GrowthBook helps teams iterate with confidence and control AI rollouts.

Reduce risk with control and kill switches

Automatically wrap any block of code with a GrowthBook feature flag and you have instant kill switches, progressive rollouts, and targeted releases. Roll out to 1% of users, monitor metrics, and scale up with confidence.
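As a rough illustration of how a percentage rollout with a kill switch can work, here is a minimal Python sketch. It is not GrowthBook's SDK or its actual hashing scheme. The function names (`bucket`, `is_enabled`), the flag key, and the use of SHA-256 are assumptions made for this example. The idea is that each user hashes to a stable position between 0 and 1, so raising the rollout percentage only adds users and never reshuffles the ones already included.

```python
import hashlib


def bucket(user_id: str, flag_key: str) -> float:
    """Map a (flag, user) pair to a stable value in [0, 1).

    Hypothetical helper: the first 4 bytes of a SHA-256 digest are
    read as an unsigned integer and scaled by 2**32.
    """
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") / 2**32


def is_enabled(user_id: str, flag_key: str, rollout: float,
               kill_switch: bool = False) -> bool:
    """Return True if the user falls inside the rollout percentage.

    rollout is a fraction between 0.0 and 1.0. The kill switch
    overrides everything and turns the feature off for all users.
    """
    if kill_switch:
        return False
    return bucket(user_id, flag_key) < rollout


# Usage: guard the risky code path behind the flag check.
if is_enabled("user-123", "new-ai-summary", rollout=0.01):
    pass  # call the new AI-backed code path here
else:
    pass  # fall back to the existing behavior
```

Because the bucket depends only on the flag key and the user ID, a user who sees the feature at 1% still sees it at 10% or 50%, which keeps the experience consistent while the rollout scales up.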

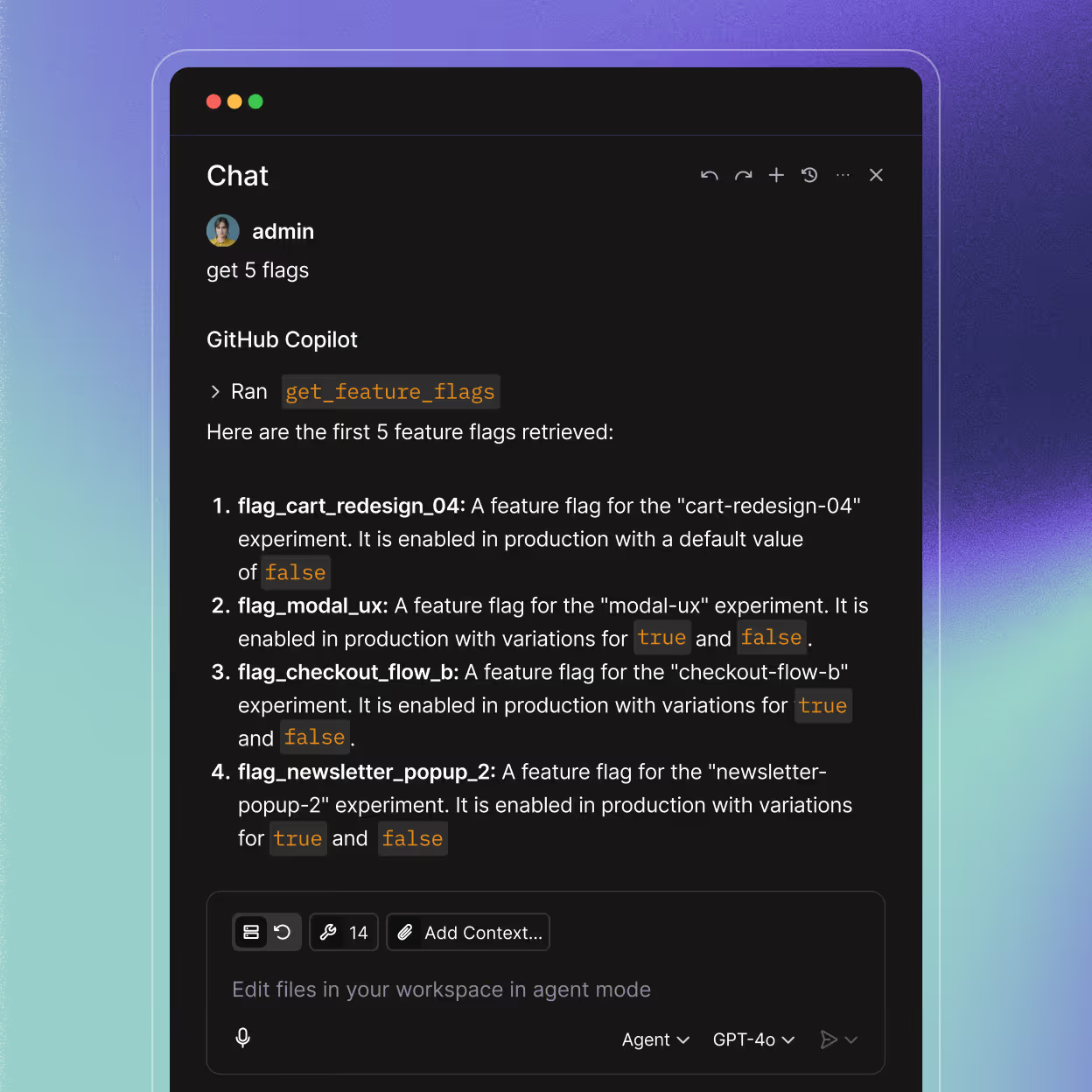

Evaluate AI models, agents, and prompts

Run controlled experiments with GrowthBook to iterate quickly on prompts and agents. Optimize for performance, latency, cost, and user satisfaction based on real user outcomes. Use the metrics that matter most to your product.
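To make the idea of a controlled model or prompt experiment concrete, here is a small Python sketch under stated assumptions. It does not use GrowthBook's SDK. The variant names, the event fields (`latency_ms`, `cost_usd`, `accepted`), and both function names are hypothetical. Each user is deterministically assigned to one variant, and logged outcomes are then averaged per variant.

```python
import hashlib
from collections import defaultdict
from statistics import fmean


def assign_variant(user_id: str, experiment_key: str, variants: list) -> str:
    """Pick one variant per user, stable across calls (equal weights)."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).digest()
    index = int.from_bytes(digest[:4], "big") % len(variants)
    return variants[index]


def summarize(events: list) -> dict:
    """Average latency, cost, and acceptance rate per variant.

    Each event is a dict with the hypothetical keys
    'variant', 'latency_ms', 'cost_usd', and 'accepted'.
    """
    grouped = defaultdict(list)
    for event in events:
        grouped[event["variant"]].append(event)
    return {
        variant: {
            "n": len(rows),
            "mean_latency_ms": fmean(r["latency_ms"] for r in rows),
            "mean_cost_usd": fmean(r["cost_usd"] for r in rows),
            "acceptance_rate": fmean(1.0 if r["accepted"] else 0.0 for r in rows),
        }
        for variant, rows in grouped.items()
    }


# Usage: route the request to whichever variant the user is assigned to.
variant = assign_variant("user-123", "summary-prompt-test", ["prompt_a", "prompt_b"])
```

Raw averages like these are only a starting point. Deciding whether one variant is actually better requires a statistical comparison, which is what an experimentation platform provides.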

Measure everything over time

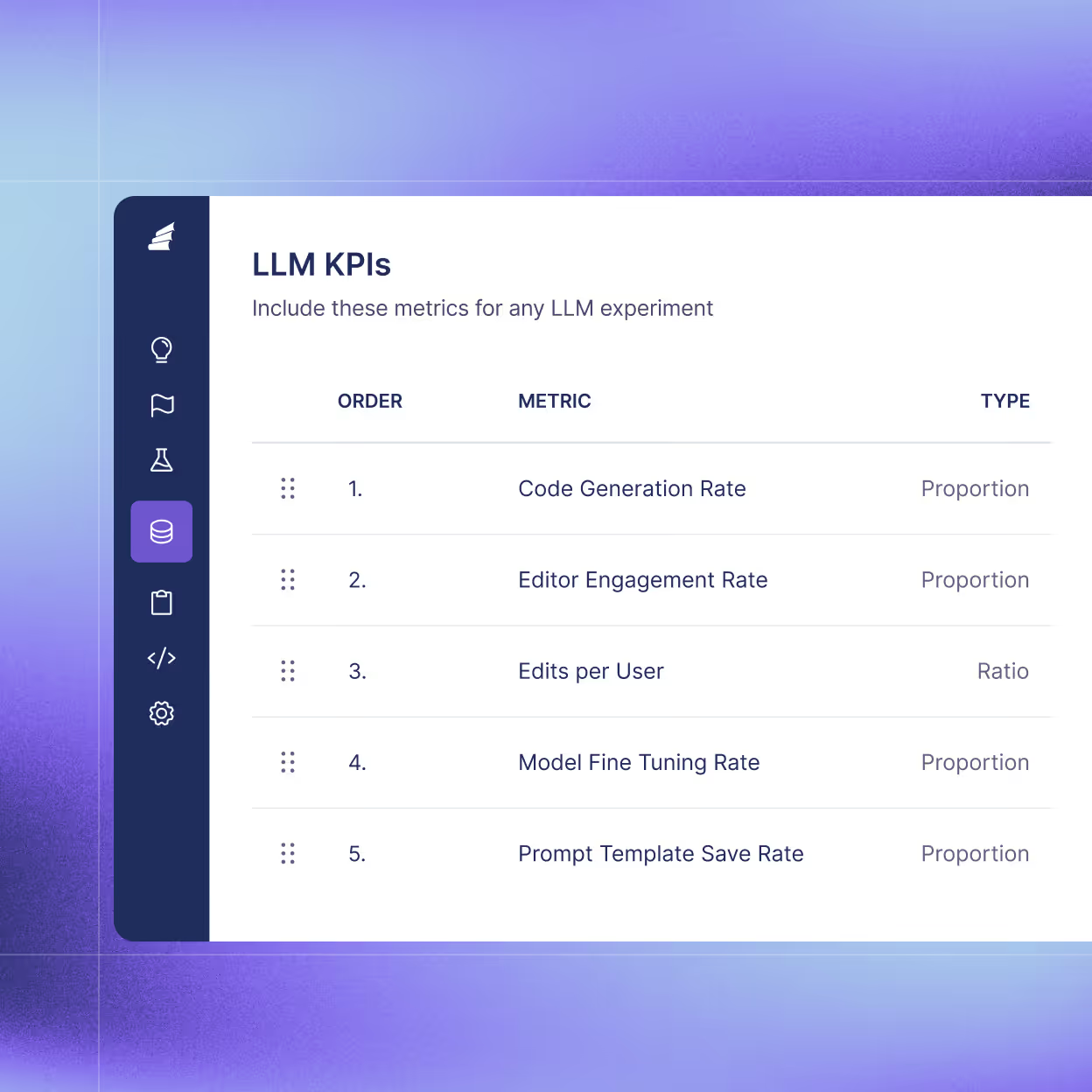

Only 20% of product changes have a positive impact on core business metrics. Small changes can have an outsized impact. Optimize the user experience and revenue, using success rate, engagement, repeat usage, or custom metrics. Experiment on everything and track cumulative impact with insights.

Get started in minutes

Get up and running in minutes with feature flags, A/B testing, and product analytics. Start using GrowthBook for free with a managed warehouse. No pipelines required, no infrastructure decisions. See the SQL behind any calculation and verify every result.

Enterprise-grade security and controls

GrowthBook fits your risk profile and compliance requirements without slowing you down or complicating your setup.

Create a culture of experimentation

For Dev Teams

Ship faster with confidence and roll back instantly.

For Data Teams

Powerful stats, full control, trusted results.

For Product Teams

Run 5x more experiments, prove impact faster.

GrowthBook open-source platform

GrowthBook’s modular design works on top of what you have, or replaces what’s not working.

FAQs

AI features are harder to release safely than traditional features because their outputs are non-deterministic. The same prompt can produce very different results across user types, edge cases, and traffic volumes, and you can't fully validate that in staging. The safest approach is to treat your release as an ongoing experiment, not a single deployment event. Use feature flags so you can roll back if needed.

AI outputs don’t produce clean success/fail signals. “Did the AI response help the user?” can’t be measured like a button click. Start by defining metrics based on behavior: task completion, output acceptance, engagement depth. Metrics for AI output quality are noisy, with high variance across users, sessions, and input types. Sequential testing (checking results as they come in without inflating the false-positive rate) and CUPED variance reduction (using pre-experiment data to reduce noise) can help you make decisions more quickly.
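For readers who want to see what CUPED does to the numbers, here is a minimal Python sketch of the standard adjustment. It is not GrowthBook's implementation, and the function name `cuped_adjust` is an assumption for this example. Each user's metric is shifted by how far their pre-experiment value sits from the average, scaled by theta = cov(X, Y) / var(X). The mean is unchanged, while the variance shrinks when the pre-experiment data correlates with the metric.

```python
from statistics import fmean, pvariance


def cuped_adjust(metric: list, covariate: list) -> list:
    """Return CUPED-adjusted metric values.

    metric:    per-user values measured during the experiment (Y)
    covariate: the same users' pre-experiment values (X)
    adjusted:  Y - theta * (X - mean(X)), theta = cov(X, Y) / var(X)
    """
    if len(metric) != len(covariate):
        raise ValueError("metric and covariate must have the same length")
    x_bar = fmean(covariate)
    y_bar = fmean(metric)
    var_x = sum((x - x_bar) ** 2 for x in covariate)
    if var_x == 0:
        # A constant covariate carries no information; leave values as-is.
        return list(metric)
    cov_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(covariate, metric))
    theta = cov_xy / var_x
    return [y - theta * (x - x_bar) for x, y in zip(covariate, metric)]


# Usage with made-up numbers: pre-experiment and in-experiment engagement.
pre = [1.0, 2.0, 3.0, 4.0, 5.0]
post = [2.1, 3.9, 6.2, 7.8, 10.0]
adjusted = cuped_adjust(post, pre)
variance_before = pvariance(post)
variance_after = pvariance(adjusted)  # smaller when pre and post correlate
```

In a real experiment, theta is typically estimated once on data pooled across all variants and then applied to each variant, so that the adjustment does not bias the comparison between them.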

Yes. Run controlled experiments comparing GPT, Claude, Gemini, or other models. Measure user satisfaction, latency, cost, and any custom metrics that matter to your product.

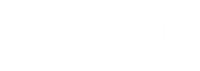

Yes. The GrowthBook MCP server connects to Cursor, VS Code, Claude, or any MCP-compatible tool. Create feature flags and experiments in natural language, query past results, and build agents with your experimentation data as context. All without leaving your editor.

Yes. Three of the five leading AI infrastructure companies use GrowthBook for experimentation. Khan Academy, Upstart, and other AI-forward companies use GrowthBook for model comparison and feature experimentation. GrowthBook is an open-source platform with 7,000+ GitHub stars and is SOC 2 certified.

Ready to ship faster?

No credit card required. Start with feature flags, experimentation, and product analytics—free.