Chasing Velocity in A/B Testing: Why More Experiments Can Mean Less Learning

TL;DR: Experiment velocity is a useful diagnostic, not a goal. The moment you make it a KPI, teams start optimizing for the count instead of the learning, and the program quietly drifts toward trivial tests that answer trivial questions. The healthier target is the rate at which you learn, which usually requires a portfolio of easy, medium, and hard experiments.

There is a particular kind of dysfunction that only shows up in experimentation programs that are working well. The team has the tooling. The event and data pipelines are working, and the metrics and results are trustworthy. Experiments ship every week. By every visible measure, the program is mature. But the experiments themselves keep getting smaller, the hypotheses keep getting safer, and the insights keep getting shallower. The program is producing more experiments than ever and somehow learning less. This is what happens when velocity stops being a diagnostic and starts being the goal.

It is a quiet failure mode, which is part of why it persists. Nothing breaks. No one misses a deadline, and the dashboards still tell a flattering story. The shift from "what is the most valuable thing to learn?" to "what is the fastest thing we can test?" happens one prioritization meeting at a time, and by the time anyone notices, it has become the way the team works. The trap is not that velocity is a bad thing to care about. It is that velocity, treated as the main metric, will reliably get you a program that runs more experiments and understands its users less.

Why experiment velocity became the main metric

Experimentation maturity is usually described in terms of throughput. The popular crawl, walk, run, fly framework puts a handful of experiments at one end and thousands at the other, with every feature change running as an A/B test at the top tier. It is easy to extrapolate: if the most advanced teams run the most experiments, then running more experiments must be how you become advanced.

Teams that hit the “fly” level have done a lot right. They launch features behind a feature flag. They have the data piped so the marginal cost of an additional experiment is close to zero. They trust their experimentation platform and know how to interpret results. Culturally, they are more comfortable learning from users than arguing in planning meetings. Throughput matters.

If your team can only launch one experiment a quarter, you have a velocity problem. But it does not follow that maximizing the number of experiments is the right objective forever. That is where teams get into trouble.

The problem with velocity as a KPI

Velocity is a proxy for the real goal, which is learning what your users want from your product. A team running a lot of experiments looks like a team asking lots of questions, challenging assumptions, and replacing opinion with evidence. More tests, more learning. The logic is not wrong. It is just incomplete.

The moment a proxy becomes a target, people optimize for the measurement instead of the thing the measurement was supposed to represent. This is Goodhart’s law. When a measure becomes a target, it stops being a good measure.

Experiment velocity is a textbook example. If leaders say “we want to learn faster,” teams build better systems, reduce friction, and ask sharper questions. If leaders say “we need to run more experiments this quarter,” the behavior changes. Teams look for the fastest path to a count, not the fastest path to insight.

That makes the metric easy to game. A team can inflate velocity by prioritizing simple tests over difficult, high-value ones, or by launching half-formed tests just to show they are testing. From the dashboard, this looks like progress. In reality, the organization may be learning less.

The irony is that this produces the opposite of what leaders wanted. The original goal was faster learning and a better-performing product. Setting velocity as the headline KPI lowers the quality of that learning. Teams become more active but less ambitious. They generate fewer durable insights. The program optimizes for motion rather than understanding.

The hidden cost is shallower learning

Trivial experiments answer trivial questions. A button-color test tells you which variant got more clicks. It rarely tells you anything about your users, their motivations, or the constraints in your product.

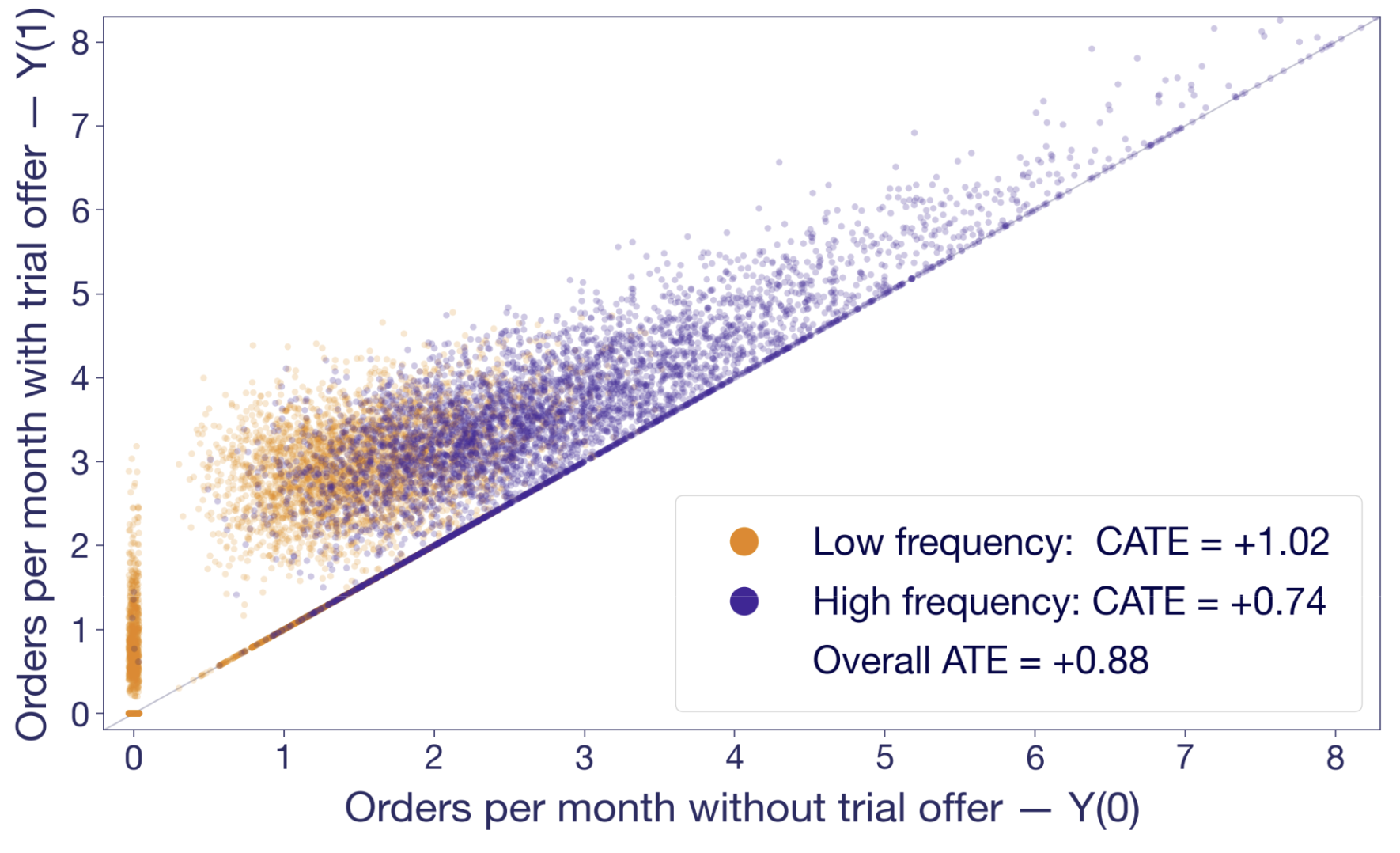

A harder experiment often teaches something more generalizable. Suppose you move the paywall later in onboarding, and conversion to paid goes up. That result is not just about one UI sequence. It challenges an institutional belief about when users are ready to purchase. It suggests users need more confidence before being asked to commit. It can influence pricing, packaging, and other related projects.

That is a qualitatively different kind of learning. The best experimentation programs do not just produce winners. They produce insight. They learn from experiments that fail and use those learnings to drive future iterations. They help an organization understand which assumptions were wrong. Chasing easy velocity sacrifices that deeper layer.

Think like a portfolio manager

The fix is not to reject velocity. It is to stop treating velocity as the only mark of success. A healthy experimentation program runs a portfolio.

Some experiments should be fast and cheap. These iterative tests improve local conversion points, clarify messaging, and tune workflows. They keep momentum high and help teams build instincts.

Some should be medium-scope bets: a bigger design change, a more consequential workflow adjustment, or a new targeting rule.

Some should be hard. These force you to instrument something new, redesign a key system, or challenge an assumption that has been sitting untouched for years.

If your portfolio only contains the first category, you are under-reaching. If it only contains the third, you are overloading the system. The right balance depends on team maturity, traffic, engineering capacity, and risk tolerance. But balance is the point. Not every experiment should be bold. Not every experiment should be easy.
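One way to make the balance visible is to tag each experiment with an effort tier and look at the mix. The sketch below is illustrative only: the experiment names, the three tier labels, and the `portfolio_mix` helper are all assumptions, not part of any particular platform.

```python
from collections import Counter

# Hypothetical experiment log: (experiment name, effort tier).
# Both the names and the tier labels are made up for illustration.
experiments = [
    ("cta-copy", "easy"),
    ("button-color", "easy"),
    ("email-subject", "easy"),
    ("pricing-page-layout", "medium"),
    ("paywall-timing", "hard"),
]

TIERS = ("easy", "medium", "hard")


def portfolio_mix(log):
    """Return the share of experiments in each effort tier (0.0 to 1.0)."""
    counts = Counter(tier for _, tier in log)
    total = sum(counts[tier] for tier in TIERS)
    if total == 0:
        # An empty log has no mix to report.
        return {tier: 0.0 for tier in TIERS}
    return {tier: counts[tier] / total for tier in TIERS}


mix = portfolio_mix(experiments)
for tier in TIERS:
    print(f"{tier}: {mix[tier]:.0%}")
```

A mix that sits at or near 100% easy for several quarters is a hint that the program is under-reaching. What the right split looks like is a judgment call for each team.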

What to measure instead of raw velocity

If you want teams to behave differently, measure differently. Velocity should still be measured, since it is a useful operational signal, but it should be used alongside other, harder-to-quantify measures:

• How many strategically important surfaces are actually being tested?

• What percentage of experiments target known bottlenecks in activation, retention, or monetization?

• Did the experiment teach us something reusable, even if the variant did not win?

• Are hypotheses clear and well-structured? Are they trying to understand user behavior?

• Did the team define guardrails and power the test appropriately?

Experiment quality is not about launching tests. It is about designing them well enough that the result is trustworthy and useful.
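As a rough illustration of what "powering the test appropriately" involves, the sketch below estimates the sample size per variant for a conversion-rate test. It assumes a fixed-horizon, two-sided comparison of two proportions under the normal approximation. The function name, the default `alpha` and `power` values, and the example numbers are assumptions for illustration, and your experimentation platform may use a different statistical approach.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed per variant to detect a relative lift.

    baseline:      control conversion rate, e.g. 0.10 for 10%
    relative_lift: smallest lift worth detecting, e.g. 0.10 for +10%
    alpha:         two-sided false positive rate
    power:         probability of detecting the lift if it is real
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    # Sum of the Bernoulli variances of the two arms.
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical scenario: 10% baseline conversion, +10% relative lift.
n = sample_size_per_variant(baseline=0.10, relative_lift=0.10)
print(f"Users needed per variant: {n}")
```

Halving the detectable lift roughly quadruples the required sample. That is one reason low-traffic teams get more from a few well-chosen experiments than from many underpowered ones.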

Questions for experiment review

One practical fix is to change the review conversation. Instead of asking only “how many experiments did we run,” ask:

• What important question did this experiment answer?

• What assumption was being challenged?

• What did we learn that changes future roadmap decisions?

• What meaningful experiment are we currently avoiding because it is hard?

That last question is especially useful. Every mature product org has a backlog of experiments it is quietly avoiding. They are hard to instrument. They cross team boundaries. They touch pricing, relevance, or onboarding logic and feel risky. Those are often exactly the areas leaders should pay attention to.

Conclusion: Velocity matters, but it is not the goal

The mission is to increase the rate at which your organization learns important truths about users, products, and business tradeoffs.

Sometimes that means running more experiments because your current process is too slow. Sometimes it means resisting the urge to inflate the count and putting real effort into the experiments that are harder, riskier, and more consequential.

A mature experimentation culture does not ask only “how can we run more tests?” It asks, “how can we run more of the right tests?” That is a much better optimization target. If you are building toward that kind of program, GrowthBook gives you the feature flagging, metric definitions, and analysis layer to make harder experiments easier to run.
