The Uplift Blog

Guide to Multi-Arm Bandits: What they are, and when you should use them

Multi-Arm Bandit testing comes from the Multi-Arm Bandit problem in mathematics. The problem posed is as follows: given a limited amount of resources, what is the best way to maximize returns when gambling on one-armed bandit machines with different rates of return? This is often phrased as a choice between “exploitation,” or maximizing return, and “exploration,” or maximizing information. When applied to A/B testing it can be a valuable tool. In this article I’ll go into more detail about what multi-arm bandit testing is, how it compares to A/B testing, and some of the reasons you should, and should not, use it.

What is Multi-Arm Bandit testing?

Imagine you walk into a casino and you see three slot machines. In this hypothetical casino, there is no gaming control board to guarantee that each machine has the same return. In fact, you’re fairly certain that one of the machines has a higher rate of return than the others. How do you figure out which machine that is? One strategy is to try to figure out the return on each machine before spending the majority of your money. You could put the same amount of money into each machine and compare the returns. Once you’ve finished, you’ll have a good idea of which machine has the highest return, and you can spend the rest of your money (assuming you have any) on it. But while you were testing, you put a fair amount of money into machines that have a lower return rate. You have maximized certainty at the expense of maximizing revenue. This is analogous to A/B testing.

There is, however, a way to maximize revenue at the expense of certainty. Imagine you put a small amount of money in each machine and look at the return. In the next round, you put more money into the machines that returned the most and less money into the machines that returned less. Keep repeating and adjusting how much money you’re putting in each until you are fairly certain which machine has the highest return (or until you’re out of money). In this way, you’ve maximized revenue at the expense of statistical certainty for each machine. You have avoided spending too much money on machines that don’t have good returns.

This is the premise behind Multi-Arm Bandit (MAB) testing. Simply put, MAB is an experimental optimization technique in which traffic is continuously and dynamically allocated based on the degree to which each variation outperforms the others. The idea is to send the majority of traffic to variations that perform well, and less to those that perform poorly. What MAB aims for is that, unlike an A/B test, the total number of conversions during the course of the test is maximized. However, because the focus is on optimizing conversions as a whole (inclusive of all variations), you lose the specifics of each: poorly performing variations receive little traffic, so their individual estimates stay imprecise.
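To make the allocation idea concrete, here is a minimal sketch of one common bandit rule, Thompson sampling. This is an illustration under stated assumptions, not the algorithm any particular tool uses: the function name `run_bandit`, the three conversion rates, and the visitor count are all made up for the example.

```python
import random

def run_bandit(true_rates, visitors, seed=0):
    """Simulate Thompson sampling over variations with yes/no conversions."""
    rng = random.Random(seed)
    wins = [0] * len(true_rates)    # conversions seen per variation
    losses = [0] * len(true_rates)  # non-conversions seen per variation
    for _ in range(visitors):
        # Draw a plausible conversion rate for each variation from its
        # Beta posterior, then show the variation with the highest draw.
        samples = [rng.betavariate(1 + w, 1 + l) for w, l in zip(wins, losses)]
        arm = samples.index(max(samples))
        # Simulate whether this visitor converts (hypothetical true rates).
        if rng.random() < true_rates[arm]:
            wins[arm] += 1
        else:
            losses[arm] += 1
    traffic = [w + l for w, l in zip(wins, losses)]
    return traffic, wins

# Three hypothetical variations; the third has the best true rate.
traffic, wins = run_bandit([0.02, 0.05, 0.10], visitors=20000)
print("visitors per variation:", traffic)
print("conversions per variation:", wins)
```

In a run like this, most of the traffic ends up on the best variation, while the weaker variations see only a small number of visitors. That is exactly the trade-off described above: more conversions overall, but thin data on the losers.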

The main advantages are that you can avoid sending traffic to test cases that aren’t working, optimize page performance as you test, and introduce new test cases at any time. Sounds great, right? However, this kind of testing is not ideal for all situations. Let’s take a look at situations where MAB is appropriate, and where it isn’t.

Problems with Multi-Arm Bandit testing

Multiple metrics and unclear success metrics

If you are using multiple metrics for the success of your experiment, MAB is usually not the right test. If a variation has one metric that increases, and another that decreases, how do you know how much traffic to send to it? Even if you do have one main metric you’re using to determine success, you’ll want to also include your guardrail metrics (metrics that you include in all tests to make sure you’re not doing something bad, like cannibalizing traffic or increasing page load time, which might hurt SEO in the long term). Often, tests require some interpretation to determine which variation you want to go with. You might even decide you’re okay with one metric losing because of some longer-term gain in mind. With MAB, you’re handing over the decision-making to the algorithm.

Variable conversion rates

Multi-Arm Bandit testing assumes that conversion rates are constant over time. For example, if one cohort of your traffic converts better on the weekends, but not so well during the week, the variation that gets the most traffic will depend on when you start the test. This will either cause a false positive or a seesawing of the traffic to variations. You can avoid this by making sure your algorithm for allocating traffic takes these time variations into account — assuming you know what they are.

Conversion windows

Conversion windows pose another problem. The usual algorithm expects conversion events to happen fairly immediately after the user sees a variation; after all, it’s using these conversions to allocate traffic. If there is a delay between a user seeing a variation and the conversion event, it could cause the MAB algorithm to distribute traffic incorrectly.

Simpson’s Paradox

Because with MAB you’re not exactly sure why a variation is better than the others, and you don’t have detailed statistics on the performance of each variation, you open yourself up to Simpson’s Paradox. Simpson’s Paradox, as it applies to A/B testing, is an interesting one, and probably a topic for another article. Simply put, Simpson’s Paradox can result in one variation winning in aggregate, but actually losing when the traffic is segmented differently. Since you don’t have the detailed statistics to dig into, you may be more likely to fall into this situation.
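A small worked example may help here. The numbers below are invented purely for illustration: two variations, two segments, and an uneven traffic split of the kind a bandit can produce. Variation A converts better within each segment, yet B looks better in aggregate.

```python
# (visitors, conversions) per segment and variation. Made-up numbers.
data = {
    "desktop": {"A": (200, 40), "B": (800, 144)},  # A: 20%, B: 18%
    "mobile": {"A": (800, 40), "B": (200, 8)},     # A: 5%,  B: 4%
}

def rate(visitors, conversions):
    return conversions / visitors

# Winner inside each segment.
segment_winner = {
    segment: max(arms, key=lambda arm: rate(*arms[arm]))
    for segment, arms in data.items()
}

# Winner when the segments are lumped together.
totals = {}
for arms in data.values():
    for arm, (visitors, conversions) in arms.items():
        seen_visitors, seen_conversions = totals.get(arm, (0, 0))
        totals[arm] = (seen_visitors + visitors, seen_conversions + conversions)
overall_winner = max(totals, key=lambda arm: rate(*totals[arm]))

print(segment_winner)   # A wins in both segments
print(overall_winner)   # B wins in aggregate
```

The reversal comes from the traffic mix: B received most of its visitors from the high-converting desktop segment, so its aggregate rate is inflated even though it is worse in both segments.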

Time and complexity

Setting up MAB tests tends to be more complicated than setting up A/B tests, and this complexity increases the chance of errors. You’ll need to have a lot of trust in your algorithms to make decisions based on the results they produce. MAB tests can also take longer to settle on a winner that gets all the traffic, and you may not want to wait that long.

When to use Multi-Arm Bandits

Exploratory tests

The one situation where MAB testing is very useful is for exploratory tests without a real hypothesis — like Google’s 41 shades of blue test. Google employees couldn’t agree on which color of blue would be best. They initially chose a compromise color, but then tested to determine which colors led users to click more. They didn’t really have an idea of which shade would be best, but they thought there might be a difference, so why not test it. [I do not believe Google used a Bandit for that specific test, but this kind of optimization test aligns well with its use. They claim it resulted in an additional $200M in annual revenue. I believe the successful blue was #1A0DAB.]

Independent tests

If you can select one metric, and you are certain that the metric in the area you’re testing is independent of other metrics, MAB testing can work out well. However, this situation is rare. Metrics of user interactions tend to be highly interdependent and hard to isolate. One example of where you might find independent variables is in search marketing landing pages, where the traffic has not experienced your product before, and you can shape their experience without affecting other traffic or flows.

In a rush

There are situations where you want to deliver the best experience to your users but don’t have time to run a full A/B test, or the context is temporary, such as a promotion or a topical event. In these cases, maximizing your conversions is more important than being statistically perfect. You might consider adding all variations into a bandit and letting it run. You can also add and adjust variations as often as you like and let the algorithm adjust the traffic to the best version.

Contextual Bandits: MAB with personalization

Imagine you have 20 possible headlines for a page and want to optimize conversions. A traditional MAB test is winner takes all — whichever headline performed the best across all users would win. But what if younger users prefer headline C and older users prefer headline D? Your experiment doesn’t take age into account so the result would be suboptimal. If you thought age was really important, you could design a multivariate test up front, but that approach doesn’t scale well once you start adding many dimensions (country, traffic source, gender, etc.). Contextual Bandits address this limitation by optimizing over all possible permutations.
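As a rough sketch of the idea, the code below keeps a separate epsilon-greedy bandit for each context value. This is a deliberate simplification: real contextual bandits usually use a model that generalizes across many dimensions instead of one table per context. The class name `PerContextBandit`, the age groups, and the conversion rates are all hypothetical.

```python
import random
from collections import defaultdict

class PerContextBandit:
    """Epsilon-greedy bandit that keeps separate stats per (context, arm)."""

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.shows = defaultdict(int)
        self.conversions = defaultdict(int)

    def choose(self, context):
        # Explore a random arm a small fraction of the time.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)

        def observed_rate(arm):
            shown = self.shows[(context, arm)]
            # Treat unseen arms optimistically so each gets tried once.
            return self.conversions[(context, arm)] / shown if shown else 1.0

        return max(self.arms, key=observed_rate)

    def record(self, context, arm, converted):
        self.shows[(context, arm)] += 1
        self.conversions[(context, arm)] += int(converted)

# Hypothetical truth: younger users prefer headline C, older users prefer D.
true_rates = {
    ("younger", "C"): 0.20, ("younger", "D"): 0.05,
    ("older", "C"): 0.05, ("older", "D"): 0.20,
}
bandit = PerContextBandit(["C", "D"])
sim = random.Random(1)
for _ in range(10000):
    context = sim.choice(["younger", "older"])
    arm = bandit.choose(context)
    bandit.record(context, arm, sim.random() < true_rates[(context, arm)])

favorite = {
    ctx: max(bandit.arms, key=lambda arm: bandit.shows[(ctx, arm)])
    for ctx in ("younger", "older")
}
print(favorite)
```

Note that the output is a rule per context, not a single winner, and the table of rules grows with every dimension you add.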

For many use cases, Contextual Bandits can give you the best possible conversion rates. But this comes with a cost. Unlike MAB or traditional A/B tests, you don’t end up with a single “winner” at the end of a Contextual Bandit experiment. You instead end up with complex rules like “young Safari visitors from Kentucky see headline B.” Besides being hard to interpret, imagine doing this on multiple parts of a page — you’ll quickly end up with thousands of possible permutations. This results in either much longer QA or a lot more hard-to-reproduce bugs. From my experience, this cost usually outweighs the benefits for most use cases.

Conclusion

Multi-arm bandits are a powerful tool for exploration and hypothesis generation. They certainly have their place in sophisticated data-driven organizations. They are great for regret minimization, especially for a short-running campaign. However, they are often abused or used for the wrong experiments. In real-world use, there are cases where MAB is not the best tool. Decisions involving user performance and data are nuanced and complicated, and giving an algorithm a single metric to optimize and removing yourself completely from the decision-making process is asking for trouble in most cases. Usually, hypothesis-based testing leads to superior results. You’ll end up with the ability to dig into the data to understand what is happening in each test variation, and use this to uncover new test ideas. Experimenting this way leads to cycles of testing that are the hallmark of successful A/B testing programs.

A/B Testing Platforms: Build vs Buy

As an engineer, I like to build things, but as a practical business matter, the decision is usually less straightforward. As much as it pains me to say it, these days for A/B testing platforms it is usually more prudent to use a 3rd party platform. In this article, we’ll discuss the pros and cons of building vs buying A/B testing platforms.

Building

Building your own A/B testing platform has a few undeniable advantages. If you have an engineering team that has the ability to take this on, the tight coupling to your code and deployment systems can create a great developer experience. Tests can be deeply integrated into the code, unlocking some really interesting and complex experiments. This can bring the A/B testing deep into your product process, and can drastically speed up testing time. Coupled with a feature flag or similar deployment system, you can test almost every feature and update very easily. If you have an existing data warehouse, and if you’re considering building your own A/B testing platform, you almost certainly do, you can tie your system into these existing metrics. If you have an aggregate metric or a custom leading indicator of value to your users, you can fairly easily test against this directly. High-performance sites typically leverage a lot of caching, which can cause problems when A/B testing. A custom-built solution can account for many levels of caching without affecting performance too much.
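To give a feel for what "deeply integrated into the code" can look like, here is a minimal sketch of deterministic variation assignment by hashing. It is one common approach, not a description of any specific platform; the function name `assign_variation`, the experiment key, and the user IDs are hypothetical.

```python
import hashlib

def assign_variation(user_id, experiment_key, variations=("control", "treatment")):
    """Map a user to a variation, stably, without storing any state."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode()).hexdigest()
    # Turn the first 32 bits of the hash into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return variations[int(bucket * len(variations))]

# The same user always lands in the same variation for a given experiment.
print(assign_variation("user-42", "new-checkout"))

# Across many users the split comes out close to even.
counts = {"control": 0, "treatment": 0}
for i in range(10000):
    counts[assign_variation(f"user-{i}", "new-checkout")] += 1
print(counts)
```

Because the assignment depends only on the user ID and the experiment key, it can run anywhere in the stack (including behind a cache layer) and still give consistent results.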

On the other hand, building has its disadvantages. It will take some time to build a good, working system. At my previous workplace, we were continually improving our system. We managed to get a simple system working in about a month, but it had a terrible or nonexistent UI and many bugs and errors. Some of these bugs took months to find, too, which meant we had to throw out many tests. Like most software, we were never finished, but I’d estimate that the stable, production-ready system took about 3 to 5 developer-months.

A/B testing is full of counterintuitive results, and if there is any doubt that the platform could have messed up the data or implementation, you can bet it will be blamed. Building trust is vital for a successful A/B testing program, so this is a major problem with home-built systems. You may have to contract with statisticians to make sure the math is correct, unless you have expertise in your data team.

Building a front-end testing system is quite hard to do, so most internally developed platforms will opt for only back-end implementations. This isn’t usually a problem, except that it makes engineering the bottleneck for launching tests, even simple ones.

Buying

Buying comes with some very obvious positives. Typically, you can be up and testing in a matter of hours from signing the contract. The statistics engines are proven, which means leadership is more likely to trust that the results are accurate. The ease of use of the platform can allow for greater participation in your testing program. Front-end tests can be implemented without any engineering help.

However, there are also many problems with existing 3rd party platforms. Usually, they use their own event tracking, meaning that it’s impossible to test against your existing metrics or data warehouse (GrowthBook, by the way, does let you do this). It also means yet another place you’re sending your user data, which might lead to you being out of compliance with the various privacy laws. The kinds of metrics you can test against are typically limited, with most only supporting binomial events (yes/no, or clicked/didn’t click).

If you open up the platform for many of your team to start tests, you can end up with interfering tests and make the results meaningless. You also risk running it like the Wild West, where the tests make no sense, are outside your design style guide, or lack strategic direction.

Front-end testing can cause pages to flicker as they load, as the payload is injected into the page. Flickering text or buttons can render results meaningless, as it either slows the page down or makes users more aware of flashing elements. Deeper code integration for back-end testing is harder to implement for a range of people, as it can be a round peg in a square hole. Often, the back-end and front-end analytics are not talking to each other.

The biggest impediment to buying, as with most things, is the cost. Many A/B testing platforms charge in a way that increases the more you test. Since you want to be testing a lot, this can mean that things get very expensive. Other solutions, like Google Optimize, are free as long as you stay within their usage limits, but once you go over, it’s $150k. It is also quite limited in terms of the tests you can run. It’s not uncommon for a medium-sized site to have the cost of the A/B testing platform be equivalent to one quarter to half of a full-time developer’s salary.

Conclusion

Build vs. buy can be a hard decision. A/B testing platforms have come a long way over the recent years, and are adding enough features that you should think really hard before building one yourself. The costs of building and maintenance are probably much higher than you expect.

By the way, we built GrowthBook based on our experiences with exactly this decision, and it addresses all the negatives discussed above. We tie into your existing data infrastructure, are built to make a seamless developer experience, have a front-end test editor, and have tools to encourage a culture of experimentation. We have years of experience doing experimentation and building these tools. We offer all the advantages of building your own system, without the negatives of buying. Spend your time on products that add value for your users. Contact us and let us show how we do A/B testing better.

Why the Impact of A/B Testing Can Seem Invisible

The other day, I was speaking to a friend of mine who lamented that he didn’t really believe in A/B testing. He noticed over the years that his company has had plenty of successful test results, but hasn’t been able to see the tests' effects on the overall metrics they care about. He was expecting to see inflection points around the implementation of successful experiments. Quite often, companies run A/B tests that show positive results, and yet when overall metrics are examined, the impact of these tests is invisible. In this post, I’ll discuss the reasons this happens and ways to avoid or explain it.

Confusing Statistics

Statistics can be remarkably counterintuitive, and it's quite easy to reach the wrong conclusions about what the results mean. Each A/B testing tool uses a statistical engine for its results. Some use Frequentist, some use Bayesian, and others use more esoteric models. Often, they show you the confidence level (or p-value) and the percent improvement right next to each other, making it very easy to believe that they are related. For instance, it's tempting to read the results as “We are 95% confident that there is a 10% improvement,” when what they actually say is “We saw 10% improvement, and there is a 95% chance that this test is better than the baseline.” With the second explanation, the test might actually have much smaller improvement, and there is even a 5% chance that it wasn’t an improvement at all.

Many A/B testing tools rely on you having successful tests, making you seem like a hero for the tests’ success, since they want you to keep paying them. They are not incentivized to do A/B testing properly and to be honest with the statistics. The end result is that it is very easy to misinterpret your results, and your tests may not be as successful as you think.

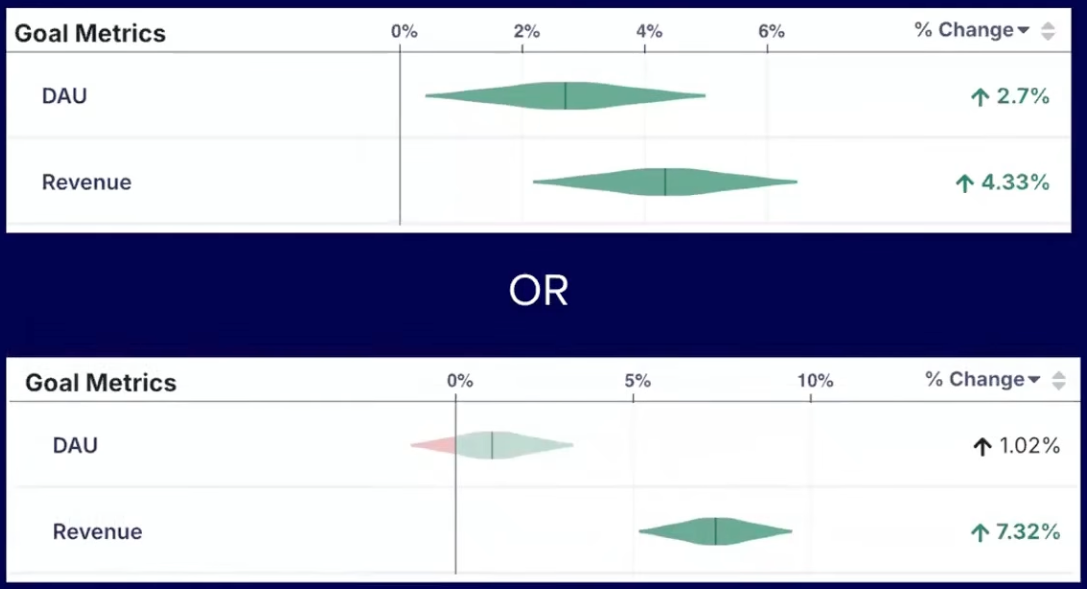

Solution: Use a tool with a statistical range

Use a tool that gives you a statistical range of likely outcomes rather than one value. This discussion gets far more complicated, and I’ll save Frequentist vs Bayesian for another post. The short version: Bayesian approaches offer a probability range, giving you a much more intuitive way to understand the results of your experiment. If the improvements of a test are exaggerated, you will erode trust in the long-term results of A/B testing.
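As an illustration of what a range looks like, here is a minimal Bayesian sketch using Beta posteriors and simulation. It assumes a yes/no conversion metric and a flat prior, and it is not the statistics engine of any particular tool; the function name `compare` and the visitor counts are made up.

```python
import random

def compare(control, variation, draws=20000, seed=0):
    """Each argument is (visitors, conversions).

    Returns the chance the variation beats control and a 95% interval
    for the relative uplift, estimated by sampling Beta posteriors.
    """
    rng = random.Random(seed)
    uplifts = []
    for _ in range(draws):
        a = rng.betavariate(1 + control[1], 1 + control[0] - control[1])
        b = rng.betavariate(1 + variation[1], 1 + variation[0] - variation[1])
        uplifts.append(b / a - 1)
    uplifts.sort()
    chance_to_win = sum(u > 0 for u in uplifts) / draws
    low = uplifts[int(0.025 * draws)]
    high = uplifts[int(0.975 * draws)]
    return chance_to_win, (low, high)

# Hypothetical test: 5.0% vs 5.5% conversion, an observed 10% uplift.
chance_to_win, (low, high) = compare(control=(10000, 500), variation=(10000, 550))
print(f"chance to beat control: {chance_to_win:.1%}")
print(f"95% interval for uplift: {low:+.1%} to {high:+.1%}")
```

With these numbers the observed uplift is 10%, but the interval is wide and still includes zero. Reporting the range next to the headline number makes it much harder to oversell the result.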

The Peeking Problem

The “peeking problem” is where the more you watch your tests for significance, the more likely it is that the test will show you an incorrect significant result. If you’re constantly watching the test until it “goes significant,” and then acting on it by stopping the test, you’re likely going to see the result of random chance, and not necessarily an actual improvement.

You have not captured meaning; you have only captured random noise. Instead of asking whether the difference is significant at a certain predetermined point in the future, you are asking whether the difference is significant at least once within the test. These are completely different questions. If you call an experiment as soon as it reaches significance, you may not see its effect on your overall metrics. You “peeked” and only saw random noise.
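You can see the effect with a small simulation. The sketch below runs A/A tests (both variations have the same true conversion rate, so every "significant" result is a false positive) and compares stopping at the first significant peek against checking once at the end. The rates, batch sizes, and number of peeks are arbitrary choices for illustration.

```python
import random
from statistics import NormalDist

Z_CRITICAL = NormalDist().inv_cdf(0.975)  # two-sided test at alpha = 0.05

def is_significant(n, conv_a, conv_b):
    """Two-proportion z-test with n visitors in each variation."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = (2 * pooled * (1 - pooled) / n) ** 0.5
    if se == 0:
        return False
    return abs(conv_a - conv_b) / n / se > Z_CRITICAL

def false_positive_rates(experiments=400, checks=20, batch=100, rate=0.1, seed=0):
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(experiments):
        conv_a = conv_b = 0
        ever_significant = False
        for check in range(1, checks + 1):
            # Both variations share the same true rate: there is no real effect.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            significant = is_significant(check * batch, conv_a, conv_b)
            ever_significant = ever_significant or significant
        peeking_hits += ever_significant  # stopped at any significant peek
        final_hits += significant         # looked only once, at the end
    return peeking_hits / experiments, final_hits / experiments

peeking_rate, final_rate = false_positive_rates()
print(f"false positives when peeking: {peeking_rate:.1%}")
print(f"false positives at fixed end: {final_rate:.1%}")
```

The single look at the end stays near the nominal 5% error rate, while stopping at the first significant peek produces false positives several times as often.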

Solution: Predefine your sample size

There are a few solutions. One is to estimate the sample size for the experiment up front, then stop the test when you reach that sample size and draw conclusions from that data. However, this has its own problems (for example, you can’t stop early, even when a variation is clearly losing). The other options are to use Bayesian statistics, which has less of this problem because it always gives a probabilistic range; to use sequential analysis; or to apply a multiple testing correction each time the results are examined.
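For the first option, a rough sample size estimate can be computed with the standard normal-approximation formula for comparing two proportions. This is a sketch under assumed defaults (5% significance, 80% power, a yes/no metric); the function name and the example baseline are hypothetical, and your testing tool may use a different method.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed in each variation to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical: 5% baseline conversion, looking for a 10% relative lift.
n = sample_size_per_variation(baseline_rate=0.05, relative_lift=0.10)
print(n)
```

For this example the answer is roughly 31,000 visitors per variation. Halving the lift you want to detect roughly quadruples the required sample, which is worth knowing before you commit to a test.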

Micro Optimizations

One of our growth teams spent a lot of time and effort coming up with experiment ideas for a section of our site, which they correctly surmised could be significantly improved. What they failed to take into account was that the traffic to that section was very low. Even if they were extremely successful, the overall impact would be low.

Hypothetically, even if they increased the conversion rate by 10%, given that this section represented only 10% of the overall converting traffic, they would’ve increased revenue by only 1%, which is likely not noticeable. It is very easy to focus on that 10% win and celebrate it, while not acknowledging that the raw numbers are low.

Solution: Better prioritization

Be honest about your traffic numbers, estimate the possible impact, and sort by this. There are many ways to prioritize test ideas, each with its pros and cons, but it’s important to choose one.

You could also use a system like GrowthBook, which has these features built in, including our Impact Score. Make sure your team is aware of the current state of your traffic and conversion numbers (if that is your goal), so they can focus on high-impact sections of your product.
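The arithmetic from the example above is simple enough to sketch. The snippet below is not GrowthBook's Impact Score; it is only the back-of-the-envelope estimate of overall lift (share of converting traffic times expected lift), applied to a few made-up test ideas.

```python
# Hypothetical test ideas with rough estimates.
ideas = [
    {"name": "low-traffic section redesign", "traffic_share": 0.10, "expected_lift": 0.10},
    {"name": "checkout copy tweak", "traffic_share": 0.80, "expected_lift": 0.03},
    {"name": "pricing page layout", "traffic_share": 0.40, "expected_lift": 0.05},
]

def overall_impact(idea):
    """Estimated lift on the overall metric, not just within the section."""
    return idea["traffic_share"] * idea["expected_lift"]

ranked = sorted(ideas, key=overall_impact, reverse=True)
for idea in ranked:
    print(f"{idea['name']}: {overall_impact(idea):.1%} overall")
```

The 10% win on a section with 10% of the traffic comes out at 1% overall and ranks last, behind more modest lifts on higher-traffic areas.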

Real Impact Gets Lost in the Noise

In the previous section, we talked about a 1% win as being lost in the noise. But 1% improvements are great! If you can start stacking consistent small wins, this can have a real impact on your overall metrics — but you’re unlikely to see any noticeable changes on a top-level metric, particularly if you’re testing frequently.

Solution: Look for long-term trends

There are no great solutions for this. Make sure that people looking at the overall numbers are aware that it’s hard to see small signals amid the noise of larger metrics. It doesn’t mean your tests aren't having an effect. If possible, look for longer trends or ways to isolate the cumulative effects. Small changes can compound and have large effects.
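To put a number on the compounding, here is a tiny sketch. It assumes each win is independent and multiplies with the others, which is an idealization; real effects can overlap or fade over time.

```python
def cumulative_lift(wins, lift_per_win=0.01):
    """Combined lift from stacking several small multiplicative wins."""
    return (1 + lift_per_win) ** wins - 1

for wins in (1, 6, 12, 24):
    print(f"{wins} wins of 1%: {cumulative_lift(wins):.1%} cumulative")
```

Under that assumption, twelve 1% wins compound to about 12.7%, even though none of them would be visible on its own in a noisy top-level chart.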

Optimizing Non-Leading Indicators

At my previous company, we noticed that users who engaged with multiple content types converted and retained at higher rates than those who engaged with only one type. We used this engagement as a leading indicator for lifetime value. We then spent some time trying to get all users to engage with multiple types of content. We achieved this goal, but the actual conversion and revenue numbers decreased as a result (we pushed users into a pattern they didn’t want). We fell into the classic “correlation is not causation” problem, and our chosen proxy metric was meaningless.

Many companies want to optimize for metrics that are not directly measurable, or are measurable but require time frames that are impractical to test. In this situation, companies use proxy metrics as leading indicators for their actual goals. If you improve a proxy metric and fail to see movements in your goal metrics, it is likely that your assumptions about your proxy metrics are wrong.

Solution: Measure against your actual goals

Measure and test against the goals you’re trying to achieve directly if you can. If your goal is revenue, you may need to use a platform like GrowthBook, which supports many more metric types than just binomial. If you have to use a proxy metric, quickly test the assumptions you made and make sure they’re causally linked, not just correlated.

Not enough tests

Typically, only 10% to 30% of A/B tests are successful. The chances that any one of these is a big win are even lower. If you’re running 2 tests a month, 24 tests a year, you may have about 5 of them succeed on average. If 10% of those are very successful, you may not have any big wins in a year. This can be the downfall of growth teams and experimentation programs. If you’ve been running a program for a while and you’re not seeing the results, it could be that you’re not running enough tests.
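The numbers above can be worked through in a few lines. This sketch assumes a 20% success rate (the midpoint of the 10% to 30% range), that 10% of successes are big wins, and that tests are independent of each other; the function name is made up.

```python
def expected_outcomes(tests_per_year, success_rate=0.2, big_win_share=0.1):
    """Expected wins, expected big wins, and the chance of no big win at all."""
    wins = tests_per_year * success_rate
    big_wins = wins * big_win_share
    p_no_big_win = (1 - success_rate * big_win_share) ** tests_per_year
    return wins, big_wins, p_no_big_win

for tests in (24, 52, 104):
    wins, big_wins, p_none = expected_outcomes(tests)
    print(f"{tests} tests/year: ~{wins:.1f} wins, ~{big_wins:.2f} big wins, "
          f"{p_none:.0%} chance of no big win")
```

At 24 tests a year, these assumptions give about a 62% chance of going the whole year without a big win, and that chance drops quickly as the number of tests goes up.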

Solution: Test more

Test more. If you find your odds of success low, and you’re able to test more often, your chances of a successful result go up. Just make sure you have an honest way to prioritize your tests and focus on high-impact areas.

Trust is a critical part of successful A/B testing programs, and a great way to earn trust is to show results. Be honest with your statistics, prioritize well, and test as much as you can. Hopefully, by implementing, or at least being aware of, the problems and solutions above, you can make your experimentation program more successful and see its impact on overall metrics.

Why A/B Testing Is Good for Your Company Culture

Six years ago, my previous company had a problem. We had the habit of building large, time-intensive projects based on perceived market trends or tenuous research, and far too often, these projects failed to grow our business. We also had far too many meetings debating small details like the placement of buttons or which colors to use, and some of them even got contentious. Agile delivery counteracts a lot of these problems, but without the right systems and tools in place, we still ran into issues that slowed down progress to our goals. The solution we came up with evolved slowly, but looking back, there were a few simple changes to our process and some new tools that had a remarkable impact on accelerating our growth. As a bonus, we noticed it had positive effects on our company culture as well.

The first step we took was to get our data in order. We evaluated many BI (Business Intelligence) and analytics tools and ultimately decided that none of these tools gave us a deep enough understanding of our users. We opted instead to create a data warehouse where we could run our own queries and analytics, and then created reports using data visualization tools (which, by the way, are orders of magnitude cheaper than the BI tools). This had the additional bonus of having the data in the right shape (roughly) as we grew our data team.

The second step was to add an AB testing platform. At the time, there weren’t AB testing platforms on the market that we were happy with, so we built our own. Building it in-house meant that the AB testing could be easily and deeply integrated into the code, a huge advantage.

Finally, we changed our product process to incorporate a lot of testing. For each new project or iteration, teams were required to create a valid hypothesis about why it would be successful, and determine specific success metrics (for example, increasing retention or engagement rates). Then their hypothesis was tested against the control with our AB testing framework to see which won. After a few cycles of this, we were able to drastically increase the cadence of our testing.

Lessons learned

Becoming data-driven had remarkable and wide-ranging effects on our product process and company culture. Let’s go over the top four effects.

Alignment

Adopting the steps above allowed us, as a company, to focus on the metrics that would drive our business success. Having these clearly defined goals removed a lot of ambiguity about projects because we had clear success metrics. By making sure we defined our success metrics at the start of our planning cycle, we could drive alignment around the goals. This helped reduce the inevitable scope creep and pet features from inserting themselves — or at least gave us the framework to say “yes, but not now.” Knowing what success meant also allowed our developers to start integrating the tracking needed to know whether the project would be successful from the beginning, which was too often forgotten and done only as an afterthought. We also got in the habit of building the reports and dashboard in parallel to see what the current behavior was (if any), so they would be ready for tracking the projects’ effects and sharing results with the company.

Speed

The meetings where we reviewed designs or implementations became much faster to get through, as we would often say, “Let’s test it.” What tended to happen was that people let their opinions or biases affect their decisions. The biggest problem with these was that most of the time, we were not our users; we were essentially guessing what our users would prefer — and this was an opinion. When there was a disagreement between two opinions, it was very easy to take it personally or defer to the HiPPOs (Highest Paid Person’s Opinion). By focusing on which metrics defined success, we could remove the ego from the decision process. Whenever we found ourselves with an intractable difference of opinions, the answer became “let’s AB test it.” This allowed us to move on, increased the speed of development, and shortened the time between iterations. When properly implemented, AB testing should be straightforward and add minimal project overhead.

Intellectual humility

If there is one thing I’ve learned from our AB testing, it’s that most people are really bad at predicting user behaviors — myself included. For example, as an education company, we had to be sure that our members were over 13 to legally register. We had quite a number of ideas on how to do this, from small checkboxes on the user form to a full-screen statement, “You must be over 13 to continue.” When it came to AB testing these ideas, I felt certain that the minimal treatment would win. After all, the accepted wisdom is that one should reduce friction on the user forms by simplifying them as much as possible. However, the winner was the full-screen version, and by a lot! AB testing is nothing if not humbling. This was great news for our company as this attitude was incorporated into our culture — good ideas can come from anywhere, and no one person in our company is the arbiter of what is “right” and “wrong.” The HiPPO’s ideas are just as likely to be right or wrong as anyone else’s ideas.

Team collaboration

Initially, we had a growth team coming up with all the test ideas. However, since the odds of successful AB tests were (and are) low, having just one team come up with all the test ideas fundamentally limited our chances of big wins. We were aiming to run as many potentially high-impact tests as we could. We found that inviting ideas from the entire company opened up ideas we hadn’t considered, and in turn, led to some really good results. As a bonus, we also improved the culture of inclusion by inviting everyone to participate in the process.

In closing, moving to a data-driven process that focused on hypothesis-based AB testing had a remarkable impact on our growth and culture. We were able to choose the metrics we wanted to move and focus the teams on moving them. The results of these projects were directly measurable. We experienced some remarkable single tests that increased our revenue by ~20%. The excitement of these wins was palpable. The teams started executing faster and had greater alignment and collaboration, which was good for the company and great for our users.
