What To Do When an A/B Test Doesn't Win

An A/B test with no significant result isn't a failed experiment. It's one of the most common outcomes in the industry.

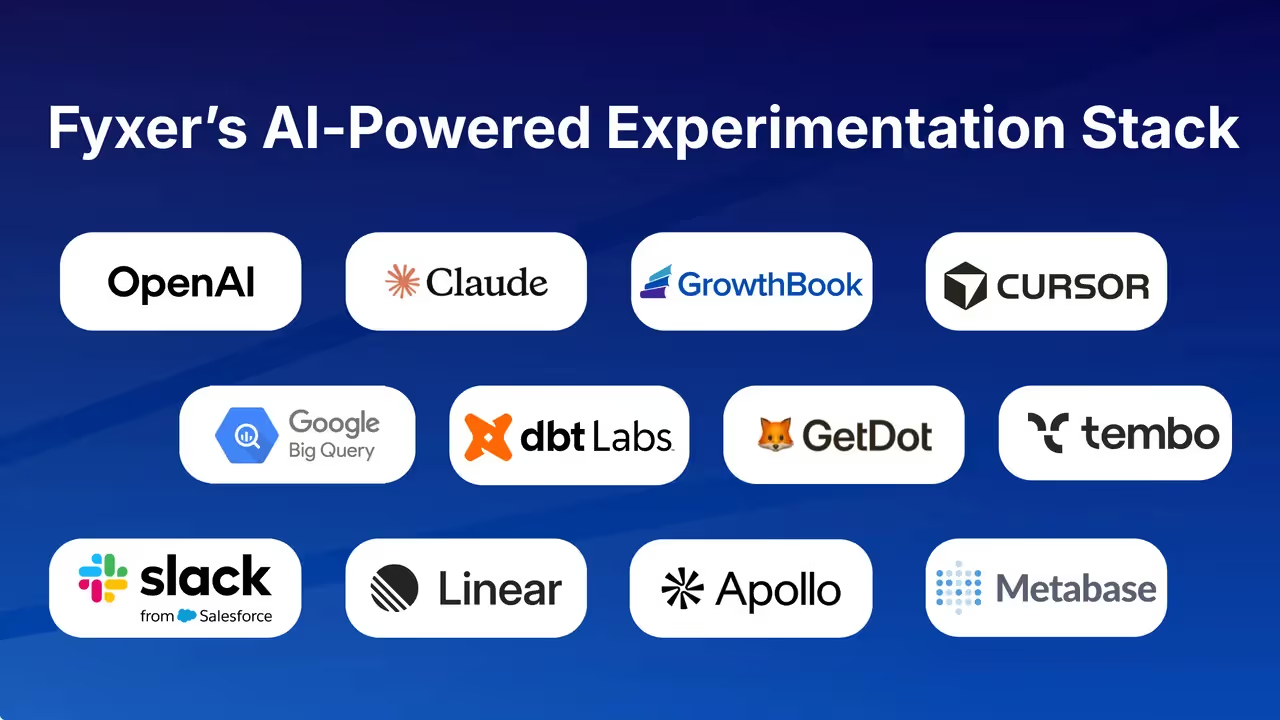

According to GrowthBook's experimentation data, only about one-third of tests produce a clear winner. Another third show no effect, and the final third actually hurt the metrics they were designed to improve. That means a flat result isn't a sign something went wrong. It's the expected output of a well-run experiment.

The problem isn't the result. It's what most teams do next—which is usually nothing useful. This guide is for engineers, PMs, and data teams who want to stop treating null results as dead ends and start treating them as diagnostic inputs.

Whether you're new to experimentation or just tired of watching flat tests get filed away and forgotten, here's what you'll learn:

- How to read a confidence interval to tell the difference between "we don't know yet" and "we genuinely found nothing"

- How to diagnose whether your test was underpowered before you blame the hypothesis

- How to turn a non-significant result into a sharper next experiment

- How to make a defensible shipping decision when the data doesn't hand you a winner

The article moves in that order—from reframing what a null result actually means, to diagnosing what went wrong, to extracting forward value, to making the call on what to do with the feature.

Each section is practical and skips the theory that doesn't change what you do on Monday.

Most A/B tests don't win—and that's exactly how it should work

You ran the test, waited for statistical significance, and got... nothing. No winner, no loser, just a confidence interval straddling zero and a result that feels like wasted time.

That frustration is real, especially when there's organizational pressure to ship something, justify the engineering investment, or show that your experimentation program is producing value.

Here's the reframe: your test didn't fail. It performed exactly as the statistics predict it should.

The industry benchmark: one-third win, one-third flat, one-third hurt

According to GrowthBook's experimentation documentation, the industry-wide average success rate for A/B tests is approximately 33%. The distribution breaks down roughly into thirds: one-third of experiments successfully improve the metrics they were designed to improve, one-third show no measurable effect, and one-third actually hurt those metrics.

Read that again. A null result isn't an outlier—it's tied for the single most common individual outcome. If you ran a hundred experiments and a third of them came back flat, you'd be tracking exactly with the industry baseline. The test that "went nowhere" is, statistically speaking, the expected result.

There's an additional nuance worth noting: the more optimized your product already is, the lower your win rate tends to be. Teams working on mature, heavily iterated products should expect to see win rates that fall below 33%, not above it.

A declining win rate in a maturing experimentation program is often a sign of health, not dysfunction—it means you've already shipped the obvious improvements and are now testing genuinely uncertain hypotheses.

Why the math means most tests shouldn't win

If every test your team ran produced a statistically significant winner, that would actually be a warning sign. It would suggest you're only testing changes you already know will work—low-risk, high-confidence tweaks that don't require a controlled experiment to validate. A high win rate can indicate a poorly calibrated experimentation program, not a successful one.

The statistical machinery of A/B testing is deliberately conservative. It's designed to require substantial evidence before declaring a winner, which means it will correctly return inconclusive results when the true effect is small, absent, or too subtle to detect at your current sample size. That conservatism is a feature. It's what prevents you from shipping changes that appear to work but don't.

It's worth acknowledging that not all null results are identical. Some are genuine—the change had no real effect on user behavior. Others are Type II errors, or false negatives, where a real effect exists but the test wasn't powered to detect it.

That distinction matters and deserves its own diagnostic process, which we'll cover in the next section. For now, the key point is that both categories are normal and expected outputs of a well-functioning experiment.

Reframing the scoreboard: 66% of tests still produce the right decision

Here's the reframe that makes this actionable when you're talking to stakeholders: the 33/33/33 split doesn't mean you're only winning a third of the time. It means you're making the clearly correct decision roughly two-thirds of the time.

Shipping a feature that won is a win. Not shipping a feature that would have hurt your metrics is also a win—arguably a more important one, because the downside of shipping a bad change is often larger than the upside of shipping a good one.

As GrowthBook's documentation puts it: "Failing fast through experimentation is success in terms of loss avoidance, as you are not shipping products that are hurting your metrics of interest."

That's not a consolation prize framing. It's an accurate description of what experimentation programs are actually for. The goal isn't to generate wins—it's to make better decisions under uncertainty. A null result that prevents a harmful rollout is the system working exactly as designed.

Mature experimentation teams track win rate as a program health metric, not just a success metric. Experimentation platforms that build win rate tracking into their analytics dashboards do so to help teams calibrate whether they're taking the right mix of bold bets and incremental changes. The expectation is that win rates will be low and variable, and that this is a normal operating condition, not a problem to be solved.

Before you call it inconclusive, check what the confidence interval is actually telling you

A p-value above 0.05 or a "probability of winner" below 95% tells you one thing: the test didn't cross your significance threshold. It doesn't tell you why.

That distinction matters enormously, because two tests can both fail to reach significance while telling completely different stories about what actually happened. The confidence interval is what separates those stories—and most practitioners never read it carefully enough to notice.

What a confidence interval actually measures

The plain-language version: a confidence interval is the range of effect sizes your data is consistent with. If your variant's CI on conversion rate lift runs from -3% to +8%, your data can't distinguish between a small negative effect, no effect, and a moderately positive one.

The key practical rule is simple—if the CI includes zero, you cannot rule out that the true effect is zero. But that's not the same as proving the effect is zero, and conflating those two conclusions is where most post-test analysis goes wrong.

GrowthBook surfaces confidence intervals as a first-class output in both its frequentist and Bayesian engines (where the Bayesian equivalent is called a credible interval). Either way, the same interpretive logic applies: the position and width of that interval are telling you something specific, and you should read both before drawing any conclusion.
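To make the "range of effect sizes your data is consistent with" idea concrete, here is a minimal sketch of a frequentist interval on the absolute difference in conversion rate. It uses a plain Wald (normal approximation) interval, which is a textbook method and not necessarily what any particular platform computes. The function name and the visitor counts are hypothetical, chosen only for illustration.

```python
from statistics import NormalDist


def diff_confidence_interval(conv_a, n_a, conv_b, n_b, level=0.95):
    """Wald interval for the absolute difference in conversion rate (B minus A)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Standard error of the difference between two independent proportions.
    se = (p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b) ** 0.5
    z = NormalDist().inv_cdf(0.5 + level / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Hypothetical counts, for illustration only.
low, high = diff_confidence_interval(conv_a=500, n_a=10_000, conv_b=530, n_b=10_000)
print(f"95% CI for absolute lift: [{low:.4f}, {high:.4f}]")
print("Includes zero:", low <= 0 <= high)
```

With these made-up numbers the interval straddles zero, so the data can't rule out a true effect of zero. Whether that means "don't know yet" or "genuinely flat" depends on how wide the interval is relative to the effects you care about.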

The wide interval: you don't have enough data yet

A wide confidence interval straddling zero is the signature of an underpowered test. The interval is wide precisely because there isn't enough data to narrow down where the true effect lies. The test hasn't found no effect—it hasn't found anything with precision.

A real example from the practitioner community illustrates this well: a team ran a test with only 24 visitors in one group and 29 in another. Their testing tool reported "96% confidence." A manual p-value calculation returned p = 0.0706—not significant.

The tool's output was misleading because the sample was so small that the underlying confidence interval was wide and unreliable, even if the tool didn't surface that directly. The root issue wasn't the math—it was that the test never had enough data to say anything meaningful.

This is the scenario where "inconclusive" genuinely means "we don't know yet." Running the test longer—or redesigning it with a proper sample size calculation—is the right next step. Abandoning the hypothesis based on this result would be premature.

The narrow interval: you looked hard and found nothing

A narrow confidence interval centered near zero is a fundamentally different signal. It means the test did accumulate enough precision to detect a meaningful effect—and didn't find one. The true effect is likely small. This is the scenario where "inconclusive" actually means "genuinely flat."

Here, running the test longer is unlikely to change the conclusion. More data will narrow the interval further, but if it's already tight around zero and excluding effect sizes that would actually matter to your business, you have your answer.

The correct next move is to interrogate the hypothesis—was the change large enough to plausibly shift behavior in the first place?—not to extend the runtime hoping significance eventually appears.

The decision rule: width relative to what you actually care about

The practical question isn't "is this CI wide or narrow in absolute terms?" It's "does this CI exclude effects that would be meaningful to the business?" That's where the concept of a Minimum Detectable Effect becomes the interpretive anchor.

GrowthBook's power analysis is built around this directly—interval halfwidth is a literal variable in the platform's power formula, which means CI width and statistical power are the same problem expressed two different ways.

So before you label a result inconclusive and move on, ask two questions. First: does the CI span a range that includes both practically meaningful positive and negative effects? If yes, you're likely looking at an underpowered test, and the diagnosis belongs in sample size and test design, not in the hypothesis itself.

Second: is the CI narrow and centered near zero, ruling out effects you'd actually care about? If yes, you have a genuinely null result, and the right response is to interrogate the hypothesis and make a shipping decision—not to keep the test running.

These two scenarios demand different responses. Treating them the same is how teams waste months re-running tests that already answered their question, or abandon hypotheses that were never actually tested with enough rigor to know.
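The two questions above can be sketched as a small helper. This is one possible reading, under stated assumptions: `mde` is the smallest lift (in absolute value) that would change your decision, and any interval that straddles zero but fails to rule out meaningful effects in both directions is treated as "we don't know yet". The function name and labels are hypothetical.

```python
def read_interval(ci_low, ci_high, mde):
    """Classify a result by comparing its interval to the smallest effect that matters.

    ci_low, ci_high: bounds of the interval on lift (same units as mde)
    mde: smallest lift, in absolute value, that would change a decision
    """
    if ci_low > 0 or ci_high < 0:
        # The interval excludes zero entirely.
        return "significant"
    if ci_low > -mde and ci_high < mde:
        # Straddles zero but rules out every effect large enough to matter.
        return "genuinely flat"
    # Straddles zero and still allows a meaningful effect in some direction.
    return "underpowered"


print(read_interval(-0.03, 0.08, mde=0.02))    # wide interval
print(read_interval(-0.004, 0.005, mde=0.02))  # narrow interval near zero
```

The design choice worth noticing is that width is never judged in absolute terms. The same interval can be "genuinely flat" for a team that only cares about large effects and "underpowered" for a team that cares about small ones.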

Underpowered tests are the most common reason you're not seeing significance

When a test comes back without a significant result, the instinct is to conclude the hypothesis was wrong. Most of the time, that conclusion is premature. A large share of null results in A/B testing aren't evidence that the change had no effect—they're Type II errors, false negatives produced by a test that was structurally incapable of detecting the effect it was designed to find.

The hypothesis didn't fail. The experiment design did.

That's a meaningful distinction, because a design problem is fixable.

What statistical power actually means in practice

Power is the probability that your test will detect a real effect if one actually exists. GrowthBook's documentation puts it plainly: power is "the probability of rejecting the null hypothesis of no effect, given that a nonzero effect exists."

The industry convention is to design experiments for 80% power. That number sounds reassuring until you flip it around: even a well-designed test has a one-in-five chance of missing a real effect of the size it was built to detect. Run an underpowered test, and that miss rate climbs considerably higher.

Power comes down to three things working together: how many users you tested, how big the real effect actually is, and how noisy your metric is day-to-day. More users, a bigger effect, or a less volatile metric all push power up. Fewer users, a smaller effect, or a metric that swings around a lot all push it down.
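A rough sketch of how those three inputs interact, assuming a conversion-rate metric and a two-sided two-proportion z-test under the normal approximation. For a binary metric the "noise" is determined by the rates themselves. The function name and example values are hypothetical, and a real platform's power calculation may differ.

```python
from statistics import NormalDist


def approx_power(baseline, relative_lift, n_per_arm, alpha=0.05):
    """Approximate power of a two-sided two-proportion z-test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    # Standard error of the difference at the assumed true rates.
    se = ((p1 * (1 - p1) + p2 * (1 - p2)) / n_per_arm) ** 0.5
    nd = NormalDist()
    z_crit = nd.inv_cdf(1 - alpha / 2)
    shift = abs(p2 - p1) / se
    # Probability the test statistic lands beyond either critical value.
    return (1 - nd.cdf(z_crit - shift)) + nd.cdf(-z_crit - shift)


# Hypothetical scenario: 5% baseline conversion, 10% relative lift.
for n in (2_000, 10_000, 50_000):
    print(n, round(approx_power(0.05, 0.10, n), 3))
```

Holding the effect fixed and increasing users per arm pushes power up. Holding users fixed and shrinking the effect pushes it down.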

One lever that gets overlooked: you can increase power without adding a single user, by reducing how much your metric fluctuates. CUPED—a technique that uses data from before the experiment started to filter out background noise—does exactly this.

GrowthBook's own documentation is unusually direct about it: "If in practice you use CUPED, your power will be higher. Use CUPED!"

Warehouse-native experiments have a particular advantage here—pre-experiment covariate data is already accessible in the warehouse, making CUPED implementation more straightforward than in instrumentation-first setups.
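For intuition, here is a minimal sketch of the core CUPED adjustment on synthetic data. It assumes one pre-experiment value per user for the same metric, and it estimates the adjustment coefficient from a single pooled list, which is a simplification. The function name and the simulated numbers are hypothetical, and this is not a description of any platform's implementation.

```python
import random
from statistics import mean, variance


def cuped_adjust(metric, pre_metric):
    """Return CUPED-adjusted values: y - theta * (x - mean(x))."""
    x_bar = mean(pre_metric)
    y_bar = mean(metric)
    # Sample covariance between the pre-experiment and in-experiment values.
    cov = sum((x - x_bar) * (y - y_bar) for x, y in zip(pre_metric, metric)) / (len(metric) - 1)
    theta = cov / variance(pre_metric)
    return [y - theta * (x - x_bar) for x, y in zip(pre_metric, metric)]


# Synthetic users whose in-experiment metric correlates with their past behavior.
rng = random.Random(7)
pre = [rng.gauss(100, 20) for _ in range(2000)]
post = [0.8 * x + rng.gauss(0, 10) for x in pre]

adjusted = cuped_adjust(post, pre)
print("variance before:", round(variance(post), 1))
print("variance after: ", round(variance(adjusted), 1))
```

The adjustment leaves the mean unchanged and removes the part of the variance that pre-experiment behavior already explains. The stronger the correlation between past and current behavior, the bigger the reduction.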

The peeking problem

The most common way tests get corrupted isn't malicious—it's impatience. Peeking means checking your results during a test and making a stopping decision based on what you see, whether that's stopping early because significance appeared or extending the test because it hasn't.

This matters because the statistical framework underlying a fixed-horizon test assumes the sample size was determined in advance and the data was examined once. Every time you check interim results and let that influence when you stop, you inflate your false positive rate and invalidate the power calculation the test was built on.

GrowthBook's experimentation documentation explicitly categorizes this as p-hacking—"manipulating or analyzing data in various ways until a statistically significant result is achieved."

A test stopped at 60% of its required sample size wasn't just unlucky. It was never capable of detecting the effect it was designed to find.
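If you want to see the false positive inflation for yourself, a small A/A simulation does it. This sketch assumes both arms share the same true conversion rate, so every "significant" result is a false positive. It compares stopping at the first significant look against checking once at the end. All names and parameters are hypothetical.

```python
import random
from statistics import NormalDist


def run_aa_test(n_per_arm, looks, rate, rng, alpha=0.05):
    """Simulate one A/A test; return (significant at any look, significant at final look)."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    checkpoints = {n_per_arm * (i + 1) // looks for i in range(looks)}
    conv_a = conv_b = 0
    any_look = False
    final = False
    for n in range(1, n_per_arm + 1):
        conv_a += rng.random() < rate
        conv_b += rng.random() < rate
        if n in checkpoints:
            # Pooled two-proportion z-test at this interim look.
            pooled = (conv_a + conv_b) / (2 * n)
            se = (2 * pooled * (1 - pooled) / n) ** 0.5
            significant = se > 0 and abs(conv_a - conv_b) / n / se > z_crit
            any_look = any_look or significant
            final = significant  # the last checkpoint is the planned endpoint
    return any_look, final


rng = random.Random(42)
runs = [run_aa_test(n_per_arm=1000, looks=10, rate=0.10, rng=rng) for _ in range(300)]
peeking_rate = sum(any_look for any_look, _ in runs) / len(runs)
fixed_rate = sum(final for _, final in runs) / len(runs)
print(f"false positives when stopping at any significant look: {peeking_rate:.1%}")
print(f"false positives when checking once at the end:        {fixed_rate:.1%}")
```

The any-look rate can never be lower than the single-look rate, and with repeated looks it typically lands well above the nominal 5%. That gap is the cost of peeking under a fixed-horizon analysis.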

MDE miscalculation

The Minimum Detectable Effect is the smallest effect size for which your test achieves 80% nominal power. It's the threshold your experiment is built around—and it's where most power problems originate.

The typical mistake is optimism. A team expects a 20% lift because that's what would make the project worth it, so they set their MDE at 20% and calculate a sample size accordingly. But if the true effect of the change is 4%, that test is dramatically underpowered.

It will return a null result even if the treatment genuinely works—not because the idea was wrong, but because the test was never designed to see an effect that small.

The right question when setting an MDE isn't "what lift do we hope to see?" It's "what is the smallest effect that would actually change our decision?" That answer is usually smaller and less flattering than the aspirational number, and it implies a larger required sample size. That's the correct tradeoff to make explicitly, not discover after the fact.
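To see how much that tradeoff costs, here is a sketch of the standard normal-approximation sample size formula for comparing two proportions. It reuses the 20% and 4% lifts from the example above and assumes a 5% baseline conversion rate, which is a made-up figure. The function name is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def required_n_per_arm(baseline, relative_mde, alpha=0.05, power=0.80):
    """Users per arm needed to detect a relative lift with a two-sided z-test."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    var_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * var_sum / (p2 - p1) ** 2)


n_hoped = required_n_per_arm(baseline=0.05, relative_mde=0.20)
n_real = required_n_per_arm(baseline=0.05, relative_mde=0.04)
print("per-arm sample for a 20% MDE:", n_hoped)
print("per-arm sample for a 4% MDE: ", n_real)
print("ratio:", round(n_real / n_hoped, 1))
```

Because required sample size scales with the inverse square of the effect, a test sized for a 20% lift has more than twenty times too few users to reliably detect a 4% one under these assumptions.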

Retroactive power diagnostic

If you've already run a test that came back flat, you can still assess whether the null result was a power problem. Work through these questions against your completed experiment:

- How many observations did you actually collect versus how many were required for 80% power at your stated MDE?

- Did you stop based on interim results rather than a predetermined endpoint?

- Was your MDE grounded in historical lift data, or was it aspirational?

- Were variance reduction techniques applied that could have increased power?

- Did the test run long enough to cover a full business cycle, avoiding day-of-week bias and novelty effects?

If your actual sample fell short of the required sample—particularly below roughly 80% of what was needed—the null result is more likely a power failure than a hypothesis failure.

GrowthBook's experimentation platform supports both prospective and retrospective power calculations, including adjustments for sequential testing, which produces a conservative lower-bound power estimate. Running this calculation after a flat test takes minutes and can save a team from abandoning a hypothesis that deserved a better-designed experiment.
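If you don't have a tool handy, a back-of-the-envelope version of the retrospective check is to ask what effect your collected sample could actually have detected. The sketch below assumes a conversion-rate metric, a two-sided z-test, and equal arm sizes, and it uses the baseline variance for both arms as a simplification. It is not the platform's formula, and the function name and inputs are hypothetical.

```python
from statistics import NormalDist


def detectable_relative_lift(baseline, n_per_arm, alpha=0.05, power=0.80):
    """Approximate smallest relative lift detectable at the given power."""
    nd = NormalDist()
    z_total = nd.inv_cdf(1 - alpha / 2) + nd.inv_cdf(power)
    # Simplification: both arms are assumed to have the baseline variance.
    abs_mde = z_total * (2 * baseline * (1 - baseline) / n_per_arm) ** 0.5
    return abs_mde / baseline


# Hypothetical completed test: 5% baseline, 5,000 users per arm.
achieved = detectable_relative_lift(baseline=0.05, n_per_arm=5_000)
print(f"smallest relative lift this test could reliably detect: {achieved:.1%}")
```

If the number that comes back is far larger than any lift you could plausibly expect from the change, the flat result says more about the design than about the idea.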

A null result is only wasted if you don't interrogate it

A null result only becomes wasted effort if your team treats it as a dead end. The data is still there. The user behavior still happened. The question is whether you're willing to interrogate the result with enough structure to extract something forward-looking from it—or whether you're going to file it under "didn't work" and move on.

Mature experimentation programs do the former. Here's the framework.

Audit the hypothesis and KPI before blaming the data

The most common upstream failure in A/B testing isn't a math problem—it's a logic problem. Either the hypothesis lacked a behavioral mechanism, or the metric you measured wasn't actually connected to the outcome you cared about.

On the hypothesis side, ask yourself honestly: why would this change cause users to behave differently? A hypothesis like "swapping the shade of blue on a CTA button" fails this test. There's no behavioral theory behind it.

Contrast that with something like "adding a testimonial near the conversion point will increase sign-ups because social proof reduces purchase anxiety"—that's a hypothesis with a mechanism. When a null result comes back on a mechanism-free hypothesis, the test didn't fail; the hypothesis was never sound.

On the KPI side, the problem is proxy metrics that feel measurable but don't connect to real outcomes. Click-through rate as a proxy for activation, or time-on-page as a proxy for engagement, can both look fine while the metric that actually matters—conversion, retention, revenue—goes nowhere.

If your primary metric didn't move, ask whether it was the right primary metric to begin with. GrowthBook's ability to add metrics retroactively to a completed experiment is useful here: you can look back at what the test did move, even if the pre-specified metric didn't budge, and use that to sharpen the next hypothesis.

Check whether the change was large enough to plausibly shift behavior

A null result on a small change is not evidence that the underlying idea is wrong. It may simply mean the implementation wasn't bold enough to produce a detectable signal. As one practitioner put it plainly: "If the difference between your A and B versions doesn't meaningfully impact user behavior, you won't see a meaningful result—no matter how perfect your math is."

If your test involved a minor copy tweak or a subtle visual adjustment, the honest follow-on question isn't "does this idea work?" It's "what version of this change would be large enough to actually move behavior?"

Sometimes the right next test is a more extreme variant of the same idea—a full page redesign instead of a headline swap, or a completely rewritten value proposition instead of a single word change.

Mine segment-level data for differential effects

Aggregate null results can hide meaningful effects in subgroups. Breaking down results by new versus returning users, device type, acquisition channel, or geography often reveals that a change worked well for one segment and poorly for another—effects that canceled each other out in the aggregate.

Dynamic Yield puts it directly: "Flat and negative results are valuable: They expose audience segments and guide further tests." GrowthBook's documentation makes a similar point about novelty effects—new users and returning users often respond differently to interface changes because returning users carry expectations shaped by prior experience. That new-versus-returning cut alone is worth running on most null results.

One important caveat: post-hoc segment analysis on a null result is hypothesis generation, not confirmation. Running multiple segment cuts increases the probability of finding a spurious signal by chance.

Any segment-level finding from this analysis needs to be validated in a follow-up test designed specifically for that segment—not shipped based on the exploratory cut alone.
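The arithmetic behind that caveat is short enough to sketch. Assuming the segment cuts are independent and each is tested at the same threshold (real segments often overlap, so treat this as an approximation), the chance of at least one spurious "significant" segment grows quickly with the number of cuts. The Bonferroni correction shown here is one common, conservative adjustment. The function names are hypothetical.

```python
def chance_of_spurious_hit(num_cuts, alpha=0.05):
    """Probability that at least one of num_cuts independent null tests looks significant."""
    return 1 - (1 - alpha) ** num_cuts


def bonferroni_threshold(num_cuts, alpha=0.05):
    """Per-cut significance threshold that keeps the overall error rate near alpha."""
    return alpha / num_cuts


for cuts in (1, 5, 10, 20):
    print(cuts, round(chance_of_spurious_hit(cuts), 3), bonferroni_threshold(cuts))
```

At ten cuts the chance of a false signal is roughly 40%, which is why an exploratory segment finding belongs in the hypothesis queue rather than in a launch decision.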

Document the learning and feed it back into the hypothesis queue

Individual null results are low-value in isolation. In aggregate, they're a compounding organizational asset—if you capture them properly. A shared experiment repository with structured fields (hypothesis tested, primary KPI, result, segment observations, recommended next test) prevents teams from re-testing the same dead ends and reveals patterns across experiments over time.
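As an illustration of what "structured fields" can look like in practice, here is a minimal sketch of an experiment record using the fields listed above. The class name, field names, and example values are all hypothetical, and a real repository would likely add identifiers, dates, and links to the analysis.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ExperimentRecord:
    hypothesis: str
    primary_kpi: str
    result: str
    segment_observations: list[str] = field(default_factory=list)
    recommended_next_test: str = ""


# Hypothetical entry for a flat test.
record = ExperimentRecord(
    hypothesis="A testimonial near the sign-up form reduces purchase anxiety",
    primary_kpi="sign-up conversion rate",
    result="flat: narrow interval around zero",
    segment_observations=["exploratory only: new users trended positive"],
    recommended_next_test="Retest with new users only, sized for a smaller MDE",
)

# Serialize so the record can live in a wiki, a warehouse table, or a repo.
print(json.dumps(asdict(record), indent=2))
```

Keeping the fields fixed is what makes patterns visible later. Free-text write-ups are easy to produce and hard to query.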

A structured experiment repository built around exactly this problem surfaces "the experiments that worked well, and the ideas that didn't" so future work can build on the full history rather than starting from scratch.

The compounding effect is real. Experimentation programs generate a lot of artifacts that are easy to lose. The teams that build institutional memory from their null results are the ones that get sharper hypotheses over time—not because they're smarter, but because they're not repeating themselves.

What to actually do with the feature when the test doesn't decide for you

At some point, every experimentation team hits the same wall: the test ran, the data came in, and nothing was significant. Not harmful, not helpful—just flat. The statistical machinery did its job, and the answer it returned was "we don't know."

But the feature still exists. The code is merged. Someone's waiting on a decision. So what do you actually do?

The answer is more tractable than it feels in the moment, but it requires accepting that "the test didn't decide" is itself a valid outcome that still demands a human call.

Default to the lower-cost option

When a test is genuinely inconclusive, meaning you've already ruled out underpowering and measurement error, the shipping decision should hinge on cost, not on the absence of a winner. The logic is straightforward: a null result from an adequately powered test is not evidence of harm. It's evidence that any effect, positive or negative, is small.

Given that, the decision rule becomes: ship if the feature is already built, adds no meaningful complexity, and shows no negative signal in the confidence interval. Revert if the change introduces technical debt, increases maintenance burden, or adds friction to a critical workflow.

The cost of the change—not the absence of statistical significance—is what should tip the balance.
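Written out as code, the decision rule above might look like the sketch below. It makes one interpretive assumption that you should adjust to your own risk tolerance: "no negative signal" is read as the interval's lower bound not reaching past a loss you've decided you can tolerate. The function and parameter names are hypothetical.

```python
def shipping_call(already_built, adds_complexity, ci_low, tolerable_loss):
    """Cost-based call for a flat, adequately powered test.

    ci_low: lower bound of the interval on lift
    tolerable_loss: largest loss you're willing to risk, as a positive number
    """
    no_negative_signal = ci_low >= -tolerable_loss
    if already_built and not adds_complexity and no_negative_signal:
        return "ship"
    # Technical debt, maintenance burden, or a plausible loss tips it the other way.
    return "revert"


print(shipping_call(already_built=True, adds_complexity=False, ci_low=-0.002, tolerable_loss=0.005))
print(shipping_call(already_built=True, adds_complexity=True, ci_low=-0.002, tolerable_loss=0.005))
```

Note that statistical significance never appears as an input. By this point in the process the test has already told you what it can, and the remaining inputs are about cost.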

This framing matters because it removes the false premise that a non-significant result leaves you with nothing to stand on. It doesn't. "Proves it does no harm" is a legitimate and sufficient standard for shipping. Not shipping something harmful is a real outcome with real value, which is the same point the 33/33/33 benchmark makes in the first section of this article.

One practical note: if your team is running experiments behind feature flags with instant rollback capability, the cost of reverting approaches zero. That changes the calculus.

When you can flip a flag in seconds without a new build, the "revert if complex" path becomes even lower-friction, which means you can afford to be more conservative about shipping changes that show no lift.

The political and risk-management function nobody talks about openly

There's a dimension to A/B testing that practitioners understand intuitively but rarely say out loud: tests aren't just statistical tools. They're also political ones.

A Hacker News commenter with obvious operational experience put it bluntly: "A/B testing is a political technology that allows teams to move forward with changes to core, vital services of a site or app. By putting a new change behind an A/B test, the team technically derisks the change, by allowing it to be undone rapidly, and politically derisks the change, by tying its deployment to rigorous testing that proves it at least does no harm to the existing process before applying it to all users."

This isn't cynicism. It's an accurate description of how mature organizations use experimentation. Even with an inconclusive outcome, the test has already done its job. It derisked the change. It gave the team a defensible basis for whatever decision comes next.

The three ways a new feature typically fails—errors, bugs that affect metrics, or no bugs but negative business impact—are all caught by the test, even when no winner emerges. That's the de-risking function working as designed.

Recognizing this dual role doesn't undermine the statistical integrity of your program. It just means you're operating with a complete picture of what A/B tests are actually for.

The stakeholder conversation: reframing inconclusive as a defensible decision

The hardest part of an inconclusive test is often not the decision itself—it's the conversation with stakeholders who expected a winner. Here's the reframe that holds up under pressure: A/B tests help teams make the clearly right decision roughly 66% of the time. Either you ship something that won, or you avoid shipping something that lost. Both outcomes are wins. An inconclusive result that prevents a bad ship is not a failure; it's the system working.

When stakeholders push back, be direct about the decision logic: "The test showed no harm and no significant lift. We're [shipping/reverting] based on [complexity/cost] considerations, and we've documented the learnings for the next iteration." That's a complete, defensible answer.

One thing worth flagging to leadership: optimizing for win rate as a program KPI creates perverse incentives. Teams that are measured on how often their tests "win" will stop running high-risk, high-reward experiments and gravitate toward safe, incremental changes that are more likely to show significance.

GrowthBook's documentation explicitly flags this as a Goodhart's Law problem. If your stakeholders are demanding winners, they may inadvertently be discouraging the experiments most likely to generate real impact.

When the test comes back flat: power first, interval second, decision third

A flat result isn't a verdict on your idea. It's a prompt to ask three questions in order: Was the test designed well enough to detect a real effect? Did the confidence interval tell you "we don't know yet" or "we looked hard and found nothing"? And given that answer, what's the lowest-cost path forward?

That sequence—power first, interval second, decision third—is the actual workflow behind every well-run experimentation program. The article you just read is built around it.

The diagnostic checklist: power, measurement, and hypothesis quality

Before you blame the hypothesis, check the experiment design. If your sample fell short of what was required for 80% power at a realistic MDE, or if you stopped early based on an interim peek, the null result is a design artifact—not an answer.

Only after you've ruled out underpowering does it make sense to interrogate whether the hypothesis had a behavioral mechanism behind it and whether the primary metric was actually connected to the outcome you cared about.

The decision matrix: when to rerun, when to ship, and when to move on

A wide confidence interval straddling zero points to one response: rerun with a proper sample size, because the test never had the precision to answer the question. When the interval is narrow and centered near zero—excluding effects that would matter to the business—you have your answer: make the shipping call based on cost and complexity rather than the absence of a winner.

Segment-level signals that survived the aggregate represent a third scenario entirely, and they belong in a follow-up test designed for that segment, not in a shipping decision based on the exploratory cut. These three scenarios are distinct, and collapsing them into a single "inconclusive" bucket is how teams waste months going in circles.

Null results compound—but only if you capture them

The compounding value of an experimentation program isn't in the wins—it's in the institutional memory. A null result that gets documented with a clear hypothesis, a clean power assessment, and a recommended next test is worth more to your team six months from now than a win that gets shipped and forgotten.

A structured experiment repository exists precisely because that memory is easy to lose and hard to rebuild. The teams that get sharper over time aren't running more tests—they're learning more from each one.

This article is meant to be genuinely useful the next time you're staring at a flat result and trying to figure out what to do with it. If it helped you see that result differently, that's the point.

What to do next: Pull up the last test your team called inconclusive. Check the actual sample size against what was required for 80% power at a realistic MDE. If it fell short, the hypothesis isn't dead; the design was. If the power was adequate and the confidence interval was narrow around zero, the result is real: interrogate the hypothesis and make a cost-based shipping decision. If the power was nominally adequate but the confidence interval was still wide around zero, that's the third path: the test reached its planned sample but still couldn't narrow down the effect. That's a signal to redesign the experiment with a larger required sample, a more sensitive metric, or variance reduction applied before you rerun, and to record the required sample size for the next attempt. In every case, document what you found and attach a recommended next test before you close the experiment. That single habit, applied consistently, is what separates programs that compound from programs that s
