What Are Confidence Levels in Statistical Analysis

Picking 95% as your confidence level because that's what everyone does is the statistical equivalent of copying someone else's homework — you get an answer, but you don't know if it fits your situation.

For engineers, PMs, and data teams running A/B tests, confidence levels in statistics aren't just a number to plug in before hitting "run." They're a decision about how much risk of being wrong you're willing to accept, and that decision should change based on what you're testing and what it costs if you're wrong.

This article is for anyone who ships experiments and wants to stop treating confidence levels as a formality. Here's what you'll learn:

- What a confidence level actually means — and why the most common interpretation is wrong

- Why 95% became the default and why that history should make you skeptical of it

- How to match your confidence threshold to the stakes of the decision you're making

- How confidence level, sample size, statistical power, and minimum detectable effect are all connected

- When frequentist confidence intervals fall short — and what Bayesian approaches offer instead

We'll move through each of these in order, starting with the foundational misconception that causes the most downstream damage. By the end, you'll have a clear framework for treating confidence level selection as the risk tolerance decision it actually is — not a math problem with one right answer.

What a confidence level actually tells you (and what it doesn't)

Most people who work with A/B test results have read a confidence interval and thought something like: "We got a 95% confidence interval of [2.1%, 4.3%], so there's a 95% chance the true effect is in that range." That interpretation feels intuitive.

It is also wrong — and the gap between what confidence levels in statistics actually mean and how teams use them in practice is wide enough to drive real decisions off a cliff.

The misconception: "95% chance the true value is in this interval"

The most common misreading of a confidence interval treats it as a probability statement about a single, already-calculated result. Once you've run your experiment and computed the interval, the thinking goes, there's a 95% probability the true parameter sits inside it.

But that's not what the number means. As Statsig's documentation puts it directly: "It's important to note that this doesn't mean there's a 95% chance the current interval contains the true parameter; rather, it's about the long-term frequency of capturing the true parameter across repeated experiments."

Here's why the intuitive reading breaks down: once you've calculated a specific interval from your data, the true parameter is a fixed value. It either falls inside your interval or it doesn't. There's no probability left to assign — the outcome is already determined, even if you don't know what it is.

Saying there's a "95% chance" the true value is in a specific interval you've already computed is like flipping a coin, covering it, and saying there's a 50% chance it's heads. The coin has already landed.

The correct interpretation: a property of the method, not the result

The 95% refers to the procedure that generated the interval, not to any particular interval it produces. Wikipedia's explanation of the frequentist approach treats the true population mean as a fixed unknown constant, while the confidence interval is calculated using data from a random sample. Because the sample is random, the interval endpoints are random variables.

The interval moves. The parameter doesn't. That's the key.

A useful way to hold this: imagine casting a fishing net 100 times into a lake where a fish is sitting in a fixed location. A 95% confidence level means your net is designed so that 95 out of 100 casts will capture the fish. But on any single cast, you either caught it or you didn't — and you can't calculate the probability of that specific cast after the fact.

The 95% describes how reliable your net is across many uses, not how likely it is that this particular cast succeeded.

A simpler way to see this: imagine your experiment produced a confidence interval so wide it covered every plausible effect from -50% to +50%. You'd technically have a "95% confidence interval" — but it would tell you nothing useful. The 95% describes the reliability of your method, not the quality of any particular result it produces.
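One way to make the "property of the method" idea concrete is to simulate it. The sketch below is an illustration only: it assumes a made-up true effect (`true_mean`), normally distributed noise, and a normal-approximation interval, then counts how often the interval captures the fixed true value across many repeated experiments.

```python
import random
from statistics import NormalDist


def coverage(true_mean=0.03, sigma=0.1, n=100, trials=2000, level=0.95, seed=0):
    """Fraction of repeated experiments whose interval contains true_mean."""
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% level
    hits = 0
    for _ in range(trials):
        # Each trial is a fresh experiment: new random sample, new interval.
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        m = sum(sample) / n
        var = sum((x - m) ** 2 for x in sample) / (n - 1)
        half_width = z * (var / n) ** 0.5
        # The parameter never moves; only the interval does.
        if m - half_width <= true_mean <= m + half_width:
            hits += 1
    return hits / trials


print(f"share of intervals that captured the true value: {coverage():.3f}")
```

The printed share should land close to 0.95. No single interval in the loop carries a 95% probability of its own; each one either contains `true_mean` or it doesn't.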

The frequentist framework cannot answer the question stakeholders are actually asking

If your team reads a confidence interval as "95% chance we're right about this specific experiment," you will systematically overstate certainty in individual readouts. That overconfidence compounds when results get communicated to stakeholders who will make irreversible decisions — a pricing change, a feature rollout, a deprecation — based on a single experiment's output.

There's also a structural problem the frequentist framework can't solve: it cannot answer the question most decision-makers actually want answered. "What is the probability this variant is better?" is not a question a frequentist confidence interval can address. The framework doesn't permit probability statements about fixed parameters.

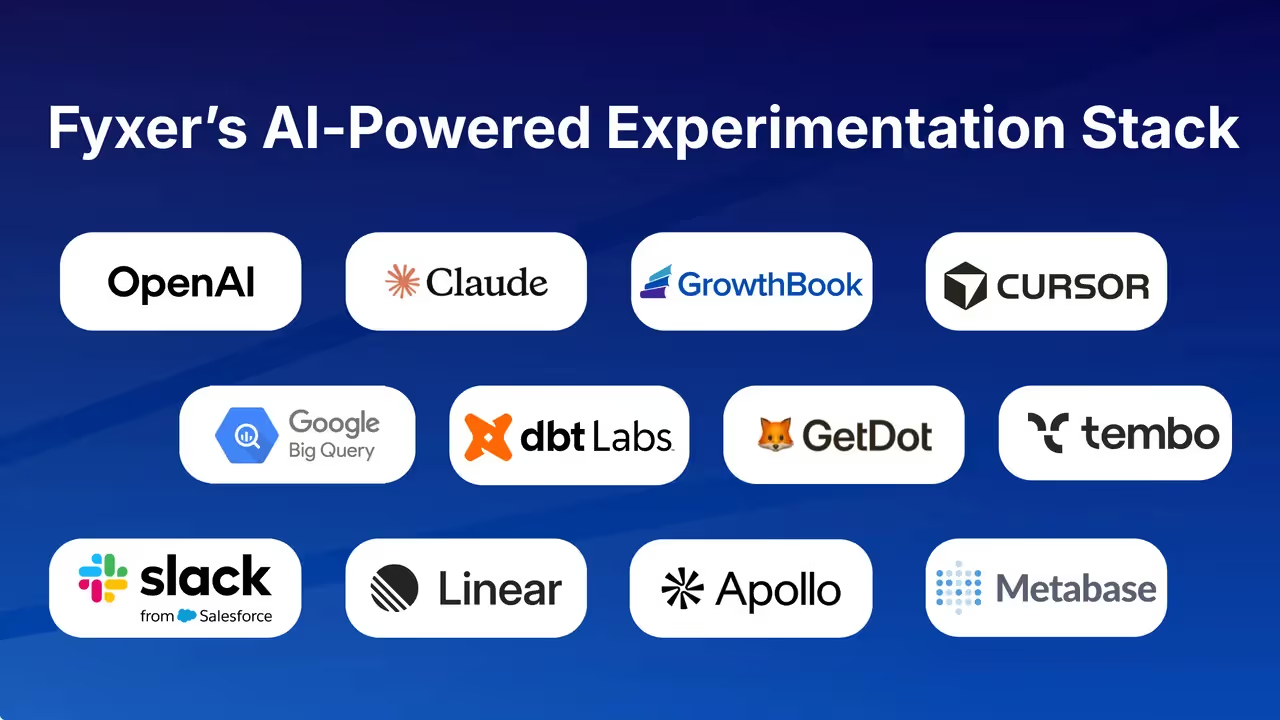

This is precisely why some experimentation platforms have moved toward Bayesian outputs. GrowthBook, for example, defaults to Bayesian statistics because they "provide a more intuitive framework for decision making for most customers," and surfaces a metric called "Chance to Win" — defined simply as the probability that a variation is better.

That's the question stakeholders are actually asking. A frequentist confidence interval, correctly interpreted, cannot give them that answer. Understanding why is what makes the rest of the decisions around confidence levels — what threshold to use, when to deviate from 95%, how to communicate uncertainty — possible to reason about clearly.

Why 95% became the default confidence level — and why that's a problem

There is a version of this conversation where 95% is a perfectly defensible starting point. It's a reasonable middle ground between being too credulous and too skeptical, it's been used long enough that results are comparable across studies, and it maps cleanly to a 1-in-20 false positive rate that most people can intuitively grasp. That version of the argument exists, and it deserves to be taken seriously before being challenged.

But here's what that argument doesn't say: that 95% was derived from your business context, your risk tolerance, or any principled analysis of what a false positive costs your team. It wasn't. It's a historical artifact, and treating it as a universal law is a form of intellectual laziness with real organizational consequences.

Where the 95% threshold actually came from

The 95% confidence interval has been the standard since nearly the beginning of modern statistics — almost a century ago. It emerged from scientific research contexts where experiments were expensive, replication was slow, and false positives carried serious reputational costs.

The threshold was designed for a world where being wrong meant publishing a bad paper, not shipping a slightly suboptimal button color.

What's striking is that even its defenders acknowledge the threshold is arbitrary. Tim Chan, Head of Data at Statsig, writes that 95% is "deemed arbitrary (absolutely true)" — a remarkable concession from someone arguing in favor of the default. The arbitrariness isn't a bug that was later fixed. It's baked in. The number was a pragmatic convention that calcified into a rule.

This matters because the 95% threshold corresponds to α = 0.05: a 1-in-20 chance of a false positive under the null hypothesis. That rate was chosen for scientific publishing, not for product teams running dozens of experiments a quarter. When you apply it unchanged to a completely different decision environment, you're not being rigorous — you're borrowing someone else's risk tolerance without checking whether it fits.

The same threshold fails in both directions

One of the more honest observations about the 95% default is that it's simultaneously criticized as too conservative and too permissive — depending on who you ask and what they're testing. That's not a sign of a well-calibrated standard. It's a sign that the standard is context-blind.

For a team testing a high-stakes pricing change, 95% may be dangerously permissive. A 1-in-20 false positive rate means that if you run 20 such experiments on changes that have no real effect, you should expect about one of them to cross the threshold anyway, and a major pricing decision to be made on noise. For a team iterating rapidly on low-stakes UI changes, 95% may be needlessly conservative, causing them to discard real improvements because they didn't hit an arbitrary threshold designed for peer-reviewed science.

The prior plausibility of the hypothesis compounds this problem. A 95% threshold applied to a well-supported, theoretically grounded hypothesis is doing different work than the same threshold applied to a speculative, counterintuitive claim. The number looks the same on the output, but the implied reliability of the result is not. Binary threshold thinking obscures this entirely.

The organizational risk of defaulting to convention

When teams treat 95% as a rule rather than a choice, they stop asking the question that actually matters: what is the cost of a false positive in this specific decision? That question has a different answer for every experiment, and the answer should drive the threshold — not the other way around.

The practical consequence is that organizations end up with a false positive rate that nobody consciously chose. GrowthBook's experimentation platform tracks win rates and experiment frequency across an organization — a capability that only makes sense if false positive accumulation is a recognized operational risk. If 95% were always the right threshold, there would be no need to monitor whether your win rate looks suspiciously high.

The 95% default isn't wrong in the way that a calculation error is wrong. It's wrong the way a borrowed assumption is wrong — it might fit, but you haven't checked. The right response isn't to abandon the threshold; it's to own the choice. Decide what a false positive costs you, decide how often you can afford to be wrong, and set your confidence level from there. That's not a statistics problem. It's a decision problem.

Confidence levels in statistics are a risk tolerance decision, not a math problem

Most teams treat confidence level selection as a formality — you pick 95% because that's what everyone picks, run the experiment, and check whether the result crosses the threshold. But that framing gets the decision exactly backwards.

Choosing a confidence level is not a statistical ritual. It's a business decision about how much risk of being wrong your team is willing to accept, and the right answer depends entirely on what you're deciding and what it costs if you're wrong.

What a false positive actually costs you

A false positive (what statisticians call a Type I error) happens when your experiment tells you a variant won, but the effect wasn't real. The result crossed your threshold by chance, not because the change actually works. At 95% confidence, you're accepting a 5% probability of this happening on any test where the change truly has no effect. At 90%, you're accepting 10%.

That 5% sounds small until you think about it at scale. A team running 20 experiments per year at 95% confidence should expect roughly one false "win" per year if none of the underlying changes has a real effect, even if every experiment is designed and executed perfectly. That's not a theoretical edge case. That's about one bad decision per year baked into your process by design.

The question is not whether false positives will happen. They will. The question is what they cost when they do.
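The arithmetic behind that claim is short enough to check directly. This is a minimal sketch that assumes the tests are independent and that none of the changes has a real effect; the function names are illustrative.

```python
def expected_false_positives(n_tests: int, alpha: float) -> float:
    """Average number of null experiments that cross the threshold by chance."""
    return n_tests * alpha


def prob_at_least_one_false_positive(n_tests: int, alpha: float) -> float:
    """Chance that one or more of n independent null tests looks like a win."""
    return 1 - (1 - alpha) ** n_tests


for confidence in (0.90, 0.95, 0.99):
    alpha = 1 - confidence
    print(
        f"{confidence:.0%} confidence, 20 tests: "
        f"expected false wins = {expected_false_positives(20, alpha):.2f}, "
        f"P(at least one) = {prob_at_least_one_false_positive(20, alpha):.2f}"
    )
```

At 95% confidence the expected count is one, and the chance of seeing at least one false win across the 20 tests is roughly 64%.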

Not all false positives are equal

A false positive on a button color test is nearly harmless. You ship a change that doesn't actually move the needle, you notice nothing improved, and you move on. The cost is a few engineering hours and a missed opportunity.

A false positive on a pricing change is a different category of problem entirely. You restructure how you charge customers based on experiment results that were noise. Revenue shifts. User trust erodes. By the time you realize the effect wasn't real, the decision may be difficult or impossible to reverse cleanly.

Breeze Airways, working with GrowthBook, explicitly framed their experimentation program around avoiding exactly this kind of outcome — what they described as "do no harm" testing, designed to prevent costly mistakes from shipping based on false signals. The financial exposure from acting on a false positive in a high-stakes context isn't abstract; it's measurable.

The asymmetry is straightforward: the cost of a false positive scales with the irreversibility and financial magnitude of the decision. Your confidence threshold should scale with it too.

Matching confidence level to decision reversibility

The practical implication is that a single default threshold is the wrong tool for a team making decisions of varying stakes. A UI copy change and a subscription pricing overhaul should not be held to the same standard of evidence, because the consequences of being wrong are not remotely comparable.

Low-cost, easily reversible decisions — color tests, copy variations, minor layout changes — can tolerate lower confidence thresholds. If you're wrong, you revert. The cost of a false negative (missing a real improvement) may actually be higher than the cost of a false positive in these cases, which means being overly conservative has its own price.

The right confidence level depends on your research goals, sample size, and tolerance for risk, not on convention. Industry practitioners treat the choice between 90% and 95% as explicitly contextual, not universal.

High-stakes, hard-to-reverse decisions warrant the opposite approach. When the decision is difficult to unwind and the financial or user experience impact is significant, 95% or higher is justified — not because 95% is magic, but because the cost of the 5% error rate is now large enough to matter.

Reversibility and cost of error should drive your threshold, not convention

Before defaulting to 95%, ask two questions: How easily can this decision be reversed? And what is the realistic cost if the effect turns out to be noise?

High reversibility combined with low impact makes a lower threshold defensible. Low reversibility combined with high impact demands a higher one. Medium-stakes decisions land somewhere in the middle — 95% is a reasonable starting point, but only if you've explicitly acknowledged what that 5% error rate means in practice for your specific decision.

One important caveat: raising your confidence threshold is not free. Requiring stronger evidence means you need more data to reach a conclusion, which means longer runtimes or larger sample sizes. Adjusting the threshold without adjusting the experiment design doesn't make your results more reliable — it just makes them more inconclusive. That tradeoff is worth understanding before you reach for a higher number as a default form of caution.

How confidence levels interact with sample size, statistical power, and false positives

Confidence level is not a standalone dial you can turn up to get more certainty out of an experiment. It is mechanically entangled with statistical power, sample size, and minimum detectable effect — and adjusting one without recalibrating the others doesn't make your experiment more rigorous. It just breaks it in a different direction.

Teams running A/B tests tend to encounter two recurring failure modes: results that never reach significance, and results that declare winners too easily. Both get misdiagnosed as bad luck or insufficient traffic. The actual cause, more often, is a misconfigured relationship between these four variables.

Confidence level and sample size are not independent

When you raise your confidence threshold (say, from 95% to 99%) you are lowering the acceptable false positive rate. That sounds like a straightforward improvement. But it comes at the expense of statistical power, which is defined as the probability that a test will detect a real effect of a given size with a given number of users. With the same number of users, a stricter threshold means lower power.

If you tighten your significance threshold without increasing your sample size or extending your experiment runtime, you haven't gained certainty. You've just made it harder to reach a conclusion at all.

Think of it as a balancing act. Certainty doesn't appear from nowhere — it has to be earned through data. A higher confidence threshold demands more evidence before declaring a result significant. If you don't collect more data to supply that evidence, you're running a test that will return inconclusive results far more often — not because nothing is happening, but because you've raised the bar without giving the experiment the resources to clear it.
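To put a rough number on "raising the bar," the sketch below uses the standard normal-approximation sample size formula for comparing two conversion rates with a two-sided test. The baseline and treatment rates (10% and 11%) are made-up inputs, and a real platform's power calculator may use a different formula.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(p_control, p_treatment, confidence=0.95, power=0.80):
    """Approximate users needed per arm for a two-sided two-proportion z-test."""
    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # threshold for significance
    z_beta = NormalDist().inv_cdf(power)           # margin needed for power
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_treatment - p_control
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


n_95 = sample_size_per_arm(0.10, 0.11, confidence=0.95)
n_99 = sample_size_per_arm(0.10, 0.11, confidence=0.99)
print(f"95% confidence: {n_95} users per arm")
print(f"99% confidence: {n_99} users per arm ({n_99 / n_95:.2f}x)")
```

Under these assumptions, moving from 95% to 99% at 80% power requires roughly half again as many users per arm. If traffic is fixed, that translates directly into a longer runtime.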

What underpowered experiments look like in practice

A Type II error occurs when the data appears inconclusive even though a real effect exists — and these errors typically force teams to either collect more data or make a decision without sufficient evidence. Neither outcome is good.

This failure mode is more common than most teams realize. Industry data suggests that only about one-third of experiments produce genuine improvements, one-third show no effect, and one-third actually hurt the metrics they were intended to improve. If your team is seeing a win rate significantly lower than that already-modest baseline, underpowering is a plausible explanation. Real effects are going undetected because the experiments weren't designed to find them.

MDE: the variable that ties everything together

Minimum detectable effect (MDE) is the smallest difference between control and treatment that a test can reliably detect, given a specific combination of significance threshold, power, and sample size. All four variables are linked. Change one, and MDE shifts.

The practical implication is direct: if the true effect of your change is smaller than your MDE, the experiment cannot detect it — even if the effect is real. This is how teams end up with "inconclusive" results on experiments that are actually working. As GrowthBook's documentation states plainly: "If the expected effect size is smaller than the MDE, then the test may not be able to detect a significant difference between the groups, even if one exists."

Before running an experiment, the right question to ask is: what's the smallest effect that would actually be worth acting on? Once you have that number, you can work backward to determine whether your planned sample size and confidence level can realistically detect it. If they can't, you're not running a valid experiment — you're generating noise with extra steps.
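Working backward can be sketched in a few lines. The function below approximates the absolute MDE for a conversion-rate test from a baseline rate and a per-arm sample size, using the baseline variance for both arms. The inputs (10% baseline, 10,000 users per arm, a 0.5 point worthwhile effect) are hypothetical, and this is an approximation rather than any particular platform's calculation.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(baseline_rate, n_per_arm, confidence=0.95, power=0.80):
    """Approximate absolute MDE (in rate units) for a two-sided z-test."""
    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    standard_error = sqrt(2 * baseline_rate * (1 - baseline_rate) / n_per_arm)
    return (z_alpha + z_beta) * standard_error


worthwhile_effect = 0.005  # hypothetical: smallest lift worth acting on
mde = minimum_detectable_effect(baseline_rate=0.10, n_per_arm=10_000)
print(f"MDE: {mde:.4f} absolute ({mde / 0.10:.1%} relative to baseline)")
print("design can detect the worthwhile effect:", mde <= worthwhile_effect)
```

With these inputs the MDE comes out a little above one percentage point, so a half-point lift would usually go undetected. Because the MDE shrinks with the square root of the sample size, halving it takes about four times the users.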

The Type I / Type II tradeoff you can't avoid

Tightening your confidence threshold reduces false positives (Type I errors) but increases false negatives (Type II errors) when sample size stays fixed. You cannot minimize both simultaneously without collecting more data. This is a hard constraint, not a configuration problem.
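The tradeoff is easy to see numerically. This sketch approximates the power of a two-sided two-proportion z-test at a fixed sample size, ignoring the negligible chance of a significant result in the wrong direction. The rates and sample size are made-up inputs.

```python
from math import sqrt
from statistics import NormalDist


def approximate_power(p_control, p_treatment, n_per_arm, confidence=0.95):
    """Approximate probability of detecting the given true difference."""
    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    standard_error = sqrt(
        p_control * (1 - p_control) / n_per_arm
        + p_treatment * (1 - p_treatment) / n_per_arm
    )
    z_effect = abs(p_treatment - p_control) / standard_error
    return NormalDist().cdf(z_effect - z_alpha)


for confidence in (0.90, 0.95, 0.99):
    power = approximate_power(0.10, 0.11, n_per_arm=10_000, confidence=confidence)
    print(
        f"{confidence:.0%} confidence: Type I rate = {1 - confidence:.2f}, "
        f"power = {power:.2f}, Type II rate = {1 - power:.2f}"
    )
```

With the sample size held at 10,000 per arm, tightening from 95% to 99% drops power from about 64% to about 39% in this example. The false positive rate went down, and the false negative rate went up to pay for it.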

The tradeoff compounds when teams run many experiments at once. Consider 10 experiments, each with 2 variations and 10 metrics: together they produce 100 simultaneous statistical tests. Even if none of those experiments has any real effect, the sheer volume of tests makes false positives nearly inevitable.

Aggressively controlling for this — using statistical corrections that raise the bar for each individual test when many tests are run simultaneously — can completely undermine test power, pushing the problem in the opposite direction.
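Both halves of that problem show up in a few lines of arithmetic. The sketch below assumes 100 independent tests with no real effects and uses the Bonferroni correction as one example of a correction that raises the bar per test; other corrections exist.

```python
from statistics import NormalDist


def family_wise_error_rate(n_tests: int, alpha: float) -> float:
    """Chance of at least one false positive across independent null tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(n_tests: int, alpha: float) -> float:
    """Per-test threshold that keeps the family-wise rate at or below alpha."""
    return alpha / n_tests


n_tests, alpha = 100, 0.05
per_test = bonferroni_alpha(n_tests, alpha)

print(f"uncorrected chance of a false positive: {family_wise_error_rate(n_tests, alpha):.3f}")
print(f"Bonferroni per-test alpha: {per_test:.4f}")
print(f"corrected chance of a false positive: {family_wise_error_rate(n_tests, per_test):.3f}")
# The z-score each test must now clear, compared with about 1.96 uncorrected.
print(f"required |z| per test: {NormalDist().inv_cdf(1 - per_test / 2):.2f}")
```

Uncorrected, at least one false positive is all but certain. Corrected, each test has to clear a far higher bar, which is exactly where the lost power comes from.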

The practical resolution is to match your correction strategy to your context. Exploratory analysis, where you're generating hypotheses rather than confirming them, warrants a false discovery rate approach that tolerates some false positives in exchange for sensitivity. High-stakes confirmatory decisions warrant stricter error rate control, accepting that you'll miss some real effects to avoid acting on noise. Neither approach is universally correct. The choice depends on what kind of mistake is more costly — which brings the question back to risk tolerance, not statistics.
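As a small illustration of the two strategies, the sketch below compares Bonferroni (strict family-wise control) with the Benjamini-Hochberg procedure (false discovery rate control) on a hypothetical set of ten p-values. The p-values are invented for the example, and these two methods are chosen as common representatives of each approach, not as the only options.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject only p-values below alpha divided by the number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values, q=0.05):
    """Reject the k smallest p-values, where k is the largest rank
    whose p-value is at or below (rank / m) * q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank / m * q:
            cutoff_rank = rank
    rejected = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[i] = True
    return rejected


# Hypothetical p-values from ten metrics in one readout.
p_values = [0.001, 0.008, 0.012, 0.019, 0.300, 0.450, 0.600, 0.700, 0.850, 0.950]

print("Bonferroni flags:", sum(bonferroni(p_values)))
print("Benjamini-Hochberg flags:", sum(benjamini_hochberg(p_values)))
```

On this input the stricter correction flags one metric and the false discovery rate approach flags four. Which list you would rather act on depends on whether a missed effect or a false alarm costs more.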

When frequentist confidence intervals aren't enough: the case for probabilistic thinking

There's a question every product manager asks after an A/B test wraps up: "What's the probability this variant is actually better?" It's a reasonable question. It's also one that a frequentist confidence interval structurally cannot answer — not because the math is wrong, but because it's answering a different question entirely.

What frequentist confidence intervals can and cannot tell you

When a stakeholder looks at your results and asks "so there's a 95% chance the new design is better?", the honest frequentist answer is: that's not what this number means. The interval doesn't tell you the probability the variant is better. It tells you something about the reliability of your estimation procedure across hypothetical repeated experiments — a concept that is genuinely difficult to communicate in a Monday morning product review.

Practitioners in the statistics community have been wrestling with this mismatch for decades. A recurring theme in discussions among working statisticians is that frequentist outputs aren't wrong — they're just routinely misapplied by non-statisticians who read them as probability statements.

The framework was built for a research context where the goal is controlling long-run error rates, not for a product context where the goal is making a decision about a specific variant right now.

How Bayesian credible intervals reframe the question

A 95% Bayesian credible interval means there is a 95% probability, given your data and your prior beliefs about likely effect sizes, that the true parameter falls within that range. That's the statement product teams want.

More directly: the Bayesian framework allows statements like "there's a 73% chance this new button produces a positive effect" — a direct probability statement about a specific outcome that a frequentist interval cannot produce. As GrowthBook's statistics documentation notes explicitly, "there is no direct analog in a frequentist framework" for that kind of statement.

One concept worth understanding briefly: Bayesian analysis starts with a baseline assumption about how large effects typically are before the experiment runs. Think of it as a starting belief that gets updated as data comes in. With a large enough sample, this starting belief becomes irrelevant — the data overwhelms it. With a small sample, it matters more. Most experimentation platforms set this starting belief to "neutral" by default, meaning: we're not assuming anything about what effects look like until your data tells us.
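The way data overwhelms the starting belief can be shown with a deliberately simple model. This sketch puts a Beta prior on a single conversion rate, which is a simplification: experimentation platforms typically place the prior on the effect size instead, and the prior parameters below are invented for illustration.

```python
def posterior_mean(prior_a, prior_b, conversions, visitors):
    """Posterior mean of a conversion rate under a Beta(prior_a, prior_b) prior."""
    return (prior_a + conversions) / (prior_a + prior_b + visitors)


flat_prior = (1, 1)       # no opinion about the rate
skeptical_prior = (2, 18)  # hypothetical belief that the rate is near 10%

# The observed rate is 30% in both samples; only the amount of data differs.
for conversions, visitors in ((6, 20), (6000, 20000)):
    flat = posterior_mean(*flat_prior, conversions, visitors)
    skeptical = posterior_mean(*skeptical_prior, conversions, visitors)
    print(
        f"n = {visitors}: flat prior -> {flat:.4f}, "
        f"skeptical prior -> {skeptical:.4f}, gap = {abs(flat - skeptical):.4f}"
    )
```

With 20 visitors the two priors disagree by more than ten percentage points. With 20,000 visitors the disagreement is a rounding error.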

Practical Bayesian outputs in experimentation platforms

The gap between what frequentist intervals communicate and what decision-makers need has pushed several experimentation platforms toward Bayesian-first defaults. GrowthBook, for example, defaults to Bayesian statistics specifically because, in their words, it provides "a more intuitive framework for decision making."

The primary output is Chance to Win — a direct probability that a given variation is better than the control. The typical decision threshold is 95%, meaning you wait until there's a 95% probability the variant is genuinely an improvement (or 5% if you're watching for harm). This is the number a product manager can actually act on without needing to translate statistical jargon into a business decision.
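To show what a probability like this represents, here is a minimal Monte Carlo sketch for a conversion metric. It assumes flat Beta(1, 1) priors and made-up counts, and it is not GrowthBook's actual implementation; it only illustrates the general idea of asking how often the variation beats the control across draws from each posterior.

```python
import random


def chance_to_win(conv_control, n_control, conv_variant, n_variant,
                  draws=20_000, seed=0):
    """Estimate P(variant rate > control rate) from Beta posteriors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each arm: Beta(1 + successes, 1 + failures).
        control = rng.betavariate(1 + conv_control, 1 + n_control - conv_control)
        variant = rng.betavariate(1 + conv_variant, 1 + n_variant - conv_variant)
        if variant > control:
            wins += 1
    return wins / draws


# Hypothetical readout: 100/1000 conversions for control, 120/1000 for the variant.
probability = chance_to_win(100, 1000, 120, 1000)
print(f"estimated chance the variant is better: {probability:.1%}")
```

For these counts the estimate falls short of a 95% decision threshold, so under that rule the experiment would keep running.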

GrowthBook also surfaces relative uplift as a full probability distribution rather than a fixed interval. As their documentation puts it, this "tends to lead to more accurate interpretations" — instead of reading a result as simply "it's 17% better," teams naturally factor in the uncertainty ("it's about 17% better, but there's a lot of uncertainty still").

Most modern experimentation platforms support both Bayesian and frequentist engines, and the right choice depends on your team's statistical fluency, your organization's existing conventions, and how you need to communicate results to stakeholders.

Choosing a confidence level is a risk tolerance decision that belongs to each experiment

The preceding sections have built toward a single practical conclusion: confidence level selection is not a statistical formality. It's a risk management decision that should be made deliberately, per experiment, based on what you're testing and what it costs to be wrong. Here's how to put that into practice.

Match your confidence threshold to the stakes and reversibility of the decision

Before setting a threshold, characterize the decision you're making. Ask two questions: How easily can this change be reversed if the result turns out to be noise? And what is the realistic financial or user experience cost of acting on a false positive?

Low-stakes, easily reversible changes — UI copy, button colors, minor layout adjustments — can tolerate 90% confidence or lower. The cost of a false positive is minimal, and being overly conservative means missing real improvements. High-stakes, hard-to-reverse decisions — pricing changes, major onboarding flows, subscription model restructuring — warrant 95% or higher. The cost of acting on noise in these contexts is large enough that the stricter threshold is justified.

Medium-stakes decisions fall in between. Use 95% as a starting point, but explicitly acknowledge what the 5% error rate means for that specific decision rather than treating the threshold as a default you never questioned.
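Some teams find it useful to write the rule down so it gets applied consistently. The helper below is a hypothetical sketch of the mapping described above: the function name, the two yes/no inputs, and the 0.99 value for the high-stakes case (one reading of "95% or higher") are all assumptions to adapt to your own context.

```python
def suggest_confidence_level(easily_reversible: bool, high_impact: bool) -> float:
    """Map decision stakes to a starting confidence threshold (illustrative)."""
    if easily_reversible and not high_impact:
        return 0.90  # low stakes: being wrong is cheap, being slow is not
    if not easily_reversible and high_impact:
        return 0.99  # high stakes: assumed value for "95% or higher"
    return 0.95      # medium stakes: a starting point, chosen on purpose


print("button color test:", suggest_confidence_level(True, False))
print("pricing overhaul:", suggest_confidence_level(False, True))
print("onboarding tweak:", suggest_confidence_level(True, True))
```

The output is a starting point for discussion, not a verdict. The sample size and power checks still have to confirm the experiment can reach whatever threshold comes out.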

Audit your experiment setup: sample size, power, and MDE before you set a threshold

Choosing a confidence level in isolation is incomplete. Before running any experiment, verify that your planned sample size and runtime can actually detect the smallest effect worth acting on at your chosen threshold.

Work through these checks before launch:

- Define your minimum detectable effect — the smallest improvement that would actually change your decision

- Confirm your sample size is sufficient to detect that effect at your chosen confidence level and power target (typically 80%)

- If the numbers don't work, either extend the runtime, reduce the confidence threshold, or accept that the experiment cannot answer the question you're asking

Raising your confidence threshold without adjusting sample size doesn't make your results more reliable. It makes them more inconclusive. The rigor has to come from the experiment design, not just the threshold.

Consider Bayesian metrics alongside frequentist confidence levels for clearer decisions

If your team regularly struggles to communicate experiment results to non-technical stakeholders, or if you find yourself in situations where "statistically significant" doesn't translate cleanly into a ship/no-ship decision, Bayesian outputs are worth evaluating alongside your frequentist confidence intervals.

Chance to Win gives decision-makers a direct probability they can act on. Credible intervals answer the question stakeholders are actually asking. Neither replaces rigorous experiment design — but they do reduce the translation layer between statistical output and business decision.

Start with your last three experiment results. For each one, ask whether the confidence level you used matched the reversibility and cost of that specific decision. If it didn't — if you used 95% on a low-stakes UI test or 90% on a pricing change — you now have the framework to recalibrate. Confidence levels in statistics are a choice. Make it deliberately.
