P-Value Best Practices for A/B Testing

A green dashboard and a p-value below 0.05 feel like permission to ship. For a lot of teams, that's where the analysis stops — and that's exactly where the problems start. The p-value is one of the most useful tools in A/B testing, but it's also one of the most misread. Used without the right context, it doesn't just fail to protect you from bad decisions — it actively enables them.

This guide is for engineers, PMs, and analysts who run A/B tests and want to stop making decisions on a foundation they haven't fully inspected. Whether you're new to experimentation or you've been reading dashboards for years, the failure modes covered here are common enough to affect most teams. Here's what you'll learn:

- What a p-value actually measures — and the four things it cannot tell you

- The most dangerous misconceptions, including why p < 0.05 is not a shipping decision

- How peeking at results and p-hacking silently inflate your false positive rate

- Why tracking many metrics at once breaks your significance threshold

- How to build a complete decision framework where the p-value is one input, not the verdict

The article moves in that order — from the correct definition, through the most common failure modes, and into the practical framework that makes p-value best practices actually stick in a real product environment.

What a p-value actually measures (and what it doesn't)

Statistical significance is "the most misunderstood and misused statistical tool in internet marketing, conversion optimization, and user testing" — and that's not a fringe opinion. It's the assessment of practitioners who work with these numbers daily.

If you're shipping features based on p-values without a precise understanding of what they actually represent, you're making decisions on a foundation you haven't fully inspected. Before getting into the failure modes, it's worth building that foundation correctly.

The formal definition — what the number actually represents

The cleanest version of the definition comes from statistician Andrew Vickers: "the probability that the data would be at least as extreme as those observed, if the null hypothesis were true."

Read that slowly, because the conditional clause at the end is doing most of the work. A p-value is not a measure of how likely your result is to be correct. It is not the probability that your variant actually outperforms control. It is a conditional probability — one that assumes no real effect exists and then asks: given that assumption, how surprising is the data we collected?

A concrete illustration helps. Imagine you're checking whether a child brushed their teeth. You find their toothbrush is dry. The p-value is the probability of finding a dry toothbrush if the child had actually brushed. A low probability doesn't prove the child didn't brush — but it does make that claim harder to sustain. The data is inconsistent with the null. That's all the p-value tells you.

In an A/B testing context, GrowthBook's documentation frames it this way: the p-value is the probability of observing a difference as extreme or more extreme than your actual measured difference, given there is actually no difference between groups. A p-value below your significance threshold means the observed result would be unusual in a world where your variant had no effect — not that the variant definitely works.
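Here is a minimal sketch of a two-sided, two-proportion z-test that uses only Python's standard library. The conversion counts are hypothetical. The test relies on a normal approximation, which is reasonable at these sample sizes, and your experimentation platform may use a different test. The value it returns is the tail probability described above: how often a difference at least this large, in either direction, would appear if both arms shared one true conversion rate.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for H0: both arms share the same conversion rate."""
    # Under the null hypothesis, pool both arms to estimate the shared rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    # Probability, under the null, of a z-score at least as extreme as the one
    # observed in either direction: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical test: 200/1,000 conversions in control, 240/1,000 in the variant.
p = two_proportion_p_value(200, 1000, 240, 1000)
print(f"p = {p:.3f}")
```

For these counts the p-value comes out at roughly 0.03. That means the data would be fairly surprising if the variant had no effect. It does not mean there is a 97% chance the variant works.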

The null hypothesis — the assumption the p-value is built on

Every p-value is computed against a null hypothesis. In A/B testing, that null hypothesis is typically the assumption that variant B produces no different outcome than variant A — that any observed difference is attributable to chance.

When a p-value falls below a predetermined alpha level (commonly 0.05), you reject the null hypothesis. But rejecting the null is not the same as proving the alternative. It means the data is sufficiently inconsistent with a world where no effect exists. That's a meaningful signal, but it's a narrower claim than most dashboards imply.

The alpha threshold is your pre-agreed tolerance for being wrong in a specific way: concluding that your variant works when it actually doesn't. Set alpha at 0.05 and you're saying: "I'm willing to accept a 5% chance of a false alarm." That tolerance is fixed before the test runs — not adjusted based on what the data shows. This matters because peeking and multiple testing, covered later, both work by effectively running the test multiple times, which erodes the 5% guarantee you thought you had.

What a p-value cannot tell you

This is where most practitioners go wrong, and the errors have real business consequences.

A p-value cannot tell you the probability that the null hypothesis is true. This is the most common inversion: reading p = 0.03 as "there's only a 3% chance this result is a fluke." That's not what it means. It means that if there were no effect, you'd see data at least this extreme only 3% of the time. The conditional runs in one direction only.
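A short worked calculation shows why the inversion matters. The chance that a significant result is a false alarm depends on how many of your ideas actually work, and the p-value never sees that number. For illustration only, the sketch below assumes that 10% of tested ideas have a real effect and that tests run at 80% power with α = 0.05. These inputs are hypothetical, not measured.

```python
def share_of_significant_results_that_are_false(
    prior_true: float, power: float, alpha: float
) -> float:
    """Fraction of significant results that come from ideas with no real effect."""
    # Ideas with no effect still cross the threshold at rate alpha.
    false_positives = (1 - prior_true) * alpha
    # Ideas with a real effect cross it at a rate equal to the test's power.
    true_positives = prior_true * power
    return false_positives / (false_positives + true_positives)


# Hypothetical program: 10% of ideas work, 80% power, alpha = 0.05.
share = share_of_significant_results_that_are_false(0.10, 0.80, 0.05)
print(f"{share:.0%} of 'winners' are false positives")
```

Under these assumptions, about 36% of the results that clear p < 0.05 come from variants with no real effect. That is far more than the 5% a naive reading of the threshold suggests.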

Nor does it tell you whether the effect is large enough to matter. A p-value of 0.001 on a 0.01% conversion lift is statistically significant. It is almost certainly not worth shipping. Effect size and confidence intervals are required to answer the question of practical significance — and as GrowthBook's documentation explicitly notes, "p-value alone cannot determine the importance or practical significance of the findings."

Causal inference is equally outside its scope. In a properly randomized A/B test, causal claims come from the experimental design — the randomization itself. The p-value is a statement about the data under an assumption, not a causal claim.

And replication is not something it can predict. It describes this dataset under this null hypothesis. It makes no forward-looking guarantee about what you'd observe if you ran the experiment again.

These are not edge cases or academic caveats. They are the exact misreadings that lead teams to ship features that don't move the needle, or to kill variants that actually work. Getting the definition right is the first step to avoiding them.

The most dangerous misconceptions about p-values in A/B testing

The scenario plays out constantly across product teams: a variant shows a 15% conversion lift with p < 0.05 after two days of running. The dashboard turns green. Someone ships it to 100% of users. Three weeks later, conversions are flat.

What went wrong wasn't the math — it was the interpretation. P-values are precise instruments that become dangerous when misread, and the misreadings that cause the most damage aren't exotic edge cases. They're the default assumptions baked into how most teams use experimentation dashboards.

The p < 0.05 threshold is not a shipping decision

The most pervasive misconception is treating p < 0.05 as a binary pass/fail gate — a green light that certifies a result as real and worth acting on. It isn't. The 0.05 threshold is a pre-agreed tolerance for false positives, chosen by convention in the early twentieth century and inherited by modern software without much scrutiny. Crossing it means the data would be unlikely if there were truly no effect — it does not mean the effect is real, stable, or large enough to matter.

When teams treat the threshold as a shipping decision, they're outsourcing judgment to a single number that was never designed to carry that weight. The result is exactly what the opening scenario describes: a "significant" result that evaporates in production, because the test was underpowered, run too briefly, or simply caught a random fluctuation that cleared the bar.

Statistical significance is not business significance

These two concepts are orthogonal, and conflating them is where resources get misallocated at scale. A p-value of 0.001 tells you the observed data would be extremely rare under the null hypothesis. It tells you nothing about whether the underlying effect is large enough to justify shipping, staffing, or continued investment.

Consider a hypothetical: a test reaches p < 0.001 on a 0.01% conversion lift. That result is statistically significant. It is also commercially irrelevant — the lift is too small to move revenue in any meaningful way, and the engineering cost to maintain the variant almost certainly exceeds the return. GrowthBook's own documentation states this directly: "p-value alone cannot determine the importance or practical significance of the findings." The business question — does this effect matter enough to act on? — requires a separate answer that the p-value cannot provide.

Effect size and confidence intervals are required co-factors, not optional context

A p-value stripped of effect size is an incomplete measurement. It tells you how surprising the data would be under the null; it says nothing about how big the effect actually is. The p-value also depends on sample size and variance. As a result, two experiments can return identical p-values while one shows a 2% lift and the other shows a 0.1% lift, and those are not equivalent results for any product decision.

Confidence intervals are the corrective. A narrow confidence interval around a meaningful effect size is a strong signal. A wide confidence interval that barely excludes zero is a weak one, even if the p-value clears the threshold. Effect size, sample size, and study design are required co-factors for interpreting any result — not optional context to review if you have time.
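A minimal sketch of an approximate 95% confidence interval (the Wald interval, one common choice among several) shows how sample size changes what a result tells you. The two tests below are hypothetical and share the same 4-point observed lift.

```python
import math


def diff_confidence_interval(conv_a, n_a, conv_b, n_b, z=1.96):
    """Approximate 95% Wald interval for the difference in conversion rates (B - A)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Unpooled standard error: the interval describes plausible effect sizes,
    # so it is not computed under the null hypothesis.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Two hypothetical tests with the same 4-point observed lift.
small = diff_confidence_interval(200, 1_000, 240, 1_000)
large = diff_confidence_interval(20_000, 100_000, 24_000, 100_000)
for name, (lo, hi) in [("n=1,000", small), ("n=100,000", large)]:
    print(f"{name}: [{lo:+.4f}, {hi:+.4f}]")
```

Both intervals exclude zero. The smaller test, however, is consistent with anything from well under half a point to nearly eight points of lift, while the larger test pins the effect near four points.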

Without pre-registration, any pattern in the data can be rationalized as the intended finding

Analysts without formal research training often skip pre-registration entirely — not out of bad faith, but because they don't know it's a norm, or they treat it as bureaucratic overhead that slows down shipping velocity. The consequence is subtle but serious: without a pre-registered hypothesis, any pattern that emerges in the data can be rationalized as the intended finding after the fact.

GrowthBook's documentation puts it plainly: "If you analyze the results of a test without a clear hypothesis or before setting up the experiment, you may be susceptible to finding patterns that are purely due to random variation." That susceptibility compounds over time. As Ron Kohavi's work on experimentation at scale has documented, the downstream effect is trust erosion — confidence in individual test results drops first, then confidence in the experimentation program itself weakens, making it harder to defend, fund, and scale.

Pre-registration is the structural safeguard against this failure mode. It forces the team to commit to what they're measuring, why, and what threshold constitutes a meaningful result — before the data exists to rationalize any particular answer.

How peeking at results and p-hacking inflate false positives in A/B tests

Most teams running A/B tests are not running the statistical process they think they are. They set up a test, watch the dashboard, stop early when results look promising, and explore different metrics until something crosses the significance threshold. Each of these behaviors feels like good product instinct. Statistically, each one is quietly destroying the validity of the test.

Peeking substitutes a different statistical process for the one your p-value was built for

Peeking is the practice of checking test results before the predetermined sample size or run duration has been reached — and then making decisions based on what you see. It's nearly universal. Dashboards are built to be checked. Stakeholders ask for updates. Engineers want to know if the new feature is working. The organizational pressure to peek is constant.

The problem is mechanical, not philosophical. Standard statistical tests (t-tests, chi-squared tests, Fisher's exact test) are designed around a fixed process: determine your sample size in advance, run the experiment, check the result once. Peeking substitutes a fundamentally different process for that one. The p-value your dashboard displays was calibrated for the fixed process. When you peek, you're reading a gauge that was built for a different machine.

The mechanics of false positive inflation

The damage peeking does is not subtle. A simulation illustrates the scale: with a 20% baseline conversion rate and a significance threshold of p < 0.10, checking results after every new sample (with a minimum of 200 samples) produces a combined false positive rate of 55% after 2,000 samples. That's more than five times the expected false positive rate of 10%. The threshold you set means almost nothing.

The compounding logic is straightforward: every additional look at accumulating data is effectively an additional hypothesis test. Each check carries its own probability of producing a spurious significant result, and those probabilities accumulate. As GrowthBook's documentation on experimentation problems puts it, "the more often the experiment is looked at, or 'peeked', the higher the false positive rates will be, meaning that the results are more likely to be significant by chance alone." The guarantee that your alpha level provides — that you'll see a false positive only 5% of the time — only holds if you look once, at the end, as planned.
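A minimal A/A simulation in Python shows the same effect. Both arms share a hypothetical 20% conversion rate, so every significant result is a false positive. The setup uses α = 0.05, 2,000 users per arm, and a look after every 100 users per arm. This is an assumption chosen for speed, and it differs from the simulation cited above, so its numbers will not match the 55% figure. Each run is analyzed twice: once at the planned end, and once by stopping at the first peek with p < 0.05.

```python
import math
import random


def p_value(conv_a, conv_b, n):
    """Two-sided pooled z-test p-value for two arms of equal size n."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = math.sqrt(pooled * (1 - pooled) * 2 / n)
    if se == 0:
        return 1.0
    z = (conv_b - conv_a) / n / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_aa_tests(n_sims=500, n_per_arm=2_000, look_every=100,
                      rate=0.20, alpha=0.05, seed=42):
    """False positive rates for one final look vs. stopping at the first significant peek.

    Both arms share the same true rate, so every significant result is a false positive.
    """
    rng = random.Random(seed)
    fixed_hits = peek_hits = 0
    for _ in range(n_sims):
        conv_a = conv_b = 0
        stopped_early = False
        for i in range(1, n_per_arm + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # Peeking: check at every scheduled look and "ship" on the first p < alpha.
            if not stopped_early and i % look_every == 0:
                stopped_early = p_value(conv_a, conv_b, i) < alpha
        peek_hits += stopped_early
        # Fixed horizon: one look, at the planned sample size, on the same data.
        fixed_hits += p_value(conv_a, conv_b, n_per_arm) < alpha
    return fixed_hits / n_sims, peek_hits / n_sims


fixed_rate, peek_rate = simulate_aa_tests()
print(f"single look: {fixed_rate:.1%}, peeking every 100 users: {peek_rate:.1%}")
```

Expect the single-look rate to land near the nominal 5% and the peeking rate to come out several times higher. The final look is also one of the peeks, so peeking can never produce fewer false positives than the single look. It can only add more.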

What p-hacking looks like in practice

P-hacking is the broader pattern of which peeking is one instance. GrowthBook's documentation defines it as what happens when analysts "explore different metrics, time periods, or subgroups until they find a statistically significant difference" — and critically, frames it as something that happens "either consciously or unconsciously." That framing matters. P-hacking is not primarily a story about bad actors manipulating data. It's a story about well-intentioned analysts working in environments that reward shipping.

The process mismatch is the same as with peeking: you calculate a p-value as if you tested one hypothesis, but you actually tested fifteen — different conversion metrics, different user segments, different time windows — and reported the one that crossed the threshold. The p-value was never designed for that process.

Practitioner communities have noted that this is endemic in tech. A 2018 Hacker News discussion of an SSRN paper on p-hacking in A/B testing surfaced commentary suggesting that the majority of winning A/B test results in industry may be illusory. One commenter said they'd "be shocked if it were as low as 57%." The organizational dynamics that enable this are well documented: analysts without formal experimental science training, fast-moving environments that reward speed over rigor, and a promotion cycle where the person who shipped the "winning" test is often long gone before the false positive surfaces.

Pre-registration as the primary defense

The three concrete defenses against peeking and p-hacking are pre-registering the hypothesis before the test begins, fixing your alpha level before the test, and committing to a predetermined run duration without deviation based on observed results. GrowthBook's documentation is direct on this: "it's important to use a predetermined sample size or duration for the experiment and stick to the plan without making any changes based on the observed results."

For teams that realistically cannot commit to a single look — because stakeholders demand continuous visibility, or because a badly broken variant needs to be caught early — sequential testing and Bayesian methods with custom priors are structural alternatives designed to account for multiple looks rather than prohibit them. Sequential testing is available as a native framework in modern experimentation platforms for this reason.

It's worth noting that Bayesian approaches are not a complete escape hatch. Bayesian statistics can also suffer from peeking depending on how decisions are made on the basis of Bayesian results. The method matters less than the discipline of deciding in advance what decision rule you'll follow and holding to it.

Why tracking many metrics simultaneously breaks your p-value threshold

Most product teams instrument their A/B tests with a dashboard full of metrics — conversion rate, revenue per user, session length, engagement depth, retention at day 7, and a dozen more. This feels like rigor. It isn't. When you test multiple metrics simultaneously against the same α = 0.05 threshold, you're not accepting a 5% false positive rate. You're accepting something far worse, and the math makes that uncomfortably concrete.

Twenty metrics, one experiment: how false positives compound at scale

If you track 20 independent metrics in a single experiment, the probability that at least one of them produces a spurious significant result by chance alone is approximately 64% — even when your treatment has no real effect on anything. Each individual test carries its own 5% false positive risk, and those risks compound across the full set of metrics you're evaluating.
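The arithmetic behind the 64% figure fits in a few lines. Like the figure itself, this sketch assumes that the metrics are independent and that the treatment has no effect on any of them.

```python
def familywise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """P(at least one false positive) across independent tests when no effect exists."""
    # Each test avoids a false positive with probability (1 - alpha);
    # independence lets those probabilities multiply.
    return 1 - (1 - alpha) ** num_tests


for m in (1, 5, 10, 20):
    print(f"{m:>2} metrics: {familywise_error_rate(m):.0%}")
```

The 20-metric row reproduces the roughly 64% figure. Even five metrics push the chance of at least one false alarm above 20%.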

Scale this to a real experimentation program and the problem becomes acute. Consider a team running 10 concurrent experiments, each comparing one variation against control on 10 metrics. That's 100 simultaneous hypothesis tests. Even with zero true effects anywhere in the system, you should expect about five of them (100 × 0.05) to cross p < 0.05 by chance alone. At that volume, your significance threshold becomes essentially decorative.

One important caveat: the 64% figure assumes metric independence, which is rarely true in digital products. Page views correlate with funnel starts. Registration events correlate with purchase events. When metrics are correlated, the theoretical worst case doesn't fully apply — but the false positive inflation remains real and material. The independence assumption failing in your favor doesn't make the problem go away.

Controlling the family-wise error rate when a single false positive has real consequences

The Family-Wise Error Rate (FWER) is the probability that at least one test in your analysis produces a false positive. Controlling FWER means you're holding the line on that probability across the entire family of tests — not just for each individual metric in isolation.

A standard method is Holm-Bonferroni. It sorts the p-values from smallest to largest and applies a progressively smaller multiplier: the smallest is multiplied by the total number of tests, the next by one fewer, and so on. It improves on the simpler Bonferroni method, which multiplies every p-value by the total test count. That correction is so severe that with 20 metrics, your effective threshold drops from p < 0.05 to p < 0.0025. At that level, you'd need a much larger sample to detect real effects, which in practice means many true improvements get missed. Holm-Bonferroni provides the same protection against false positives while being less likely to hide real ones.
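A minimal Python sketch of both corrections makes the difference concrete. It is written from the standard definitions rather than taken from any platform's implementation, and the four raw p-values are hypothetical.

```python
def bonferroni(p_values):
    """Multiply every p-value by the number of tests (capped at 1)."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


def holm(p_values):
    """Holm-Bonferroni step-down adjusted p-values, returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The smallest p-value gets the full multiplier m,
        # the next gets m - 1, and so on down to 1 for the largest.
        candidate = min(1.0, (m - rank) * p_values[i])
        # Enforce monotonicity: an adjusted p-value can't be smaller than
        # the adjusted value of any p-value ranked ahead of it.
        running_max = max(running_max, candidate)
        adjusted[i] = running_max
    return adjusted


# Hypothetical raw p-values for four metrics in one experiment.
raw = [0.01, 0.02, 0.03, 0.004]
print("Bonferroni:", [round(p, 3) for p in bonferroni(raw)])
print("Holm:      ", [round(p, 3) for p in holm(raw)])
```

At α = 0.05, Bonferroni keeps only two of the four metrics significant. Holm keeps all four while still controlling the family-wise error rate.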

FWER control is the right choice when a single false positive has real consequences — when you're making a high-stakes shipping decision and one spurious significant result could send you in the wrong direction. The trade-off is reduced power: you'll need larger sample sizes or longer run times to detect true effects at the same rate.

When exploratory analysis calls for tolerating some false positives: the FDR approach

FWER control asks: "What's the chance that even one of my significant results is wrong?" The False Discovery Rate (FDR) asks a different question: "Of all the results I'm calling significant, what fraction are probably wrong?" If FDR is controlled at 5% and 20 of your results come back significant, you'd expect, on average, only about one of those 20 to be a false positive. That's a more permissive standard, which means you'll catch more real effects. The trade-off is that you're knowingly accepting some noise in your findings.

The Benjamini-Hochberg procedure is the standard implementation. It's less strict than FWER control, which means it preserves more statistical power — you're more likely to detect true effects, at the cost of accepting that some fraction of your significant findings will be noise.
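The Benjamini-Hochberg adjustment is similarly short. This sketch follows the textbook step-up procedure and returns adjusted p-values (often called q-values) in the original order. The five raw p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values (q-values), returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value (rank m) down to the smallest (rank 1).
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        # Scale by m / rank: larger p-values get smaller multipliers.
        candidate = min(1.0, p_values[i] * m / rank)
        # Monotonicity: an adjusted value can't exceed that of a larger raw p-value.
        running_min = min(running_min, candidate)
        adjusted[i] = running_min
    return adjusted


# Hypothetical raw p-values for five metrics.
raw = [0.001, 0.012, 0.014, 0.03, 0.2]
q = benjamini_hochberg(raw)
print([round(x, 4) for x in q])
print("significant at FDR 5%:", [p for p, x in zip(raw, q) if x < 0.05])
```

Here Benjamini-Hochberg flags four of the five metrics. Holm-Bonferroni applied to the same p-values would flag only three, which is the power trade-off described above.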

This makes Benjamini-Hochberg the more appropriate choice for exploratory analysis, where you're scanning broadly for signals and can tolerate some false positives in exchange for not missing real ones. For confirmatory tests where you're deciding whether to ship, FWER control is the more defensible standard.

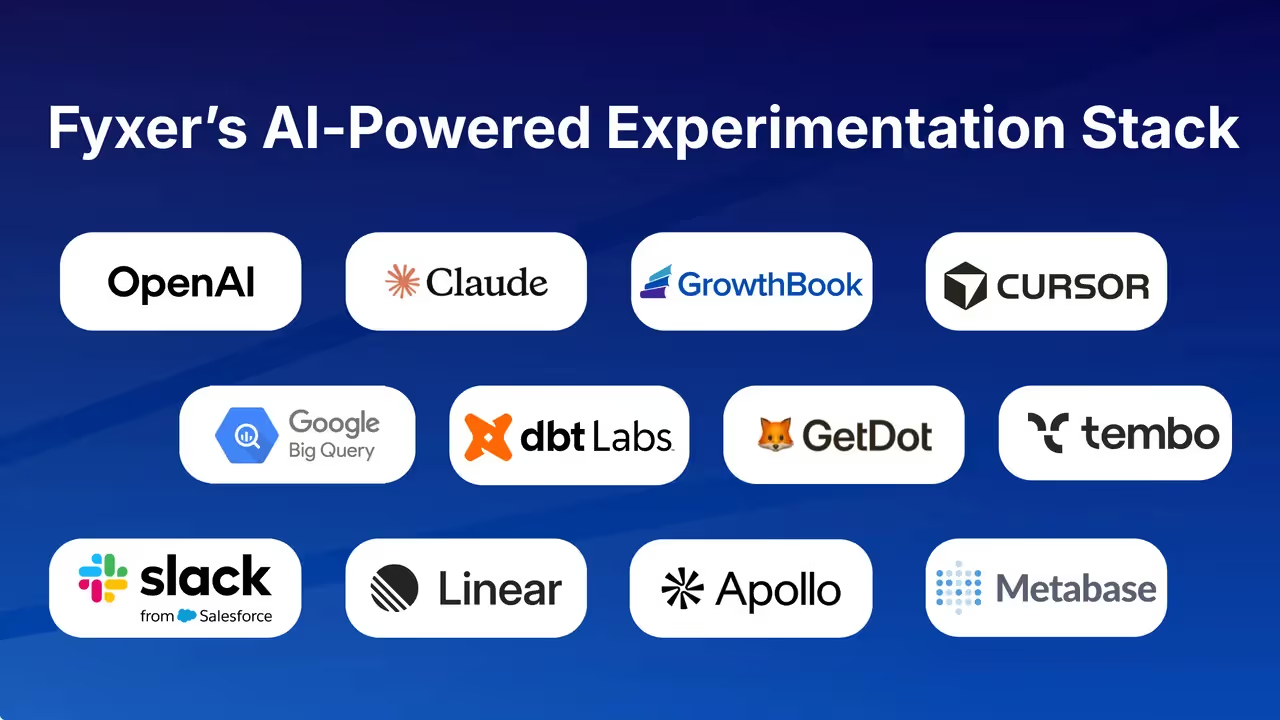

Platforms like GrowthBook implement both corrections natively in their frequentist engine, letting teams configure the correction method at the organization level so it applies consistently across experiments rather than requiring analysts to adjust p-values manually.

The Texas sharpshooter fallacy

There's a cognitive bias that describes what multiple testing looks like from the inside, and naming it makes it easier to catch in practice. The Texas Sharpshooter Fallacy comes from the image of a marksman firing at a barn, then painting a target around whichever cluster of bullet holes looks most like a grouping. The shooting didn't produce accuracy — the target selection did.

In A/B testing, this is what happens when analysts examine results across many metrics or subgroups without pre-committing to which ones matter, then report the ones that reached significance. It doesn't require bad intent. It happens naturally when a team is under pressure to show results and the data contains enough metrics that something will always look promising.

The defense is procedural: pre-register which metrics you're testing and what significance thresholds you'll apply before the experiment runs. If a metric wasn't in the pre-registered analysis plan, any significant result it produces is exploratory at best — a hypothesis for the next test, not a basis for a shipping decision.

P-values are necessary but not sufficient: building a complete A/B testing decision framework

A p-value answers one narrow question: is this result unlikely to have occurred by chance, assuming the null hypothesis is true? That's a useful question. It's not, however, the question your shipping decision depends on. As one practitioner put it in a widely shared discussion on experimental design, "What gets people are incorrect procedures," not the mathematics itself. The p-value is sound. The framework around it is where teams go wrong.

Why a significant p-value is not a shipping decision

A p-value below your threshold tells you the observed difference is unlikely under the null. It does not tell you whether the effect is large enough to matter commercially, whether your confidence interval is tight enough to trust, or whether the lift justifies the engineering cost of shipping. Think of the p-value as a gate, not a verdict. Clearing that gate means you've ruled out one specific explanation for your results — pure chance under the null. It doesn't mean the variant should ship. That decision requires additional inputs that a single probability value structurally cannot provide.

Confidence intervals reveal what the p-value conceals: the size and plausibility of the effect

Confidence intervals communicate something the p-value cannot: the range of plausible effect sizes. A narrow confidence interval around a small effect is more informative than a significant p-value alone, because it tells you both that an effect likely exists and approximately how large it is. When teams skip this step, they routinely conflate random variation with genuine effects — a pattern that leads to post-ship disappointment when real-world results don't match the experiment.

This is where the "winner's curse" becomes relevant. Underpowered tests that happen to reach significance tend to overestimate effect sizes. The result looks compelling in the dashboard, the team ships, and the lift fails to materialize at scale. Calculating the required sample size before the test runs, and pairing the resulting p-value with an effect size estimate, substantially reduces this risk. As the 0.01% lift example earlier shows, a result can clear the statistical bar and still fail the business bar. Only effect size makes that distinction visible.
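A small simulation makes the winner's curse visible. It uses hypothetical parameters: a 10% baseline, a true lift of one percentage point, and 1,000 users per arm, which leaves the test badly underpowered. It then averages the observed lift across only the runs that came out significant and positive, which are the runs a team would ship.

```python
import math
import random
from statistics import NormalDist


def winning_lifts(n_sims=1_000, n_per_arm=1_000, base=0.10, true_lift=0.01,
                  alpha=0.05, seed=1):
    """Observed lifts from simulated tests that came out significant and positive."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    winners = []
    for _ in range(n_sims):
        conv_a = sum(rng.random() < base for _ in range(n_per_arm))
        conv_b = sum(rng.random() < base + true_lift for _ in range(n_per_arm))
        pooled = (conv_a + conv_b) / (2 * n_per_arm)
        se = math.sqrt(pooled * (1 - pooled) * 2 / n_per_arm)
        observed = (conv_b - conv_a) / n_per_arm
        # Keep only the runs a team would have shipped: significant and positive.
        if se > 0 and observed / se > z_crit:
            winners.append(observed)
    return winners


winners = winning_lifts()
average = sum(winners) / len(winners)
print(f"{len(winners)} winners, average observed lift {average:.3f} (true lift 0.010)")
```

Expect the average "winning" lift to come out at a multiple of the true one-point lift. Filtering on significance selects the runs where noise happened to push the estimate up.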

Fixed-horizon testing was not designed for dashboards that update continuously

Fixed-horizon testing was designed for contexts where researchers don't monitor accumulating data — agriculture trials, clinical studies with predetermined endpoints. Applied to digital experimentation, where dashboards update continuously and business pressure to decide is constant, this creates a structural mismatch.

For teams that cannot realistically commit to a single look at the end of a predetermined run, sequential testing is the structurally appropriate alternative. It is designed to allow valid inference at multiple points during data collection, rather than treating each peek as a violation of the test's assumptions. Modern experimentation platforms increasingly support all three statistical frameworks — frequentist, Bayesian, and sequential — along with variance reduction techniques like CUPED, reflecting the practical reality that no single framework is optimal across all testing contexts.

Bayesian methods offer a different path: rather than asking whether the data is inconsistent with a null hypothesis, they ask how the data should update your prior beliefs about the effect. This framing is more natural for continuous monitoring, but it requires discipline in how decisions are made — the peeking problem doesn't disappear just because the framework changed. The method matters less than committing in advance to a decision rule and holding to it.
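To illustrate the Bayesian framing, here is a minimal Monte Carlo sketch of the "probability the variant beats control" quantity that Bayesian A/B dashboards commonly report. It assumes a uniform Beta(1, 1) prior on each arm's conversion rate and uses hypothetical counts. Real platforms may use different priors or closed-form calculations.

```python
import random


def prob_variant_beats_control(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta-Binomial posteriors.

    Assumes a uniform Beta(1, 1) prior on each arm's conversion rate.
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each arm: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


print(prob_variant_beats_control(200, 1000, 240, 1000))
```

For these counts the estimate comes out high, roughly 0.98. A rule like "ship once this exceeds 95%" is still a decision rule. If it is checked after every batch of users, noise can trigger it early, just as noise can trigger a peeked p-value.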

The p-value is a gate, not a verdict: what the full decision requires

A complete decision framework for p-value best practices in A/B testing treats the p-value as one input among several, not as the final word. When a test reaches its predetermined endpoint, the full decision checklist looks like this:

- Is the p-value below the pre-registered alpha threshold? If not, the result is not statistically significant: the observed difference can't be distinguished from noise, so do not ship based on it.

- Is the effect size large enough to matter commercially? A significant p-value on a negligible lift is not a shipping decision.

- Does the confidence interval exclude zero with enough margin to trust? A wide interval that barely clears zero is a weak signal even when significant.

- Was the hypothesis pre-registered before the test ran? If not, the result is exploratory — it generates a hypothesis for the next test, not a basis for action.

- Was the sample size predetermined and reached before analysis? If the test was stopped early based on observed results, the p-value is not valid at face value.

- Were multiple testing corrections applied if more than one metric was evaluated? If not, the real false positive rate was higher than the stated alpha.
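The checklist above can be encoded as a pre-ship gate. This is a hypothetical sketch. The `ExperimentResult` fields and the `shipping_blockers` function are illustrative names, not any platform's API. The "enough margin" judgment on the confidence interval is simplified here to "the lower bound is above zero", and thresholds such as the minimum worthwhile lift are inputs your team would agree on before the test runs.

```python
from dataclasses import dataclass


@dataclass
class ExperimentResult:
    """Hypothetical summary of a finished A/B test; field names are illustrative."""
    p_value: float
    alpha: float                 # threshold fixed before launch
    observed_lift: float         # point estimate of the effect (absolute)
    ci_low: float                # lower bound of the confidence interval on the lift
    min_worthwhile_lift: float   # smallest lift worth shipping, agreed in advance
    pre_registered: bool
    reached_planned_sample: bool
    metrics_evaluated: int
    correction_applied: bool


def shipping_blockers(r: ExperimentResult) -> list[str]:
    """Return every checklist item the result fails; an empty list means no blockers."""
    blockers = []
    if r.p_value >= r.alpha:
        blockers.append("not significant at the pre-registered alpha")
    if r.observed_lift < r.min_worthwhile_lift:
        blockers.append("effect too small to matter commercially")
    if r.ci_low <= 0:
        blockers.append("confidence interval does not exclude zero")
    if not r.pre_registered:
        blockers.append("hypothesis not pre-registered: treat as exploratory")
    if not r.reached_planned_sample:
        blockers.append("stopped before the planned sample size")
    if r.metrics_evaluated > 1 and not r.correction_applied:
        blockers.append("no multiple testing correction across metrics")
    return blockers


# Hypothetical result: significant, but small and uncorrected across five metrics.
result = ExperimentResult(
    p_value=0.03, alpha=0.05, observed_lift=0.004, ci_low=0.001,
    min_worthwhile_lift=0.01, pre_registered=True, reached_planned_sample=True,
    metrics_evaluated=5, correction_applied=False,
)
for item in shipping_blockers(result):
    print("-", item)
```

The example clears p < 0.05 but still fails two checks. That is the point of treating the p-value as a gate rather than a verdict.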

When results are ambiguous — p-values near the threshold, wide confidence intervals, or underpowered tests — the correct response is to run a follow-up test with a pre-registered hypothesis and adequate sample size, not to rationalize a shipping decision from the current data.

P-value best practices for A/B testing: a checklist for getting it right

The failure modes covered in this article are not theoretical. They are the default behaviors of most experimentation programs operating without explicit process guardrails. The checklist below is organized around the two moments where p-value best practices are most commonly violated: before the test runs, and when reading results.

The most important decisions in an A/B test happen before it runs

The decisions made before a test launches determine whether the p-value it produces is interpretable. Specifically:

- Define and document the primary metric before the test begins. Secondary metrics are fine to track, but only the primary metric drives the shipping decision. If you haven't committed to a primary metric in advance, any metric that reaches significance can be retroactively promoted to primary — which is the Texas Sharpshooter Fallacy in practice.

- Set your alpha threshold and required sample size before launching. Use a power calculation based on your baseline conversion rate, minimum detectable effect, and desired statistical power (typically 80%). Do not adjust these after seeing data.

- Pre-register the hypothesis. Write down what you expect to happen and why before the test runs. This is the single most effective structural defense against p-hacking.

- Decide in advance how many metrics you'll evaluate and whether you'll apply multiple testing corrections. If you're running a confirmatory test, apply FWER control. If you're running exploratory analysis, apply FDR control. Configure this at the platform level so it applies consistently.

- Choose your statistical framework before the test runs. If your team cannot realistically commit to a single look at the end, use sequential testing rather than fixed-horizon testing. Platforms that support sequential testing natively allow the choice of framework to match the actual decision context rather than defaulting to whatever the dashboard shows.
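The pre-test steps above can be captured in a single record that is written before launch. This is a hypothetical sketch. `ExperimentPlan` and its fields are illustrative, not any platform's API. The sample size method uses the standard normal-approximation formula for a two-sided, two-proportion test, and the baseline and minimum detectable effect in the example are assumptions.

```python
import math
from dataclasses import dataclass
from statistics import NormalDist


@dataclass(frozen=True)  # frozen: the plan can't be edited after launch
class ExperimentPlan:
    """Hypothetical pre-registration record; field names are illustrative."""
    hypothesis: str
    primary_metric: str
    baseline_rate: float
    min_detectable_effect: float  # absolute lift, e.g. 0.02 = 2 percentage points
    alpha: float = 0.05
    power: float = 0.80
    correction: str = "holm"      # "holm" for confirmatory tests, "bh" for exploratory
    framework: str = "fixed-horizon"

    def sample_size_per_arm(self) -> int:
        """Users per arm for a two-sided two-proportion z-test (normal approximation)."""
        p1 = self.baseline_rate
        p2 = p1 + self.min_detectable_effect
        p_bar = (p1 + p2) / 2
        z_alpha = NormalDist().inv_cdf(1 - self.alpha / 2)
        z_beta = NormalDist().inv_cdf(self.power)
        numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                     + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
        return math.ceil(numerator / self.min_detectable_effect ** 2)


plan = ExperimentPlan(
    hypothesis="A shorter checkout form raises purchase conversion",
    primary_metric="purchase_conversion",
    baseline_rate=0.20,
    min_detectable_effect=0.02,
)
print(plan.sample_size_per_arm())
```

With a 20% baseline and a two-point minimum detectable effect, this plan calls for roughly 6,500 users per arm. Halving the detectable effect roughly quadruples the requirement, which is why the MDE belongs in the plan rather than being chosen after the data arrives.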

Reading results: p-value alongside confidence interval and effect size, not instead of them

When the test reaches its predetermined endpoint:

- Read the p-value alongside the confidence interval and effect size, not instead of them. A significant p-value with a wide confidence interval and a small effect size is a weak result. A significant p-value with a narrow confidence interval and a meaningful effect size is a strong one.

- Do not stop the test early because results look promising. If the test was designed for a fixed horizon, run it to that horizon. Early stopping based on observed results invalidates the p-value.

- Treat any metric that wasn't pre-registered as exploratory. Significant results on non-pre-registered metrics are hypotheses for the next test, not shipping decisions.

- Apply the business significance filter after the statistical significance filter. Ask: is this effect large enough to justify the engineering cost of shipping and maintaining the variant?

- If results are ambiguous, run a follow-up test. Do not rationalize a shipping decision from an underpowered or borderline result.

If your team cannot commit to a single look, fixed-horizon testing is the wrong tool

The most common mismatch in applied A/B testing is using fixed-horizon frequentist methods in environments where continuous monitoring is the norm. If your stakeholders will check the dashboard daily, if your engineers will stop tests early when results look good, or if your organization cannot enforce a predetermined run duration — fixed-horizon testing will produce inflated false positive rates regardless of how carefully the test was designed.

The structural solution is to adopt a framework that was built for continuous monitoring: sequential testing for frequentist inference, or Bayesian methods with a pre-committed decision rule. Neither eliminates the need for discipline, but both are designed for the actual conditions under which most product teams operate. Audit the last three A/B tests your team shipped: were the hypotheses pre-registered, were the run durations predetermined, and were the results read once at the end? If the answer to any of those is no, the p-values those tests produced are not reliable at face value — and the shipping decisions made on them deserve a second look.

What to do next: Start with the pre-test checklist. Before your next experiment launches, document the primary metric, set the alpha threshold, run a power calculation to determine required sample size, and write down the hypothesis. If your current experimentation platform doesn't make it easy to configure multiple testing corrections at the organization level or to switch between frequentist, sequential, and Bayesian frameworks, that's a platform constraint worth addressing — the best p-value practices in the world are difficult to enforce when the tooling works against them.
