How to calculate statistical significance

Most A/B test mistakes don't happen in the math.

They happen before the test launches, and again when teams read the results. A significance calculation can be technically correct and still produce a wrong decision — because the sample size wasn't set in advance, because someone peeked at the data early, or because the platform automated a step that no one thought to verify. This article is for engineers, PMs, and data teams who run experiments and want to understand what the numbers actually mean — not just how to read a dashboard, but how to catch it when the dashboard is misleading you.

This guide walks through the full statistical significance calculation for A/B testing from start to finish, in the order it actually matters. Here's what you'll learn:

- What must be decided before a test launches — and why skipping it corrupts results you can't recover

- How significance is actually calculated, from conversion rates to z-scores to p-values

- The most common misreadings of statistical significance that cause teams to ship bad decisions

- How running multiple tests simultaneously breaks naive significance math — and how to fix it

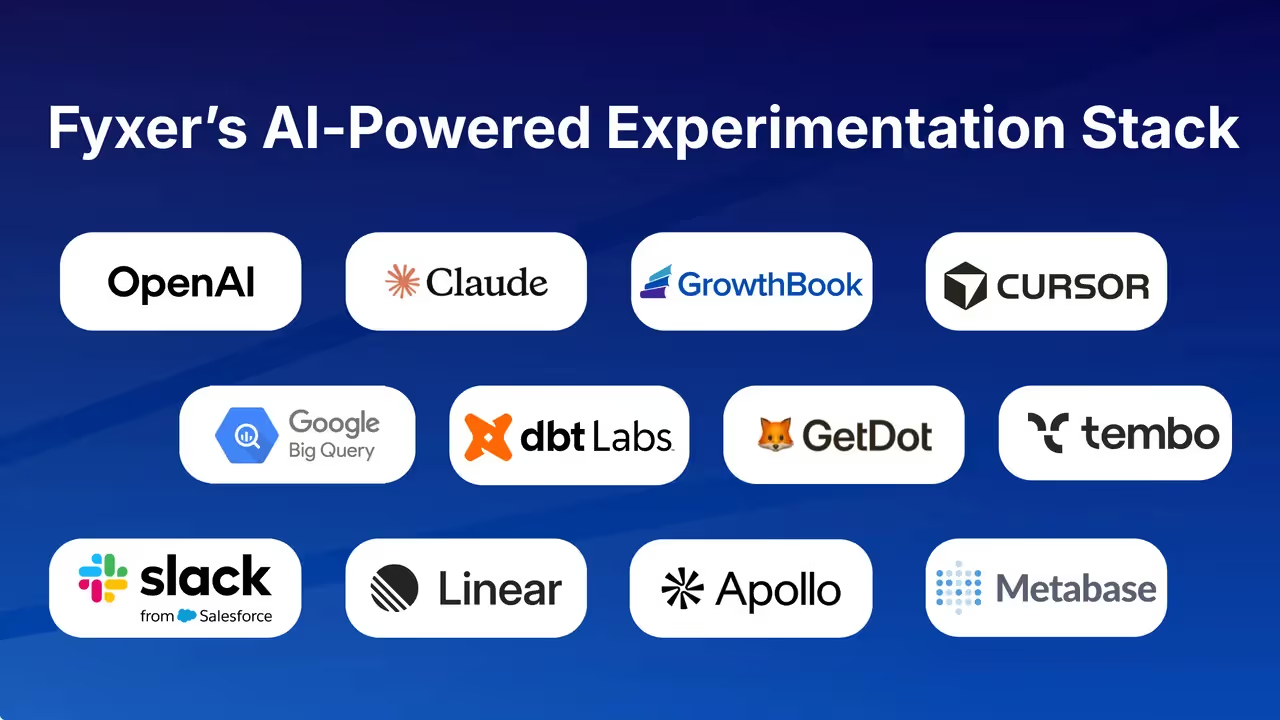

- What modern experimentation platforms like GrowthBook automate, where the gaps are, and how to audit what you can't see

The article is structured to follow the lifecycle of an experiment: pre-test planning, the calculation itself, interpretation pitfalls, multiple testing problems, and finally what platforms handle for you versus what still requires your judgment.

Why statistical significance starts before you launch the A/B test

"Poorly planned experiments waste time and lead to bad decisions." That line comes from GrowthBook's pre-test planning guide, and it understates the problem.

Poorly planned experiments don't just waste time — they produce results that look valid, get acted on, and quietly corrupt product decisions for months. The statistical significance calculation at the end of an A/B test is only trustworthy if the protocol before the test was followed. Skip the pre-test work, and you can execute every formula correctly and still reach the wrong conclusion.

This is as much a discipline problem as a math problem. The order of operations matters statistically, and the pressure to move fast — to declare a winner, ship the feature, hit the OKR — is exactly what causes teams to reverse-engineer their analysis after results start looking promising. That reversal is not a shortcut. It is, by design, a mechanism for generating false positives.

The decisions that determine whether your significance calculation is valid

GrowthBook's documented anatomy of an A/B test sequences five steps: Hypothesis → Assignment → Variations → Tracking → Results. The hypothesis is first, and that ordering is not arbitrary.

A valid hypothesis must be specific, measurable, relevant, clear, simple, and falsifiable before the experiment runs. The more variables involved, the less causality the results can imply.

Audience selection is also a pre-test decision, not a post-hoc filter. If you're testing a new user registration form, your experiment audience should be unregistered users only. Including all users adds noise from people who can't even see the variation, which reduces your ability to detect a real effect. Choosing the right audience before launch directly affects what the statistical calculation can tell you afterward.

Sample size calculation — why you need a number before you start

The ABTestGuide calculator makes the correct workflow explicit by separating two distinct modes: pre-test calculation and post-test evaluation. In pre-test mode, you input expected visitors per variation, baseline conversion rate, and expected uplift — and the calculator tells you the sample size you need before you start collecting data. That separation exists for a reason.

Sample size is derived from inputs that must be fixed before the test runs: your desired confidence level, your desired statistical power (commonly 80%), your minimum detectable effect (MDE), and your baseline conversion rate. As one practitioner put it on Hacker News: "You need to know this before you do any type of statistical test. Otherwise, you are likely to get 'positive' results that just don't mean anything."

The MDE is not something you discover during the test — it is something you commit to before it. Running an experiment until it "looks significant" and then stopping is not an analysis strategy. It is the core mechanism of p-hacking.
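As a rough illustration of the pre-test calculation, the sketch below estimates the visitors needed per variation for a two-sided test on a conversion rate. It uses the standard normal-approximation formula for comparing two proportions. The function name, the 80% power default, and the example inputs are assumptions for illustration, not values taken from the ABTestGuide calculator.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed per variation (two-sided test on proportions)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)      # rate if the MDE is exactly met
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the threshold
    z_beta = NormalDist().inv_cdf(power)           # critical value for the power
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance_sum / (p2 - p1) ** 2)


# Hypothetical inputs: 1% baseline, 14% relative uplift as the MDE.
n_required = sample_size_per_variant(0.01, 0.14)
print(f"Visitors needed per variation: {n_required}")
```

With these inputs the requirement lands in the tens of thousands of visitors per variation, and halving the MDE roughly quadruples it. That is why the MDE has to be a commitment rather than a discovery.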

Setting the significance threshold — 90%, 95%, or 99%?

The confidence level is the degree of certainty required before a result is called statistically significant. The industry default is 95%, meaning you're willing to accept a 5% chance of a false positive. But the threshold must be set before launch, not adjusted after you see which direction the results are trending.

There's a real trade-off here: a lower confidence level (say, 90%) increases statistical power, so you can detect the same effect with a smaller sample or a smaller effect with the same sample. A higher threshold (99%) reduces false positives but requires more data and more time.

Neither is universally correct — the right choice depends on the stakes of the decision and the cost of being wrong in either direction. What is universally wrong is choosing the threshold after peeking at results.

The Texas sharpshooter and p-hacking — what goes wrong without pre-test planning

Both failure modes trace back to the same root cause: the absence of a locked-in analysis plan before the experiment runs.

The Texas Sharpshooter fallacy takes its name from a marksman who fires at a barn, then paints a target around wherever the bullets clustered — manufacturing the appearance of accuracy after the fact. In A/B testing, this happens when teams analyze results without a pre-specified hypothesis, scanning across segments, time windows, and metrics until a pattern emerges that tells the story they wanted. The pattern is real. The inference is not.

P-hacking is the dynamic version of the same problem: repeatedly testing data using different methodologies or subsets until a statistically significant result appears, even when the observed effect is due to chance. The danger is that it works.

You will find significance if you look long enough and flexibly enough — which is precisely why it produces conclusions that don't replicate and A/B test "wins" that don't translate into real user acquisition gains.

The fix is not more sophisticated math. It is committing to the hypothesis, the metric, the audience, the sample size, and the significance threshold before a single user is assigned to a variation. The statistical significance calculation at the end of the test is only as valid as the discipline that preceded it.

How statistical significance is actually calculated in an A/B test

Every experimentation platform produces a significance result automatically. You enter your conversion counts, click a button, and a p-value appears. Walking through the formula manually isn't an academic exercise — it's the prerequisite for knowing whether your platform is doing it correctly.

A number you can't trace back to its inputs is a number you can't audit, and when results look surprising, or when a stakeholder challenges your conclusion, "the dashboard said so" is not a defensible answer. Understanding the formula chain that produces a p-value is what separates practitioners who can evaluate platform outputs from those who have to trust them blindly.

The raw inputs: observed rates and what they actually represent

The calculation starts with the simplest possible inputs: how many visitors saw each variant, and how many of them converted.

- CR_A = Conversions_A / Visitors_A

- CR_B = Conversions_B / Visitors_B

These two numbers represent the observed effect: the raw difference that the rest of the calculation must classify as either real or random noise. To make this concrete: if Variant A converts at 1.00% and Variant B at 1.14%, that 0.14 percentage point absolute difference (a 14% relative uplift) is what the rest of the math is trying to evaluate.

What matters here is metric type. A proportion metric — did the user convert, yes or no — uses a binomial variance formula. A mean metric, like average revenue per user, uses standard sample variance. A ratio metric, like bounce rate calculated as bounced sessions divided by total sessions, requires a more complex approach called the delta method because the unit of analysis differs from the unit of randomization.

The delta method is a statistical technique for estimating variance when your metric is a ratio — essentially, it accounts for the fact that both the numerator and denominator vary across users, which makes the uncertainty calculation more complex than for a simple conversion rate. Platforms like GrowthBook select the appropriate variance formula automatically based on how the metric is defined, which is one of the places where platform math is genuinely more reliable than naive manual calculation.
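To make the delta method less abstract, here is a minimal sketch of the first-order variance approximation for a ratio metric computed from per-user totals. The function name and the toy data are hypothetical, and this is not GrowthBook's implementation; it only shows the shape of the formula.

```python
from statistics import mean, variance


def ratio_metric_variance(numerators, denominators):
    """Return (ratio, approximate variance of the ratio) via the delta method.

    Each position is one randomized user: numerators[i] might be that user's
    bounced sessions and denominators[i] their total sessions.
    """
    n = len(numerators)
    mu_m, mu_d = mean(numerators), mean(denominators)
    var_m, var_d = variance(numerators), variance(denominators)
    # Sample covariance between numerator and denominator across users.
    cov = sum((m - mu_m) * (d - mu_d)
              for m, d in zip(numerators, denominators)) / (n - 1)
    ratio = mu_m / mu_d
    # First-order Taylor expansion of M/D around (mu_m, mu_d).
    var_ratio = (var_m - 2 * ratio * cov + ratio ** 2 * var_d) / (mu_d ** 2 * n)
    return ratio, var_ratio


# Toy data for six users (hypothetical).
bounced = [1, 0, 2, 1, 0, 3]
sessions = [2, 1, 4, 3, 1, 5]
bounce_rate, bounce_var = ratio_metric_variance(bounced, sessions)
print(f"bounce rate = {bounce_rate:.4f}, variance = {bounce_var:.6f}")
```

When every user has exactly one session, the denominator has no variance and the expression collapses to the ordinary variance of a mean, which is a useful sanity check.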

Quantifying uncertainty: how sample size shapes reliability

Once you have conversion rates, the next step is quantifying how much each rate would vary if you ran the experiment again with a different random sample. That's what standard error measures.

For a proportion metric:

- SE_A = √(CR_A × (1 − CR_A) / Visitors_A)

- SE_B = √(CR_B × (1 − CR_B) / Visitors_B)

The denominator is the key intuition: larger sample sizes produce smaller standard errors, which is the mathematical reason why more traffic produces more reliable results. A conversion rate of 1.00% measured across 10,000 visitors carries far less uncertainty than the same rate measured across 500 visitors.
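The comparison in the last sentence can be checked directly. This short sketch applies the proportion standard error formula above to the same 1.00% rate at both sample sizes; the function name is an illustrative choice.

```python
from math import sqrt


def standard_error(conversion_rate, visitors):
    """Standard error of a conversion rate (binomial proportion)."""
    return sqrt(conversion_rate * (1 - conversion_rate) / visitors)


se_large = standard_error(0.01, 10_000)
se_small = standard_error(0.01, 500)
print(f"SE at 10,000 visitors: {se_large:.5f}")
print(f"SE at 500 visitors:    {se_small:.5f}")
print(f"Ratio: {se_small / se_large:.2f}")
```

Because the sample size sits under a square root, 20 times more traffic shrinks the standard error by a factor of about 4.5, not 20.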

Combining uncertainty into a single normalized score

The two individual standard errors get combined into a single measure of uncertainty for the comparison itself:

SE_diff = √(SE_A² + SE_B²)

This combined uncertainty is then used to normalize the observed difference between variants into a z-score:

Z = (CR_B − CR_A) / SE_diff

A z-score of 1.96 corresponds to the 95% confidence threshold for a two-tailed test. The z-score is what gets converted into a p-value via the normal distribution — or, more precisely, via the t-distribution when sample sizes are small to medium.

What the p-value actually measures — and what it doesn't

The p-value is the probability of observing a z-score at least as extreme as the one you calculated, assuming the null hypothesis is true, meaning there is actually no difference between the variants. For the 1.00% versus 1.14% example above, at the sample size behind that example, the resulting p-value is 0.0157, which falls below the 0.05 threshold for a 95% confidence level, producing a statistically significant result.
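The full chain from counts to p-value fits in a few lines. The article does not state the sample size behind the 1.00% versus 1.14% example, so the sketch below assumes 50,000 visitors per variant (500 and 570 conversions); that figure is an assumption chosen for illustration. It uses a plain z-test with the normal distribution, not the t-distribution.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, visitors_a, conv_b, visitors_b):
    """Return (z, one-sided p, two-sided p) for B versus A."""
    cr_a = conv_a / visitors_a
    cr_b = conv_b / visitors_b
    se_a = sqrt(cr_a * (1 - cr_a) / visitors_a)
    se_b = sqrt(cr_b * (1 - cr_b) / visitors_b)
    se_diff = sqrt(se_a ** 2 + se_b ** 2)          # combined uncertainty
    z = (cr_b - cr_a) / se_diff                    # normalized difference
    p_one_sided = 1 - NormalDist().cdf(z)          # "B is better than A"
    p_two_sided = 2 * (1 - NormalDist().cdf(abs(z)))  # "B differs from A"
    return z, p_one_sided, p_two_sided


# Assumed sample size: 50,000 visitors per variant (not given in the article).
z, p_one, p_two = two_proportion_z_test(500, 50_000, 570, 50_000)
print(f"z = {z:.3f}, one-sided p = {p_one:.4f}, two-sided p = {p_two:.4f}")
```

Under that assumed sample size, z comes out near 2.15 and the one-sided p-value near 0.0157; the two-sided value is twice that and still below 0.05. Which of the two a calculator reports is worth checking before comparing numbers across tools.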

One important implementation detail: GrowthBook's frequentist engine computes p-values using the t-distribution rather than the standard normal, with degrees of freedom estimated via the Welch-Satterthwaite approximation. At large sample sizes this converges to the normal distribution, so it produces equivalent results for high-traffic tests — but it's more statistically rigorous for smaller samples where the normal approximation breaks down.

In practice, this means significance calculations are more reliable for smaller experiments than a simple z-test would be — the t-distribution produces wider, more honest confidence intervals when sample sizes are small, which reduces the risk of calling a result significant when it isn't.
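For readers who want to see what the Welch-Satterthwaite approximation computes, here is a sketch of the degrees-of-freedom formula. It is a textbook version, not GrowthBook's code, and the function name and inputs are illustrative. Turning the result into a p-value needs a t-distribution CDF, which Python's standard library does not provide; `scipy.stats.t.sf` is one option.

```python
def welch_satterthwaite_df(var_a, n_a, var_b, n_b):
    """Approximate degrees of freedom for a two-sample t-test with unequal variances."""
    term_a = var_a / n_a   # squared standard error of group A's mean
    term_b = var_b / n_b   # squared standard error of group B's mean
    return (term_a + term_b) ** 2 / (
        term_a ** 2 / (n_a - 1) + term_b ** 2 / (n_b - 1)
    )


# Small experiment: few degrees of freedom, so the t-distribution has heavier tails.
df_small = welch_satterthwaite_df(1.0, 10, 5.0, 40)
# Large experiment (assumed 50,000 per variant): effectively the normal distribution.
df_large = welch_satterthwaite_df(0.01 * 0.99, 50_000, 0.0114 * 0.9886, 50_000)
print(f"df (small) = {df_small:.1f}, df (large) = {df_large:.0f}")
```

With tens of thousands of users per variant the degrees of freedom are so large that the t-distribution and the normal distribution are indistinguishable in practice, which is the convergence described above.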

What the p-value does not mean: it is not the probability that your result is true, or that the variant is genuinely better. That misinterpretation is covered in the next section, but it's worth flagging here because the formula itself makes no claim about the probability of the hypothesis — only about the probability of the data given the null.

When the normal approximation breaks down

For large samples with proportion metrics, the z-test is standard and the normal approximation holds. For small to medium samples, the t-distribution is more appropriate — and using it by default across all metric types is a better choice than a pure z-test for most real-world experiment sizes.

The practical guardrail worth knowing: ABTestGuide's calculator displays an explicit low-data warning when a result is based on 20 conversions or fewer, even if the p-value crosses the significance threshold. This warning exists because the normal approximation becomes unreliable at very small sample sizes, and a technically significant result built on 15 conversions should be treated with serious skepticism regardless of what the formula produces. Understanding the formula chain is precisely what allows you to recognize when a result is mathematically valid but practically meaningless.

The most dangerous misunderstandings about statistical significance in A/B testing

The math behind statistical significance is not especially complicated. The interpretation is where experiments go to die.

Teams run the calculations correctly, read the output wrong, and ship decisions that feel rigorous but are statistically indefensible. These aren't edge cases — they're the norm, and they cost real money.

Why checking results early inflates your false positive rate

In 2014, a Hacker News thread surfaced a pattern that practitioners had been quietly frustrated about for years: A/B test wins that weren't translating into actual improvements in user acquisition. The diagnosis wasn't bad products or bad hypotheses. It was early stopping.

Teams were checking their dashboards, seeing significance, and calling the test — before reaching the sample sizes they'd originally planned for.

This is called the peeking problem, and the math is unforgiving. If you check your results five times during a test, your actual false positive rate climbs from the intended 5% to over 14%. With continuous peeking, it can exceed 40%. Statistician Evan Miller demonstrated this with a simulation: he generated random data with no real difference between groups, then "peeked" 100 times. Despite the complete absence of a true effect, the test showed p < 0.05 at some point 40.1% of the time.
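The inflation is easy to reproduce. The sketch below is a simplified simulation, not Evan Miller's original: it draws A/A data from a standard normal metric with no true difference, checks a two-sided z-test at 1.96 after each of several equally sized batches, and counts how often any look crosses the threshold. Batch sizes, simulation counts, and the seed are arbitrary choices.

```python
import random
from math import sqrt

Z_CRIT = 1.96  # two-sided 95% threshold


def false_positive_rate(n_looks, batch_size, n_sims=20_000, seed=1):
    """Share of A/A simulations where any interim look shows |z| > 1.96."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        sum_a = sum_b = 0.0
        n = 0
        for _ in range(n_looks):
            # The sum of batch_size N(0, 1) values is N(0, batch_size),
            # so each batch can be drawn in one call per arm.
            sum_a += rng.gauss(0.0, sqrt(batch_size))
            sum_b += rng.gauss(0.0, sqrt(batch_size))
            n += batch_size
            z = (sum_b / n - sum_a / n) / sqrt(2.0 / n)
            if abs(z) > Z_CRIT:
                hits += 1   # someone would have stopped the test here
                break
    return hits / n_sims


single_look = false_positive_rate(n_looks=1, batch_size=1000)
five_looks = false_positive_rate(n_looks=5, batch_size=200)
print(f"One look at the end: {single_look:.3f}")
print(f"Five interim looks:  {five_looks:.3f}")
```

Both runs use the same total sample of 1,000 per arm. The only difference is how many times the result is checked, and that alone is enough to push the error rate well above the nominal 5%.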

The platform design problem is real here. Any dashboard that shows a live p-value or a confidence percentage creates the temptation to stop early. The calculation on the screen may be perfectly correct — and still lead to a wrong decision, because the threshold was designed for a fixed sample size, not an ongoing series of looks.

Sequential testing methods exist precisely to address this, allowing valid interim checks without inflating false positive rates. But most teams aren't using them, and most dashboards don't make the distinction obvious.

81% confidence is not almost 95%

A result at 81% confidence is not "almost" statistically significant at 95%. This framing — "we're close, let's just call it" — treats confidence as a linear scale when it isn't.

The gap between 81% and 95% represents a fundamentally different probability of drawing a false conclusion. Rounding up to significance under organizational pressure is not a statistical judgment. It's a business judgment dressed up as one, and the two should not be confused.

A significant result can still be wrong to ship

Statistical significance and practical significance are separate questions, and answering one does not answer the other. A conversion rate improvement can be statistically significant — genuinely, mathematically real — and still not be worth shipping.

If the effect size is tiny and the engineering cost is high, or if the UX tradeoff affects other metrics, significance alone tells you nothing about whether to act. Before any result gets shipped, two questions need answers: Is this real? And does it matter enough to act on? Most teams only ask the first one.

The compounding false positive problem

This is where scale turns a manageable error rate into an operational crisis. At a 95% confidence threshold, a test with a single metric has a 5% chance of a false positive; a two-tailed test splits that 5% across both sides rather than doubling it. Add a second unrelated metric and the probability of at least one false positive climbs to roughly 10%. Five metrics: about 23%. Twenty metrics: approximately 64%, assuming metric independence.

That last caveat matters. In digital products, metrics are rarely independent: page views correlate with funnel starts, which correlate with purchases. The true false positive probability is harder to calculate, but the direction is clear: more metrics means more noise masquerading as signal.

This is also the mechanism behind p-hacking. An analyst who adds metrics to a test until something turns green isn't discovering real effects — they're manufacturing false positives through repeated testing. It can happen unconsciously, which makes it more dangerous than deliberate fraud.

The fix is to specify which metrics matter before the test runs, not after the results come in. GrowthBook's documentation is unusually transparent about this math, publishing the specific compounding percentages in its A/A testing guidance — which is worth reading before trusting any live experiment's output.

Statistical significance is a threshold, not a verdict. Treating it as one is the most expensive mistake in experimentation.

How running many tests simultaneously breaks naive statistical significance calculations

The 64% false positive rate from twenty metrics isn't just a statistical curiosity — it's the baseline condition for any team running experiments at scale. The problem isn't that individual significance calculations are wrong. It's that correct per-test math applied to dozens of simultaneous tests produces a per-program false positive rate that makes the per-test threshold meaningless.

Why twenty metrics at 5% alpha produces a 64% false positive rate

The core mechanism is straightforward. A single hypothesis test at α=0.05 has a 5% false positive rate by definition — that's what the threshold means. But when you run multiple independent tests, the probability that none of them produce a false positive is (0.95)^n, where n is the number of tests. For 20 tests, that's (0.95)^20 ≈ 0.36, meaning there's a 64% chance at least one test flags as significant by chance alone.
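The formula is simple enough to tabulate. This sketch evaluates 1 − (1 − α)^n for a few test counts, under the same independence assumption; the function name is illustrative.

```python
def prob_any_false_positive(n_tests, alpha=0.05):
    """Chance that at least one of n independent null tests is flagged."""
    return 1 - (1 - alpha) ** n_tests


for n_tests in (1, 2, 5, 10, 20, 100):
    print(f"{n_tests:>3} tests: {prob_any_false_positive(n_tests):.1%}")
```

The curve rises steeply at first and then saturates: by 100 independent tests, at least one false positive is close to certain.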

The multiplication happens faster than most teams realize. Ten experiments running simultaneously, each with two variations and ten metrics, equals 100 simultaneous hypothesis tests. At that scale, the naive per-test false positive rate becomes almost meaningless as a quality signal.

One important caveat: the 64% figure assumes metric independence. In real digital products, metrics are often correlated — page views relate to funnel starts, registration events relate to purchase events. Correlated metrics change the exact probability, but they don't eliminate the exposure. The problem is structural, not just mathematical.

FWER vs. FDR: two frameworks for controlling the problem

Two main correction frameworks exist, and they answer different questions.

Family-Wise Error Rate (FWER) controls the probability of any false positive occurring across all tests. Formally, FWER = Pr(V ≥ 1), where V is the number of false positive significant results. In plain terms: if you run 20 tests and FWER is controlled at 5%, there is at most a 5% chance that even one of those 20 results is a false positive.

The Holm-Bonferroni method controls FWER and is less conservative than simple Bonferroni — which just multiplies each p-value by the total number of tests — while providing the same guarantee. The trade-off is complexity: Holm-Bonferroni is a step-down procedure, and confidence intervals can't be adjusted in a directly analogous way.

False Discovery Rate (FDR) controls the proportion of significant results that are false. Formally, FDR = E[V/R], where R is the total number of significant results. In plain terms: if you get 20 significant results and FDR is controlled at 5%, you'd expect at most 1 of those 20 to be a false positive — but you don't know which one.

The Benjamini-Hochberg method implements FDR control and is less strict than FWER corrections, which gives it more statistical power at the cost of a higher tolerance for individual false positives.

The practical choice depends on what you're doing. Exploratory analysis with many metrics — where you're looking for signals to investigate further — suits FDR. Confirmatory analysis where every significant result needs to be reliable suits FWER.

One important constraint: with very large numbers of tests, FWER corrections can destroy statistical power entirely, making it nearly impossible to detect real effects. At that scale, FDR is often the only workable option. Platforms like GrowthBook implement both Holm-Bonferroni and Benjamini-Hochberg as configurable options in their frequentist engine — though notably, these corrections don't apply to Bayesian mode, so teams using Bayesian statistics need to account for multiple comparisons separately.
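Both procedures are short enough to write out. The sketch below gives textbook versions of Holm-Bonferroni and Benjamini-Hochberg that return a reject/keep decision for each p-value. It is not GrowthBook's implementation, and the example p-values are made up.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Step-down FWER control: returns a list of reject decisions."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # smallest p first
    reject = [False] * m
    for rank, idx in enumerate(order):
        # The threshold loosens as hypotheses are rejected: alpha/m, alpha/(m-1), ...
        if p_values[idx] <= alpha / (m - rank):
            reject[idx] = True
        else:
            break  # stop at the first failure; all larger p-values are kept
    return reject


def benjamini_hochberg(p_values, alpha=0.05):
    """Step-up FDR control: returns a list of reject decisions."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            cutoff = rank  # remember the largest rank that passes
    reject = [False] * m
    for idx in order[:cutoff]:
        reject[idx] = True
    return reject


p_values = [0.01, 0.04, 0.03, 0.005]  # hypothetical results for four metrics
holm_result = holm_bonferroni(p_values)
bh_result = benjamini_hochberg(p_values)
print("Holm-Bonferroni:   ", holm_result)
print("Benjamini-Hochberg:", bh_result)
```

On this input, Holm-Bonferroni keeps two of the four results while Benjamini-Hochberg keeps all four, which illustrates the power difference between controlling FWER and controlling FDR.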

A/A tests as a diagnostic for platform reliability

Before trusting any significance calculation from your experimentation platform, run an A/A test — an experiment where both groups receive the identical experience. No real difference exists, so any statistically significant result is a false positive by definition. This makes A/A testing the right diagnostic tool for validating platform configuration before real experiments run.

The interpretation isn't binary. GrowthBook's documentation offers specific benchmarks: if 1–2 out of 10 metrics flag as significant in an A/A test, that's plausibly noise and likely not a setup problem. If 7 or more metrics flag with large effect sizes — 99.9%+ confidence — something is wrong. The 3–4 out of 10 range is genuinely ambiguous, and the right move is to check whether those metrics are correlated.

Three purchase-related metrics all flagging from a single unlucky randomization is a different situation than three unrelated metrics flagging independently.

When results are ambiguous, restart the A/A test with re-randomization. If the same metrics keep flagging across multiple runs, that's evidence of a real platform or tracking problem. If different metrics flag each time, it's consistent with random noise.

The logic is simple: if your platform produces false positives in a controlled A/A test where the correct answer is known, every significance calculation it produces for real experiments is suspect.
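To calibrate what "plausibly noise" looks like, the sketch below computes how often k or more of 10 metrics would flag in an A/A test if each metric had an independent 5% false positive rate. Both the independence and the 5% per-metric rate are simplifying assumptions; correlated metrics or a different threshold will shift these numbers.

```python
from math import comb


def prob_at_least(k, n_metrics=10, alpha=0.05):
    """Binomial tail: chance that k or more of n independent null metrics flag."""
    return sum(
        comb(n_metrics, i) * alpha ** i * (1 - alpha) ** (n_metrics - i)
        for i in range(k, n_metrics + 1)
    )


for k in (1, 2, 3, 7):
    print(f"{k}+ of 10 metrics flagged: {prob_at_least(k):.2e}")
```

One flagged metric out of ten is unremarkable under these assumptions, while seven or more is essentially impossible by chance alone, which is why that pattern points to a setup problem rather than bad luck.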

What modern experimentation platforms automate in A/B test significance calculations — and where the gaps are

The promise of modern experimentation platforms is that you shouldn't need to manually compute z-scores, apply Bonferroni corrections, or write SQL to detect a traffic imbalance between variants. That promise is largely kept.

But automation without transparency creates its own category of risk: decisions made on numbers you can't verify, from methods you don't fully understand, applied in configurations you may not have intentionally chosen. The right way to evaluate any experimentation platform isn't just what it automates — it's whether you can audit what it's doing on your behalf.

The statistical mechanics platforms protect you from getting wrong

A mature experimentation platform handles several statistical mechanics that manual processes routinely get wrong. It runs significance calculations correctly across Bayesian, frequentist, and sequential testing modes. It applies variance reduction through CUPED to tighten metric estimates without requiring manual covariate adjustment. It manages multiple comparison corrections to control false positive accumulation across metrics. And it detects Sample Ratio Mismatches to flag corrupted traffic splits before teams act on bad data.

Each of these corresponds to a specific, documented failure mode in manual experimentation. When a platform automates them reliably, it's genuinely protective — not just convenient.

Not all experimentation platforms implement these capabilities. Some tools that started as feature flag platforms provide limited statistical methods — sometimes just a basic z-test with no variance reduction, no multiple comparison correction, and no SRM detection — without surfacing that limitation in the UI. Understanding what your platform actually calculates is not paranoia. It is basic quality control.

One statistical framework cannot fit every experiment

Platforms that offer only one statistical framework force every experiment into the same mold regardless of context. Fixed-horizon frequentist testing requires a fixed sample size and pre-set stopping rules; peeking at results and stopping early invalidates the test. Sequential testing addresses this directly by allowing valid early stopping through methods that continuously adjust the significance threshold as data accumulates. Bayesian testing reframes the output entirely, expressing results as a probability that one variant is better rather than a binary significant/not-significant judgment.

Platforms that support all three modes allow teams to select the framework that fits their experiment's constraints rather than defaulting to whatever the platform happens to offer. A high-traffic e-commerce team running short-cycle tests has different needs than a B2B SaaS team with slow-moving conversion metrics. One statistical mode does not fit both.
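To show what "a probability that one variant is better" can look like in code, here is a minimal Beta-Binomial sketch with uniform priors, estimated by Monte Carlo sampling. This is one common textbook approach and is not a description of how any particular platform's Bayesian engine works. The counts reuse an assumed 50,000 visitors per variant.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Estimate P(rate_B > rate_A) from Beta(1 + conversions, 1 + non-conversions) posteriors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical counts: 1.00% versus 1.14% at 50,000 visitors each.
chance_to_win = prob_b_beats_a(500, 50_000, 570, 50_000)
print(f"P(B beats A) is roughly {chance_to_win:.3f}")
```

The output reads as a direct statement about the variants rather than about the data under a null hypothesis, which is the reframing described above. It does not remove the need to decide in advance how much probability is enough to act on.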

CUPED and the variance problem

CUPED (Controlled-experiment Using Pre-Experiment Data) reduces metric variance by adjusting for pre-experiment behavior, which means experiments reach statistical significance with fewer users, or detect smaller effects at the same sample size. In practice, this is a meaningful acceleration: lower variance means narrower confidence intervals and earlier, more reliable conclusions.

Implementing CUPED manually requires pre-experiment covariate data, non-trivial statistical adjustment, and consistent application across every experiment. Most teams skip it entirely without platform support. Platforms that include CUPED and post-stratification as standard capabilities make variance reduction accessible without requiring a statistics background to implement it.
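The core adjustment is compact even though operating it consistently is not. The sketch below applies the basic CUPED formula to synthetic data in which the in-experiment metric is correlated with a pre-experiment covariate. The data-generating numbers are invented, and a real implementation would estimate theta on data pooled across variants.

```python
import random
from statistics import mean, variance


def cuped_adjust(metric, covariate):
    """Return the metric adjusted by theta * (covariate - mean(covariate))."""
    n = len(metric)
    mean_x, mean_y = mean(covariate), mean(metric)
    cov_xy = sum((x - mean_x) * (y - mean_y)
                 for x, y in zip(covariate, metric)) / (n - 1)
    theta = cov_xy / variance(covariate)  # regression slope of metric on covariate
    return [y - theta * (x - mean_x) for x, y in zip(covariate, metric)]


# Synthetic users: pre-experiment spend predicts in-experiment spend.
rng = random.Random(0)
pre_spend = [rng.gauss(10, 3) for _ in range(5000)]
post_spend = [0.8 * x + rng.gauss(0, 2) for x in pre_spend]

adjusted = cuped_adjust(post_spend, pre_spend)
print(f"Variance before: {variance(post_spend):.2f}")
print(f"Variance after:  {variance(adjusted):.2f}")
```

The adjusted metric keeps the same mean but has lower variance, and the size of the reduction depends entirely on how well the pre-experiment covariate predicts the metric.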

SRM detection as a data quality gate

Sample Ratio Mismatch occurs when the actual traffic split between variants doesn't match the intended split. A test configured for 50/50 that actually runs at 53/47 has a data quality problem that can invalidate the entire result — and the significance calculation will happily produce a number without flagging the issue.

SRM is more common than most teams expect, caused by bot filtering, redirect timing, SDK initialization bugs, or inconsistent user assignment.

Platforms that automate SRM detection surface this problem before teams ship a decision based on corrupted data. Manual detection requires writing and running diagnostic queries after the fact, which most teams don't do systematically.
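A basic SRM check is a chi-square goodness-of-fit test on the assignment counts. The sketch below handles the two-variant case with the standard library; the function name, the example counts, and the strict 0.001 alert threshold mentioned in the comment are illustrative assumptions.

```python
from math import erfc, sqrt


def srm_p_value(users_a, users_b, expected_share_a=0.5):
    """Chi-square goodness-of-fit p-value (1 degree of freedom) for a two-way split."""
    total = users_a + users_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((users_a - expected_a) ** 2 / expected_a
            + (users_b - expected_b) ** 2 / expected_b)
    # For 1 degree of freedom, P(chi-square > x) equals erfc(sqrt(x / 2)).
    return erfc(sqrt(chi2 / 2))


# Hypothetical counts for a test configured as 50/50.
p_mismatch = srm_p_value(5300, 4700)   # a 53/47 split
p_healthy = srm_p_value(5020, 4980)    # a small, ordinary imbalance
print(f"53/47 split: p = {p_mismatch:.2e}")
print(f"Near 50/50:  p = {p_healthy:.3f}")
# A strict threshold such as p < 0.001 is a common choice for raising an SRM alert.
```

The same 53/47 ratio on a few hundred users would not be alarming. The test matters because it accounts for sample size instead of relying on how the split looks.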

The auditability gap

Here's where the automation argument reverses. Platforms that don't expose their underlying calculations — those that return results without allowing teams to see the SQL, the statistical method parameters, or the raw aggregates — require blind trust in a dashboard number. When a result looks surprising, or when a stakeholder pushes back, there's no path to independent verification.

GrowthBook's warehouse-native architecture addresses this: it queries your data warehouse directly, returns only aggregates to the platform, and exposes the full SQL so teams can reproduce any calculation independently. The explicit design goal is that any result can be confirmed by running the underlying query yourself. That's a meaningful architectural choice, not a feature checkbox: the platform's statistical outputs are auditable against your own data, not just trustworthy by assertion.

The contrast with non-warehouse-native platforms is concrete. When underlying data and calculations aren't visible, teams have no mechanism to distinguish a genuine effect from a platform bug, a configuration error, or a silent methodology change. A/A testing can reveal some of these problems after the fact, but it can't substitute for a platform that shows its work in the first place.

Matching your statistical method to your experiment's actual constraints

The statistical significance calculation for A/B testing is not one-size-fits-all. The right method depends on your traffic volume, your team's statistical maturity, how many metrics you're tracking, and whether you need to make interim decisions before a test completes. Getting this match wrong doesn't just produce suboptimal results — it produces results that look valid but aren't.

When to trust your platform's calculations — and when to audit them

The decision framework below is organized around the most common conditions teams actually face. Use it as a starting point, not a substitute for understanding the underlying logic.

If your team is running fewer than 5 simultaneous experiments with a single primary metric: Standard frequentist testing at 95% confidence with a pre-committed sample size is sufficient. Use your platform's default mode. Run an A/A test first to validate setup before any live experiment results are trusted.

If your team is tracking more than 5 metrics per experiment: Apply Holm-Bonferroni or Benjamini-Hochberg correction. Do not evaluate secondary metrics at the same threshold as your primary metric. The compounding false positive math makes unadjusted multi-metric evaluation unreliable at scale.

If your team peeks at results before reaching the planned sample size: Switch to sequential testing. Do not adjust your fixed-sample threshold to compensate for early looks — that adjustment doesn't work the way most teams assume, and it produces a false sense of rigor.

If your platform does not expose its underlying SQL or statistical method parameters: Run an A/A test before trusting any live experiment result. If the platform cannot explain what it's calculating, treat its outputs as unaudited. Platforms that operate as black boxes create a category of risk that no amount of statistical sophistication can compensate for.

If your team is in a high-traffic environment with fast-moving metrics: Frequentist testing with CUPED variance reduction is often the most efficient path to reliable conclusions. Lower variance means you reach significance faster without sacrificing rigor.

If your team is in a low-traffic environment with slow-moving conversion metrics: Bayesian testing is often more practical. It allows you to express results as a probability that one variant is better, which is more useful for decision-making when you can't accumulate the sample sizes that frequentist testing requires.

The pre-test commitment that makes the final number mean something

Every technique described in this article — significance thresholds, sample size calculations, multiple comparison corrections, sequential testing — is only as useful as the discipline that precedes it. The pre-test commitment is not a formality. It is the mechanism that makes the final significance number interpretable.

Before any experiment launches, the following must be locked in:

- The primary metric and any secondary metrics, specified in advance

- The minimum detectable effect the test is designed to detect

- The sample size required to detect that effect at the chosen confidence level

- The significance threshold, set before results are visible

- The stopping rule — when the test ends, regardless of what the dashboard shows
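One lightweight way to make that commitment concrete is to record it as an immutable object before launch. The sketch below is purely illustrative: the class, its field names, and the example values are hypothetical and do not correspond to any platform's API.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class ExperimentPlan:
    """Pre-registered analysis plan; frozen so it cannot be edited after launch."""
    primary_metric: str
    secondary_metrics: tuple
    relative_mde: float
    sample_size_per_variant: int
    alpha: float

    def may_stop(self, users_per_variant: int) -> bool:
        # The stopping rule depends only on sample size, never on the current p-value.
        return users_per_variant >= self.sample_size_per_variant


plan = ExperimentPlan(
    primary_metric="signup_conversion",
    secondary_metrics=("activation_rate",),
    relative_mde=0.14,
    sample_size_per_variant=85_000,
    alpha=0.05,
)

print(plan.may_stop(40_000))   # still collecting data
try:
    plan.alpha = 0.10          # loosening the threshold after launch fails
except FrozenInstanceError:
    print("The plan is locked.")
```

The value is less in the code than in the habit: the plan exists as an artifact with a timestamp before any result is visible.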

Teams that skip these steps don't run experiments. They run data collection exercises that produce numbers, and then they interpret those numbers in whatever way supports the decision they were already inclined to make. The statistical significance calculation at the end is real. The conclusion drawn from it may not be.

Modern experimentation platforms like GrowthBook automate the mechanics — the variance formulas, the p-value calculations, the SRM checks, the multiple comparison corrections. What they cannot automate is the judgment that precedes the math. That judgment is yours, and it determines whether the number the platform produces means anything at all.
