CUPED Explained: What is it, how does it work, and why does it matter

Experiments don't fail because your hypothesis was wrong — they fail because the noise in your data drowns out the signal before you collect enough users to tell the difference.

That's the real reason tests drag on for weeks, results hover just outside significance, and someone eventually suggests shipping anyway. CUPED is the technique that attacks this problem directly: instead of waiting for more data, it strips out the predictable noise before it inflates your sample size requirements.

This post is for engineers, PMs, and data teams who run A/B tests and want to move faster without sacrificing statistical rigor. No heavy math here — just the intuition behind how CUPED works and what it actually delivers in practice. Here's what you'll learn:

- Why standard A/B tests are structurally slower than they need to be, and what variance has to do with it

- How CUPED uses pre-experiment data to reduce noise and tighten your results without biasing your estimated lift

- What the business impact looks like when faster experiments compound across an entire program

- When CUPED delivers the most variance reduction, and where it won't help

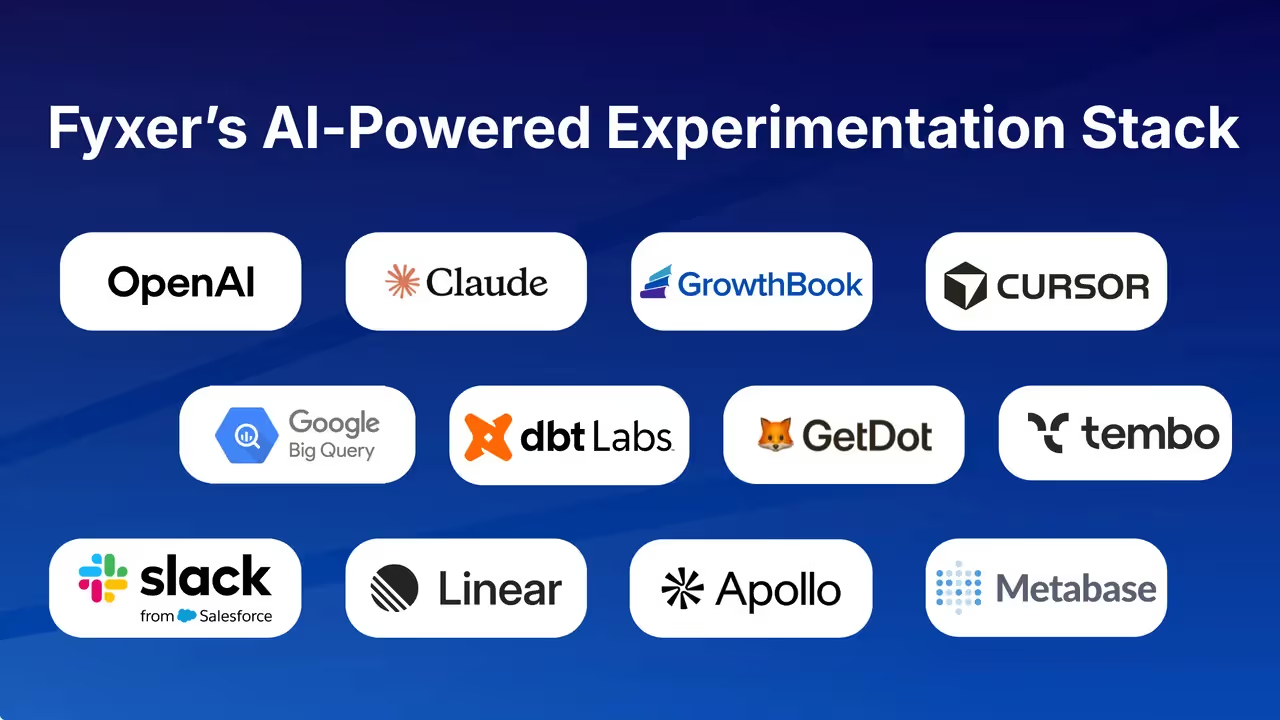

- What your team actually needs to get started, including what platforms like GrowthBook already handle for you

The article moves from problem to mechanism to impact to implementation — so if you already understand why variance is the bottleneck, you can skip ahead to how CUPED solves it.

Why standard A/B tests are slower than they need to be

Most teams running A/B tests have felt this at some point: an experiment that should be a quick call drags into its third week, the results hover tantalizingly close to significance, and eventually someone in a meeting asks whether you should just ship it anyway.

That feeling isn't bad luck or impatience. It's a structural problem baked into how standard experiments work — and it has a name: variance.

The sample size trap

Every A/B test is a signal-to-noise problem. The "signal" is the true effect of your change. The "noise" is all the random variation between users that has nothing to do with your treatment — differences in how often people visit, what they buy, what device they're on, whether they happened to land on your site during a sale.

The way you overcome noise in a standard experiment is by collecting more data. But the relationship between sample size and noise is punishing: standard error, the measure of how much your results might bounce around from sample to sample, shrinks only with the square root of your sample size.

That means to cut your noise in half, you don't need twice as many users. You need four times as many.
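
A quick numerical check of the square-root relationship, assuming a hypothetical per-user standard deviation of $50 (any value gives the same ratios):

```python
import math


def standard_error(sigma: float, n: int) -> float:
    """Standard error of a sample mean: sigma / sqrt(n)."""
    return sigma / math.sqrt(n)


sigma = 50.0  # hypothetical per-user standard deviation, in dollars

base = standard_error(sigma, 10_000)
doubled = standard_error(sigma, 20_000)
quadrupled = standard_error(sigma, 40_000)

print(f"10,000 users: SE = {base:.3f}")        # 0.500
print(f"20,000 users: SE = {doubled:.3f}")     # 0.354, only ~29% less noise
print(f"40,000 users: SE = {quadrupled:.3f}")  # 0.250, half the noise
```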

This is the sample size trap. You can't just "run the experiment a bit longer" as a neutral choice. Time is the direct cost you pay for variance. More variance means more users needed, which means more days or weeks before you can make a call with confidence.

Slow experiments are a throughput problem, not a statistics problem

Every extra week an experiment runs is a week where a decision is delayed: a feature not shipped, a pricing change not validated, a hypothesis not tested so the next one can begin.

This compounds at scale. Even companies with enormous user bases — the Facebooks and Amazons of the world — face this problem. Having hundreds of millions of users doesn't eliminate variance; it just means you're running more experiments simultaneously, each one still subject to the same noise-driven waiting game.

The teams that win at experimentation aren't necessarily the ones with the most traffic. They're the ones who've figured out how to extract decisions faster from the traffic they have.

And waiting doesn't even guarantee a result. As Statsig's engineering team has noted, waiting for more samples "delays your ability to make an informed decision, and it doesn't guarantee you'll observe a statistically significant result when there is a real effect." You can run an experiment to its planned sample size and still come up empty — not because nothing happened, but because variance swamped the signal.

Why small effects make this worse

Here's the cruelest part of the variance problem: the experiments that matter most at scale are often the hardest to run.

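At a company where core metrics are already well-optimized, a 0.1% improvement in conversion or revenue can represent enormous absolute value. But detecting a 0.1% lift reliably under high variance requires a massive sample. Because required sample size scales with the inverse square of the effect size, detecting a 0.1% lift takes roughly 2,500 times as many users as detecting a 5% lift, all else being equal.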

The smaller the real effect, the more noise drowns it out, and the longer you have to wait.
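
The standard two-sample sample size approximation makes that inverse-square relationship concrete. This is a sketch assuming a two-sided z-test on means at 5% significance and 80% power, with hypothetical revenue figures ($20 mean, $50 standard deviation per user):

```python
from statistics import NormalDist


def sample_size_per_arm(sigma: float, baseline_mean: float, relative_lift: float,
                        alpha: float = 0.05, power: float = 0.80) -> float:
    """Approximate users per arm to detect `relative_lift` with a two-sided z-test."""
    norm = NormalDist()
    z_alpha = norm.inv_cdf(1 - alpha / 2)   # ~1.96 for alpha = 0.05
    z_beta = norm.inv_cdf(power)            # ~0.84 for 80% power
    delta = relative_lift * baseline_mean   # absolute effect size to detect
    return 2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2


n_big_effect = sample_size_per_arm(sigma=50, baseline_mean=20, relative_lift=0.05)
n_small_effect = sample_size_per_arm(sigma=50, baseline_mean=20, relative_lift=0.001)

print(f"5% lift:   {n_big_effect:,.0f} users per arm")
print(f"0.1% lift: {n_small_effect:,.0f} users per arm")
print(f"ratio:     {n_small_effect / n_big_effect:,.0f}x")  # 2,500x
```

With these inputs the 5% lift needs roughly 39,000 users per arm and the 0.1% lift roughly 98 million. Note that the metric's variance (`sigma ** 2`) sits in the numerator: any reduction in variance cuts the required sample by the same proportion.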

This is why Statsig's practitioners describe a common failure mode where results sit "just barely outside the range where it would be treated as statistically significant." The effect is real. The experiment just didn't have enough power to surface it cleanly. So the team either waits longer, ships without confidence, or kills a change that actually worked.

This isn't a niche problem. The breadth of CUPED adoption — Microsoft, Netflix, Meta, Airbnb, Booking, DoorDash, TripAdvisor — signals that variance-driven slowness is something every serious experimentation program eventually runs into. The solution isn't to run bigger experiments. It's to run smarter ones by reducing the variance before it forces you to wait.

Pre-experiment behavior is noise you already know how to remove

Every user who enters your experiment arrives with a history. Some users have been buying from you for years and spend hundreds of dollars a month. Others signed up last week and haven't converted once.

When you randomly assign these users to treatment and control, the randomization is fair — but the noise those users bring with them is enormous. And that noise is the core problem CUPED solves.

Why users arrive at experiments unequal

Randomization guarantees that, on average, treatment and control groups will be balanced. But "on average" is doing a lot of work. In any given experiment, high-spending users will land slightly more in one group than the other, just by chance.

Because individual spending is highly correlated from one period to the next (a user who spent $400 last month is likely to spend something close to $400 next month, regardless of what experiment they're in), these random imbalances create real noise in your results.

This isn't a flaw in your randomization. It's just the natural heterogeneity of users. The problem is that a standard A/B test has no way to distinguish this background noise from the effect of your treatment. It sees all of it as variance, and variance is what forces you to wait for larger and larger samples before you can trust your results.

Subtracting what you already know to isolate the treatment signal

CUPED's insight is straightforward: if you can predict some of a user's post-experiment behavior from their pre-experiment behavior, you can subtract that predictable part before comparing groups. What's left is a cleaner signal — the part of each user's outcome that isn't explained by who they already were when they entered the experiment.

In practice, this means taking each user's post-experiment outcome and adjusting it based on their pre-experiment behavior. The adjustment is scaled by how strongly pre-experiment behavior predicts post-experiment behavior — the stronger that relationship, the more noise gets removed.

When you compare these adjusted outcomes across treatment and control, the pre-existing baseline differences have been stripped out. You're comparing apples to apples in a way that a standard test never quite achieves.

The pre-experiment data used for this adjustment is called the covariate. It must be measured before the experiment begins — it can't be influenced by the treatment in any way. The most natural choice, and the most commonly used one, is the metric's own pre-period value.

If you're measuring post-experiment revenue, you use pre-experiment revenue as the covariate. Past behavior is typically the strongest predictor of future behavior, which is exactly what makes this work.

What "variance reduction" actually means in practice

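It's worth being precise about what CUPED changes and what it doesn't. CUPED does not change what you are estimating. If your treatment produces an 8% revenue increase, the CUPED-adjusted analysis is still estimating that 8% increase, with no bias toward a bigger or smaller number. (In any single experiment the point estimate can shift slightly, because CUPED corrects for chance imbalances in pre-experiment behavior.) What changes is the uncertainty around that estimate: the width of the confidence interval.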

Think of it as narrowing the error bars on your result. A tighter distribution around the mean gives your test more statistical power: enough to reach significance with fewer users, or to detect smaller effects with the same number of users. The effect size stays the same; the noise around it shrinks.

The magnitude of that shrinkage depends entirely on how correlated pre- and post-experiment behavior actually are. For high-frequency engagement metrics where user behavior is consistent over time, the gains can be substantial.

Netflix reported roughly 40% variance reduction for some key engagement metrics. Microsoft found that for one product team, CUPED was equivalent to adding 20% more traffic to their analysis. These aren't theoretical numbers — they represent real experiments running faster and reaching conclusions sooner.
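
The original paper shows that, with θ chosen optimally, the adjusted metric's variance is (1 − ρ²) times the original, where ρ is the correlation between pre- and post-period values. This back-of-envelope sketch, which assumes large samples, translates the figures above into those terms:

```python
import math


def variance_reduction(rho: float) -> float:
    """Share of variance CUPED removes when corr(pre, post) = rho."""
    return rho ** 2


def equivalent_traffic(reduction: float) -> float:
    """Traffic multiple an unadjusted test would need to match CUPED's precision."""
    return 1 / (1 - reduction)


# A 40% reduction (the Netflix figure above): implied correlation and traffic equivalent.
print(f"implied rho: {math.sqrt(0.40):.2f}")                   # 0.63
print(f"traffic equivalent: {equivalent_traffic(0.40):.2f}x")  # 1.67x

# "20% more traffic" (the Microsoft figure above): implied variance reduction.
print(f"implied reduction: {1 - 1 / 1.20:.1%}")                # 16.7%
```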

What pre-experiment data CUPED actually uses

The covariate doesn't have to be the metric's own pre-period value — user demographics, past engagement on other dimensions, or other behavioral signals can all work. But the metric's own pre-period value is the standard approach because it captures the most relevant history and tends to have the highest correlation with future outcomes.

GrowthBook's implementation uses the metric itself from the pre-exposure period as the default covariate for each metric being analyzed. This keeps things modular: the CUPED adjustment for revenue is calculated independently from the adjustment for clicks, so adding a new metric to an experiment doesn't disturb the adjustments already in place for others.

The key constraint is timing. The covariate must be fully determined before the experiment starts. Any data collected after exposure begins is potentially contaminated by the treatment — and a contaminated covariate defeats the entire purpose.
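
A sketch of computing the covariate against each user's own exposure time, assuming a hypothetical event format of `(user_id, timestamp, value)` tuples and the 14-day lookback GrowthBook uses by default. In a warehouse this would normally be a SQL query; Python just keeps the logic visible:

```python
from datetime import datetime, timedelta


def pre_period_totals(events, exposures, lookback_days=14):
    """Sum each exposed user's metric over the window just before first exposure.

    events:    iterable of (user_id, timestamp, value) tuples
    exposures: dict mapping user_id -> first exposure timestamp
    """
    lookback = timedelta(days=lookback_days)
    totals = {user: 0.0 for user in exposures}  # no history in the window -> 0
    for user, ts, value in events:
        exposed_at = exposures.get(user)
        if exposed_at is None:
            continue  # user never entered the experiment
        # Strictly before exposure: anything at or after it could reflect the treatment.
        if exposed_at - lookback <= ts < exposed_at:
            totals[user] += value
    return totals


exposures = {"u1": datetime(2024, 6, 15), "u2": datetime(2024, 6, 15)}
events = [
    ("u1", datetime(2024, 6, 10), 20.0),  # inside the 14-day window: counted
    ("u1", datetime(2024, 6, 15), 99.0),  # at exposure: excluded
    ("u1", datetime(2024, 5, 1), 50.0),   # older than the window: excluded
    ("u3", datetime(2024, 6, 12), 5.0),   # not in the experiment: ignored
]
print(pre_period_totals(events, exposures))  # {'u1': 20.0, 'u2': 0.0}
```

Anchoring the window to each user's first exposure, rather than to the experiment's start date, keeps late-enrolling users from having treatment-period activity leak into their covariate.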

The compounding business impact: smaller samples, faster decisions, more experiments

Statistical efficiency is nice in theory. But what does a 40% variance reduction actually mean for a product team trying to ship faster? The answer is more concrete than most people expect — and it compounds in ways that go well beyond any single experiment.

From variance reduction to shorter runtimes

The mechanical link between CUPED and speed runs through sample size. Every experiment needs a minimum number of users before results are trustworthy, and that minimum is driven by two variables: the smallest effect you want to detect, and the variance in your metric. CUPED attacks variance directly.

That matters because sample size is almost always a time problem. As Statsig puts it, sample size is "usually proportional to the enrollment window of your experiment." Waiting for more users means waiting more calendar days. For teams outside FAANG-scale traffic, that wait is often brutal — a standard experiment can easily take over a month to collect sufficient data, and sometimes several months.
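
Because the required sample is proportional to variance, a variance reduction shortens enrollment by the same proportion. A rough sketch, assuming a constant number of newly enrolled users per day (real enrollment usually slows as returning users are already bucketed) and hypothetical traffic numbers:

```python
import math


def runtime_days(users_per_arm: float, arms: int, daily_enrollment: float,
                 variance_reduction: float = 0.0) -> int:
    """Days to enroll the required sample, scaled down by any variance reduction."""
    required = users_per_arm * arms * (1 - variance_reduction)
    return math.ceil(required / daily_enrollment)


# Hypothetical: 40,000 users per arm, two arms, 2,500 new users enrolled per day.
print(runtime_days(40_000, 2, 2_500))                           # 32
print(runtime_days(40_000, 2, 2_500, variance_reduction=0.40))  # 20
```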

An Optimizely example is worth anchoring here: a 41% variance reduction turned a non-significant result (p=0.09) into a significant one (p=0.03) with the exact same sample already collected.

That's zero additional wait time to reach a decision — the data was already there, the variance reduction just made it readable. The directional point generalizes: less variance means shorter experiments, and shorter experiments mean faster decisions.
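
Those p-values are consistent with the simple arithmetic of variance reduction. A sketch, assuming a normal approximation and an unchanged point estimate (in practice the CUPED estimate can move slightly), so that the z-statistic grows by a factor of 1/√(1 − reduction):

```python
import math
from statistics import NormalDist


def p_after_reduction(p_before: float, reduction: float) -> float:
    """Two-sided p-value after shrinking variance by `reduction`, same point estimate."""
    norm = NormalDist()
    z_before = norm.inv_cdf(1 - p_before / 2)      # z-score implied by the old p-value
    z_after = z_before / math.sqrt(1 - reduction)  # standard error shrinks by sqrt(1 - r)
    return 2 * (1 - norm.cdf(z_after))


print(f"{p_after_reduction(0.09, 0.41):.3f}")  # 0.027, which rounds to the reported 0.03
```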

What running more experiments actually compounds into

The real payoff isn't any single experiment finishing a few weeks earlier. It's what happens when your entire experimentation program runs at higher throughput over a year or more.

Floward, a flower and gifting platform operating across nine markets, ran over 200 live experiments across web, iOS, and Android within nine months of migrating to GrowthBook. The result wasn't just more experiments — it was double-digit year-over-year sales revenue growth.

Experiment setup time dropped from three days to under 30 minutes, and the team moved from weekly reports to daily monitoring. That's a fundamentally different relationship with data.

Breeze Airways offers a similar data point: the airline doubled its testing throughput without adding headcount and unlocked over $1 million in incremental monthly revenue from experiments. These outcomes aren't attributable to CUPED alone — they reflect the broader infrastructure of a high-velocity experimentation program.

But CUPED is one of the core mechanisms that makes high velocity achievable, because it removes the sample size ceiling that forces teams to slow down.

As GrowthBook's documentation puts it: "Each experiment may not have a large effect on your metrics, but many experiments might." Individual wins are small. Cumulative wins across hundreds of experiments are where business impact actually lives.

When experiments get cheaper, teams stop treating them as high-stakes bets

There's a cultural shift that happens when experiments get faster, and it's worth naming directly. When an experiment takes three months, it becomes a high-stakes bet — teams over-invest in hypothesis refinement, under-invest in iteration, and treat inconclusive results as failures. When an experiment takes two weeks, it becomes a cheap question.

Teams ask more of them, tolerate more ambiguity, and learn faster.

Floward's experience illustrates this concretely. Product and commercial teams moved to self-serve reporting, removing data scientists as bottlenecks. Experimentation became a daily discipline rather than a quarterly project. As their data scientist Eslam Samy put it: "GrowthBook lets us build experiments exactly how we want."

That's the compounding advantage CUPED enables — not just faster individual experiments, but a team that runs more experiments, learns more per quarter, and builds institutional knowledge that makes every future hypothesis sharper. The statistical efficiency is real. The organizational payoff is what makes it worth implementing.

When CUPED delivers the most lift (and when it doesn't)

CUPED is not a universal variance-reduction machine. Its power scales directly with one thing: how well pre-experiment behavior predicts post-experiment behavior.

When that correlation is strong, the gains are real — the Netflix and Microsoft numbers cited earlier reflect conditions where that correlation held. When it's weak, CUPED has little to subtract, and variance reduction approaches zero.

Understanding where that line falls is what separates teams that get meaningful lift from CUPED and teams that implement it and wonder why nothing changed.

The correlation requirement — what actually drives variance reduction

The core mechanism of CUPED is adjustment: it takes the covariate, the pre-experiment behavioral signal you already have for each user, and uses it to remove predictable noise from the outcome measurement.

The more predictable that pre-experiment behavior is, the more noise gets removed, and the tighter your metric distribution becomes. If pre-experiment behavior doesn't reliably predict what a user will do during the experiment, CUPED has nothing meaningful to adjust against — and you're left with roughly the same variance you started with.

This means the magnitude of variance reduction is not something you can assume in advance. It depends on your specific metric, your user population, and how much behavioral history you have, so expect your own numbers to differ from published benchmarks.

Where CUPED shines — high-frequency engagement metrics

CUPED works best when users generate the metric you're measuring frequently. Session counts, click-through rates, page views, daily active usage, revenue for repeat purchasers — these are the metrics where CUPED earns its reputation.

The reason is straightforward: frequent behavior creates a stable pre-experiment baseline. A user who visited your product 15 times in the two weeks before an experiment is very likely to visit frequently during it too. That predictability gives CUPED a reliable signal to adjust against.

Rare-event metrics are a different story. One-time purchases, account cancellations, infrequent conversions — these produce sparse pre-experiment data, which means weak correlation, which means minimal variance reduction.

As GrowthBook's documentation puts it directly: CUPED "tends to be very powerful for metrics that are frequently produced by users (e.g. engagement measures), but can be less powerful if your metric is rare." If your primary success metric is a low-frequency event, CUPED may not move the needle enough to justify the implementation overhead.
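
A toy model shows why frequency matters. Assume, purely for illustration, that each user has a stable daily event rate, that rates vary across users with mean m and variance v, and that events arrive as a Poisson process given each user's rate. Counts over a window of t days then have variance t·m + t²·v, and two non-overlapping windows of t₁ and t₂ days have covariance t₁·t₂·v, which gives the correlation computed below:

```python
import math


def window_correlation(mean_rate: float, rate_variance: float,
                       pre_days: float, post_days: float) -> float:
    """Correlation of a user's event counts in a pre-period and an experiment period.

    Toy model: each user has a fixed daily rate (mean `mean_rate`, variance
    `rate_variance` across users) and events are Poisson given that rate.
    """
    cov = pre_days * post_days * rate_variance
    var_pre = pre_days * mean_rate + pre_days ** 2 * rate_variance
    var_post = post_days * mean_rate + post_days ** 2 * rate_variance
    return cov / math.sqrt(var_pre * var_post)


# Hypothetical metrics over a 14-day pre-period and a 14-day experiment.
frequent = window_correlation(mean_rate=1.0, rate_variance=0.5, pre_days=14, post_days=14)
rare = window_correlation(mean_rate=0.01, rate_variance=0.0001, pre_days=14, post_days=14)
print(f"sessions:  rho={frequent:.2f}, variance reduction={frequent ** 2:.0%}")
print(f"purchases: rho={rare:.2f}, variance reduction={rare ** 2:.1%}")
```

With these made-up inputs the session metric's correlation is about 0.88 (roughly 77% variance reduction), while the purchase metric's is about 0.12 (about 1.5%). Stretching the purchase metric's pre-period to 56 days raises its correlation to roughly 0.21. The model ignores behavior drifting over time, which is the main reason a shorter, more recent window can predict better for high-frequency metrics.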

The new-user problem — when there's no history to use

The structural limitation of CUPED is simple: it requires pre-experiment behavioral data. New users don't have any. If you're running an experiment that targets first-time visitors, recently acquired users, or any cohort that hasn't yet generated meaningful activity in your product, CUPED cannot adjust for their baselines — because there are no baselines to use.

This isn't a flaw in the method; it's a boundary condition. Warehouse-native experimentation platforms typically allow teams to disable CUPED for specific metrics where pre-experiment values are never collected, which is a practical safeguard against applying the technique where it won't help.

Before enabling CUPED on a metric, it's worth asking: do the users in this experiment have enough history for pre-experiment behavior to be meaningful?

Three requirements that determine whether CUPED can function at all

First, you need a pre-experiment data window. GrowthBook defaults to 14 days of pre-exposure data, customizable at the organization, metric, or experiment level.

For low-frequency metrics, a longer window helps capture enough behavioral signal to be useful; for high-frequency metrics, recent behavior tends to be more predictive, so a shorter window may actually perform better while also being more query-efficient.

Second, the covariate you use must be unaffected by the treatment. This is a non-negotiable statistical requirement. Using a metric that could itself be influenced by the experiment introduces bias rather than reducing variance. The safest and most common choice — and the one GrowthBook uses by default — is the metric itself from the pre-exposure period.

Third, be aware that not every metric type is compatible. Quantile metrics, certain ratio metrics, and metrics sourced from some data pipelines may fall outside what a given implementation supports. Knowing your metric's characteristics before enabling CUPED saves you from a false sense of coverage.

The infrastructure CUPED actually requires is probably already there

CUPED has been around since 2013, when researchers at Microsoft — Deng, Xu, Kohavi, and Walker — published the original paper. In the decade-plus since, it's moved from academic research into production infrastructure at Netflix, Meta, Airbnb, Booking.com, DoorDash, and Faire.

That lineage matters for one practical reason: if you're evaluating whether CUPED is worth implementing for experiments on returning users, the answer is usually yes, and the implementation risk is far lower than you might assume.

Pre-experiment behavioral data: the one non-negotiable input

The core requirement is straightforward: pre-experiment behavioral data for the same users who will be enrolled in your experiment, stored somewhere queryable at analysis time. The covariate CUPED uses is typically the metric itself — revenue, engagement, sessions — measured before the experiment starts. If you're already tracking those metrics in a data warehouse, you likely have what you need.

The critical word is "queryable." It's not enough for the data to exist at ingestion; your analysis pipeline needs to be able to reach back to pre-experiment windows when it's time to compute results.

This is where GrowthBook's warehouse-native approach has a natural advantage — when your experiment analysis runs directly against your data warehouse, connecting pre- and post-experiment periods is a query, not an integration project.

The Floward team, running GrowthBook against AWS Redshift, is a concrete example: experiment setup time dropped from three days to under 30 minutes precisely because the data pipeline was already in place.

The boundary conditions — new users without behavioral history and low-frequency metrics — are covered in detail in the section on when CUPED delivers lift. For high-frequency engagement and revenue metrics on returning users, the data requirements are almost always already met.

You probably don't need to build this from scratch

The most reassuring thing about CUPED in 2025 is that the hard statistical work is done and packaged into platforms teams are likely already using or evaluating. The technique has moved from a research paper into standard experimentation infrastructure, which means teams don't need to hire a statistician to implement it from scratch.

Statsig describes CUPED as "one of the most powerful algorithmic tools for increasing the speed and accuracy of experimentation programs" — and that framing reflects where the industry has landed. This isn't a niche optimization for teams running thousands of experiments a week. It's a baseline capability that meaningfully improves results for any team running experiments on returning users.

How post-stratification extends CUPED beyond a single covariate

Standard CUPED uses one pre-experiment signal per metric — typically the metric's own pre-period value. GrowthBook's CUPEDps goes a step further, adding stratification across user dimensions (device type, geography, user segment) to capture additional variance that the pre-period metric alone doesn't explain.

There's a practical reason this matters for teams running multiple metrics in the same experiment. If you adjust for several covariates simultaneously, adding a new metric to an experiment can shift the results for metrics you've already analyzed — which creates the uncomfortable situation where your revenue result changes the moment you add a click-through metric to the same test.

CUPEDps sidesteps this by processing each metric independently, so your results stay stable regardless of what else is in the experiment. It's a concrete example of what "built-in CUPED" actually means: not just a checkbox, but an implementation with specific design tradeoffs that matter at scale.
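
The stratification half of that idea can be sketched on its own: compute the treatment-minus-control difference within each stratum, then weight each difference by the stratum's share of all users. This is a generic post-stratification illustration, not GrowthBook's CUPEDps estimator; the device strata and values are hypothetical, and in practice the values would already be CUPED-adjusted:

```python
from collections import defaultdict
from statistics import fmean


def post_stratified_lift(rows):
    """Treatment-minus-control difference, reweighted by stratum.

    rows: iterable of (arm, stratum, value) with arm in {"control", "treatment"}.
    Every stratum must appear in both arms, otherwise fmean raises StatisticsError.
    """
    cells = defaultdict(list)
    stratum_sizes = defaultdict(int)
    for arm, stratum, value in rows:
        cells[(arm, stratum)].append(value)
        stratum_sizes[stratum] += 1
    total = sum(stratum_sizes.values())
    lift = 0.0
    for stratum, size in stratum_sizes.items():
        weight = size / total  # stratum's share of all users, both arms pooled
        diff = fmean(cells[("treatment", stratum)]) - fmean(cells[("control", stratum)])
        lift += weight * diff
    return lift


rows = [
    ("control", "mobile", 10.0), ("control", "mobile", 12.0), ("control", "desktop", 50.0),
    ("treatment", "mobile", 11.0), ("treatment", "desktop", 51.0), ("treatment", "desktop", 53.0),
]
naive = (fmean([v for a, _, v in rows if a == "treatment"])
         - fmean([v for a, _, v in rows if a == "control"]))
print(f"naive lift: {naive:.2f}")                            # 14.33, driven by device mix
print(f"post-stratified: {post_stratified_lift(rows):.2f}")  # 1.00
```

The naive comparison is inflated because treatment happened to draw more high-spending desktop users; comparing within strata removes that imbalance.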

Variance is the bottleneck. CUPED is the lever. Here's where to start.

The honest precondition: when CUPED earns its setup cost and when it doesn't

CUPED is worth enabling when two conditions are true: your experiment includes returning users who have generated meaningful behavioral history, and your primary metrics are measured frequently enough to produce a stable pre-experiment baseline. When both conditions hold, the variance reduction is real and the speed gains compound across your entire program.

When those conditions don't hold — new user experiments, recently launched products, low-frequency conversion metrics — CUPED has little to work with. The technique won't hurt your results in those cases, but it won't help either.

For those cohorts, the more productive investments are sequential testing (which lets you make decisions earlier without inflating false positive rates) and stratified randomization (which balances groups on known dimensions at assignment time rather than adjusting for them at analysis time). Both are worth understanding as complements to CUPED rather than replacements.

Confirm the data exists, then let the platform do the statistical work

The order of operations for getting CUPED working is simpler than most teams expect. Start by confirming that your data warehouse contains pre-experiment behavioral data for the users you're targeting — at least 14 days of history on the metrics you plan to analyze. If that data exists and is queryable, the hard part is already done.

From there, verify that your experimentation platform supports CUPED natively. If it does, enabling it is typically a configuration choice, not an engineering project. Platforms with warehouse-native architectures handle the pre-period lookback, the covariate calculation, and the adjusted variance estimation automatically — the statistical machinery runs in the background without requiring manual implementation.

Enable CUPED on your highest-frequency metrics first. Those are the metrics where pre-experiment behavior is most predictive, which means the variance reduction will be most visible.

Run your next experiment with CUPED enabled and compare the confidence interval width to your previous results on the same metric. That comparison is your proof of concept.

What to do next:

Start with one question: do the users in your highest-priority experiment have at least 14 days of pre-experiment behavioral history in your warehouse? If yes, CUPED can function. If your platform supports it natively (GrowthBook enables it automatically for qualifying metrics), there is no implementation work required.

Enable it, run your next experiment, and check how much the confidence intervals on your key metrics tighten.

If your users lack pre-experiment history (new user experiments, recently launched products), CUPED is not the right tool for that cohort; sequential testing and stratified randomization are the relevant alternatives there.

The infrastructure is probably already there. The only question is whether you've turned it on.
